Most Surfer SEO reviews will tell you whether the tool works. This one asks a harder question: works for what, exactly, and compared to what alternative. After enough time with Surfer to have changed my mind about it twice, here’s what I actually think — about the tool, about optimization scores, and about whether any of this still makes sense in a search landscape that looks nothing like the one Surfer was built for.

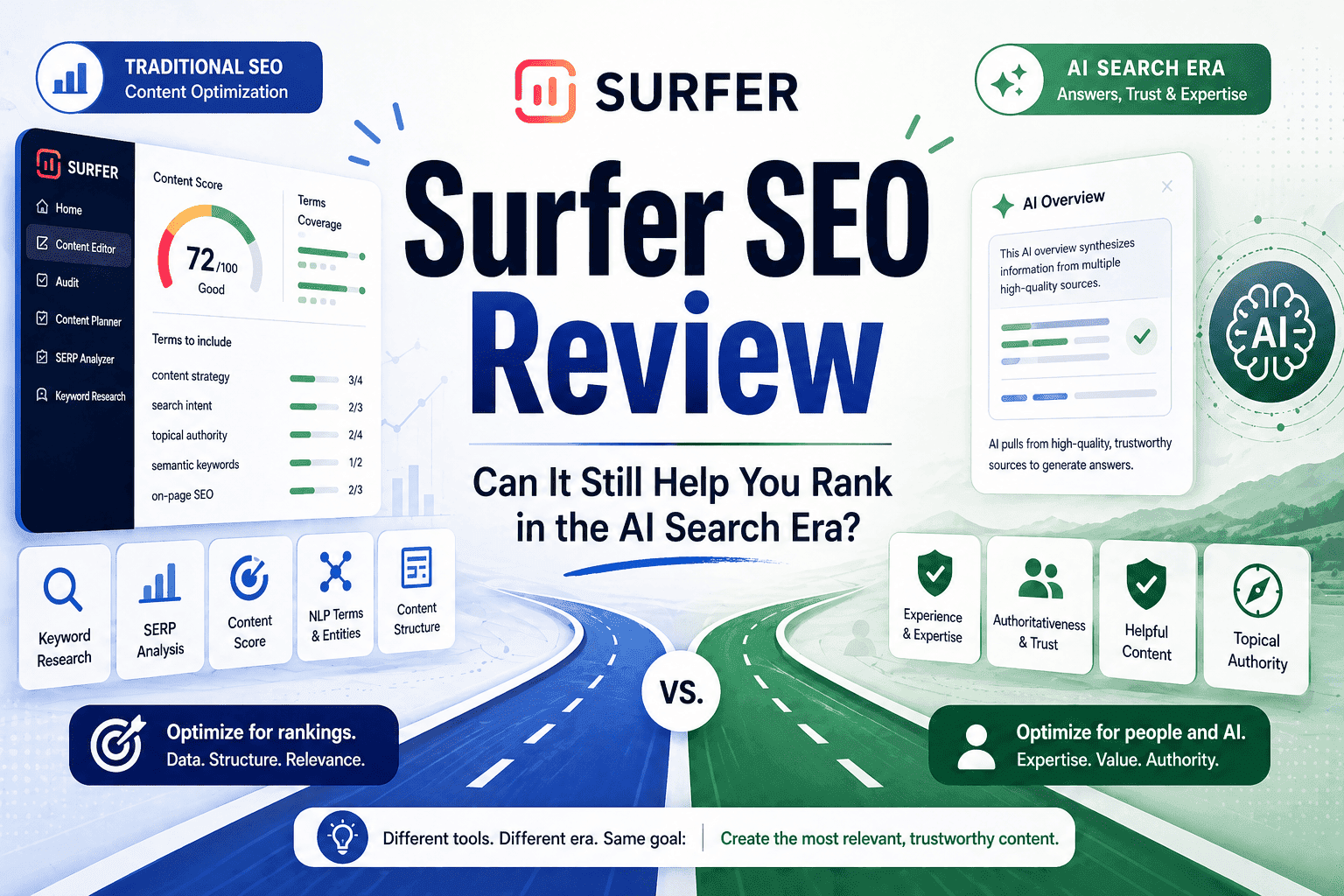

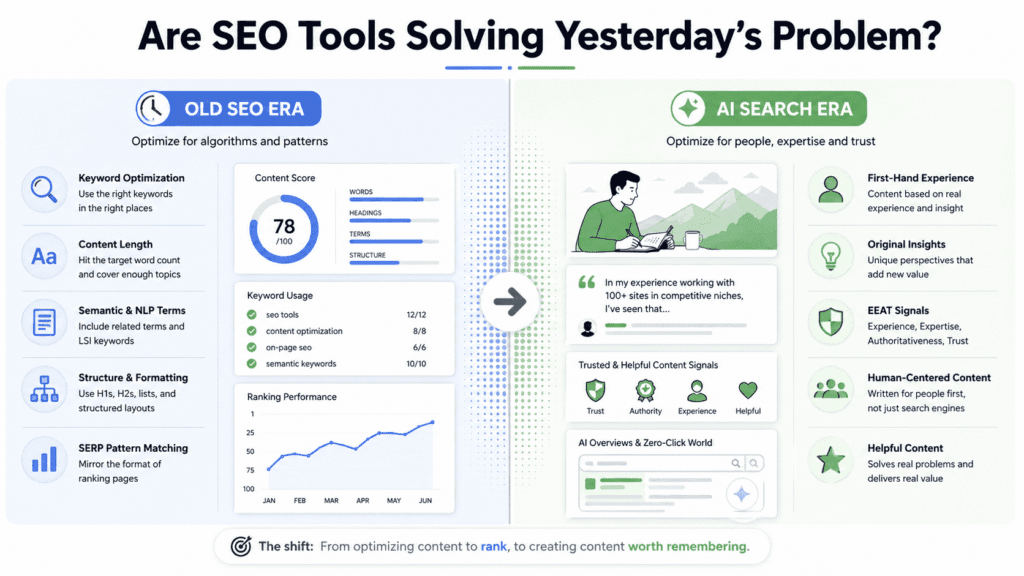

Before Reviewing Surfer, It’s Fair to Ask Whether SEO Tools Are Solving Yesterday’s Problem

Most Surfer SEO reviews skip straight to the features. I want to do something different first — ask whether the problem Surfer was built to solve is still the problem we actually have.

When tools like Surfer came up, the game was fairly legible. Google’s algorithm rewarded pages that had the right words in the right places, covered topics with enough depth, and matched the structural patterns of whatever was already ranking. Reverse-engineer those patterns, apply them to your content, and you had a reasonable shot at moving up. It wasn’t glamorous, but it worked.

That’s still partially true. But “partially” is doing a lot of work in that sentence.

The shift that’s happened — and I think a lot of people in SEO are dancing around this rather than saying it plainly — is that the signals these tools measure have become much easier to manufacture. An AI can hit every semantic keyword, match the average word count, cover the subtopics the top-ranking pages cover, and produce something that looks optimized in every measurable way while having nothing original to say. The metrics haven’t changed. What’s changed is how cheaply those metrics can be gamed.

Google’s response to this has been messy and imperfect, but the direction is clear. The helpful content updates, the increasing weight on first-hand experience, the way E-E-A-T has evolved from a quality guideline into something with actual ranking implications: all of it points toward search rewarding content that’s harder to fake. Content that comes from real experience, real opinion, real knowledge of a subject.

Here’s where it gets interesting for a tool like Surfer: none of this necessarily makes it irrelevant. But it does reframe what you should be using it for. If you’re reaching for Surfer to tell you what to write, you’re probably using it wrong. If you’re using it to pressure-test something you already know how to say — checking whether you’ve covered the ground, whether the structure holds up — that’s a different thing entirely.

That tension is what I kept coming back to while putting together this Surfer SEO review. Not “does it work” as a binary, but does it make your content better in ways that actually matter now — or does it just make it look better by the standards of three years ago?

What Surfer SEO Does in 2026: Features, Changes, and What’s Been Overhauled

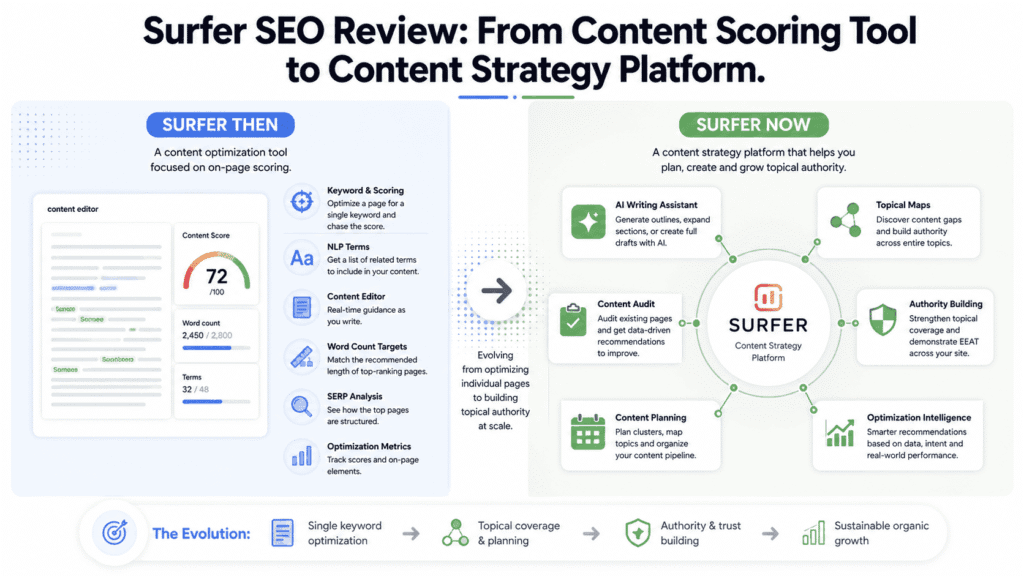

If you’ve heard of Surfer but never used it, you probably have a rough mental model: paste in a keyword, get a score, chase the score. That’s not wrong, but it’s about three years out of date.

The scoring editor is still there, and it’s still the thing most people spend the most time in. You pick a keyword, Surfer scrapes the top-ranking pages for that query, runs them through NLP analysis, and produces a target document — recommended word count, a list of terms to include, suggested headings. Write against that template, and a score climbs toward green. The core loop hasn’t changed. What’s changed is how much Surfer has built around it.

There’s now a full AI writing layer inside the editor. You can generate outlines, expand sections, or just hand the whole brief to the AI and walk away with a draft. Plenty of SEO tools have bolted on AI features in the last two years and the results are usually forgettable. Surfer’s version is marginally more interesting because the generated content gets scored against the same optimization guidelines as anything a human would write — so at least the AI isn’t just producing fluent nonsense, it’s producing fluent nonsense that covers the right subtopics. That’s a low bar, but it’s a real one.

The feature that’s actually changed how people use the tool day-to-day is Topical Maps. The pitch is that Surfer can look at your site, work out which topics you’re missing, and hand you a content plan that builds authority across a whole subject area rather than just optimizing individual pages in isolation. I’ve used it, and my honest reaction was: useful, but blunt. It’s good at spotting obvious gaps. It’s much less good at understanding what’s strategically interesting about your specific situation. If you’re in a competitive, well-mapped niche, the suggestions will feel familiar. If your niche is narrow or genuinely specialist, some of what it recommends won’t make sense.

Something I didn’t expect when revisiting Surfer for this review: the interface has gotten friendlier in ways that aren’t entirely an improvement. Earlier versions exposed more of the raw competitive data — you could see exactly what the top ten pages were doing and make your own calls about what to take from it. Now there’s more hand-holding, more explanation, more “here’s why this matters.” For someone new to content optimization that’s probably welcome. For someone who already knows what NLP entities are and why they matter, it can feel like the tool is talking down to you slightly.

On pricing — it’s not cheap, and the structure nudges you toward upgrading in ways that feel designed rather than incidental. The entry plan is workable if you’re writing for one site at a modest pace. But the features that make Surfer genuinely useful at scale — the audit tool, the topical mapping, higher article limits — sit behind the pricier tiers. That’s not unusual for SaaS tools, but it’s worth going in with clear eyes about which plan you actually need rather than which one looks reasonable on the pricing page.

One thing any honest Surfer SEO review should say plainly: this is not a full SEO platform. No keyword research to speak of. No technical auditing, no crawling, no backlink data. If you come in expecting Ahrefs with a content editor attached, you’ll be frustrated. What Surfer actually is — at its best — is a tool for thinking through the structure and coverage of a piece of content before and while you write it. That’s a specific job. It does that job reasonably well. Whether that job is worth the monthly cost depends almost entirely on how central content is to your growth strategy.

If keyword research and technical auditing are what you’re actually after, a full SEO platform like Semrush is a better starting point than Surfer.

The Strange Problem With “Optimization Scores” Nobody Talks About Enough

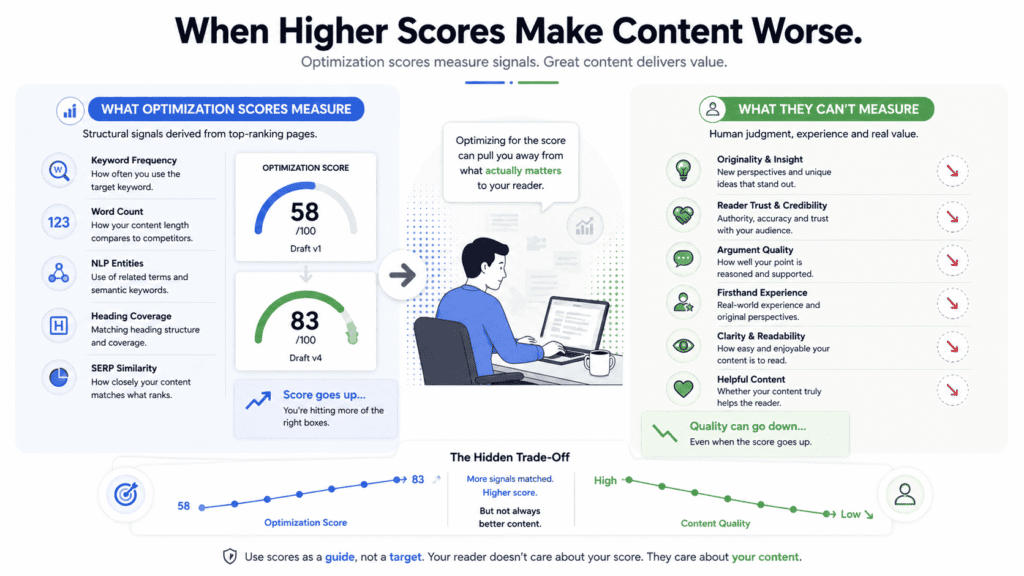

The score is the most seductive thing about Surfer. It’s also, in my experience, the thing most likely to quietly make your content worse.

I want to be specific about why, because the usual critique — “don’t just chase the number” — is true but not particularly useful. Of course you shouldn’t blindly chase the number. The problem is that the score is designed to be chased. It sits in the corner of your screen, it updates in real time, it has colors. Green means good. It’s doing everything short of playing a sound effect when you hit 80. Writing against that kind of live feedback without letting it redirect your decisions takes a level of active resistance that most people — most professionals, under normal working conditions — aren’t going to maintain for an entire draft.

So what actually happens is this. You write something you think is good. You run it through Surfer. The score comes back lower than you expected: say, 58. You look at the missing terms, the sections flagged as too short, the recommended headings you skipped. And you start editing. Not because the content needed those things, but because the score said so. By the end, the score is 83 and the draft is noticeably worse: more bloated, less direct, the original line of thinking interrupted by paragraphs that exist purely to satisfy a tool. Nobody catches this because the number went up.

Here’s the part that almost never gets said plainly in a Surfer SEO review: the score isn’t measuring quality. It’s measuring similarity to whatever is currently ranking. Those two things overlap often enough to make the score feel authoritative. They diverge often enough to make following it blindly genuinely risky. Surfer has no way of knowing whether your argument holds together, whether your explanation lands, whether the 200 words you just added to hit the recommended count are doing anything useful or just sitting there. It knows you used the target keyword four times instead of seven. It knows your word count is below the average. It does not know whether any of this matters for the actual human reading the piece.
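To make that concrete, here is a toy sketch of what a similarity-style score could look like. It is not Surfer’s algorithm, which isn’t public. The function name, the 50/50 weighting, and the sample terms and word counts are all assumptions made up for illustration.

```python
def toy_similarity_score(draft_terms, draft_words, competitor_terms, competitor_word_counts):
    """Score a draft from 0 to 100 by how closely it resembles competitor pages.

    Hypothetical illustration only: Surfer's real scoring is not public.
    """
    # Term coverage: share of the competitors' terms that also appear in the draft.
    wanted = set(competitor_terms)
    coverage = len(wanted & set(draft_terms)) / len(wanted) if wanted else 0.0

    # Length match: draft length relative to the average competitor length, capped at 1.
    avg_words = sum(competitor_word_counts) / len(competitor_word_counts)
    length_match = min(draft_words / avg_words, 1.0)

    # Arbitrary 50/50 weighting, assumed for this sketch.
    return round(100 * (0.5 * coverage + 0.5 * length_match))


# Made-up competitor data: terms seen across ranking pages and their word counts.
competitor_terms = ["content score", "nlp terms", "word count", "pricing"]
competitor_word_counts = [2000, 2400, 1600]  # average is 2000

# A tight draft that skips two of the "expected" terms.
focused = toy_similarity_score(
    ["content score", "pricing"], 1200, competitor_terms, competitor_word_counts
)

# The same draft after adding the missing terms and 800 words of padding.
padded = toy_similarity_score(
    competitor_terms, 2000, competitor_terms, competitor_word_counts
)

print(focused, padded)  # 55 100
```

Nothing in that calculation looks at whether the extra 800 words helped the reader. The number rises because the draft now resembles the competitors more closely, which is the only thing a score of this kind can see.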

And this is where it gets structurally interesting. The score inherits the biases of whatever content is winning right now — which isn’t always the best content, or even particularly good content. Sometimes pages rank because of domain authority, or age, or backlink profiles that have nothing to do with what’s on the page. Surfer can’t see any of that. It sees the patterns and reports them as targets. Which means if mediocre content happens to be structurally consistent, you’ll get a detailed blueprint for producing something mediocre and structurally consistent. The score will be excellent. The content will be fine. Fine is a bad place to aim.

There’s a version of using Surfer where none of this matters much — where you treat the score as a loose sense-check at the end of a draft rather than a live target while you’re writing. Check coverage, catch obvious gaps, move on. That’s a legitimate workflow. But that’s not how most people use it, and it’s not really how the product is set up to be used either. The whole interface orients you toward the score from the moment you open a document. Using it as a background reference requires actively working against the grain of the tool, which is a strange thing to have to say about software you’re paying for monthly.

I don’t think this is a fixable problem, either — not without Surfer fundamentally reconsidering what the score represents. Any optimization framework built on competitive pattern-matching will have this ceiling. The tool can tell you what the winners look like. It cannot tell you whether looking like the winners is enough anymore — and in 2026, increasingly, it isn’t.

AI Has Flooded Search With Mediocre Content — Which Is Exactly Why a Structural Tool Like Surfer Still Has a Place

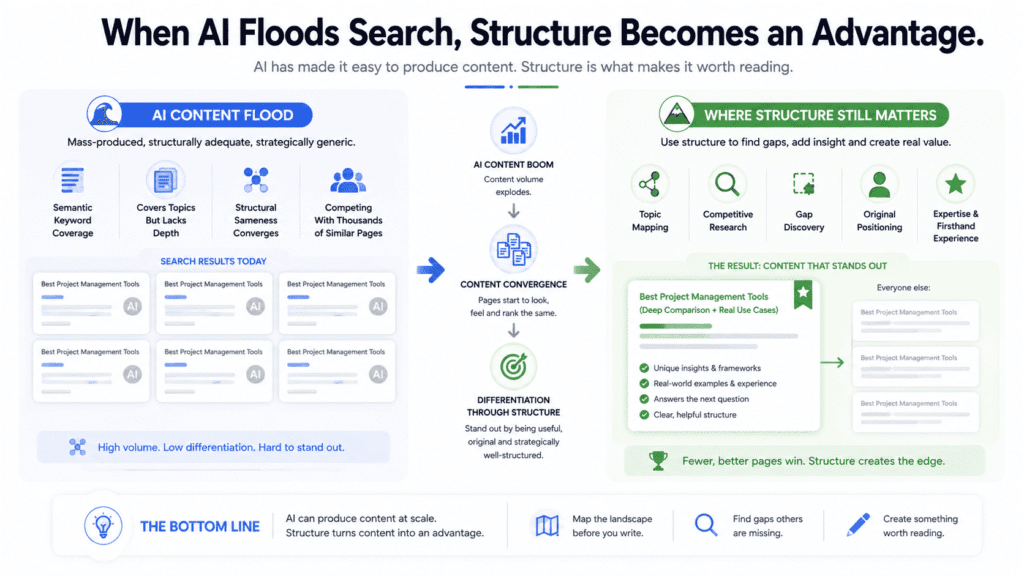

There’s an argument going around SEO circles that AI-generated content has made optimization tools redundant. I think it’s almost exactly backwards.

What’s happened to search over the last two years isn’t that content got better and harder to compete with. It’s that the volume of structurally adequate content has exploded while the volume of genuinely useful content has stayed roughly flat. Anyone with API access and a halfway decent prompt can produce articles that cover the right topics, hit reasonable word counts, and read fluently enough that a casual visitor wouldn’t immediately clock them as machine-written. The floor for producing something has basically disappeared. The ceiling — content that actually helps someone, that has a point of view, that reflects real experience with a subject — hasn’t moved.

That gap is where search is getting fought over right now. And it’s a stranger, more complicated battleground than most SEO commentary acknowledges.

The thing about AI-generated content that gets underappreciated is that its failure mode isn’t usually keywords or topic coverage — it’s structure in the deeper sense. It covers a subject the way someone would if they’d read a lot about it but never done it. It answers the question that was asked without anticipating the one that comes next. It organizes information in whatever order feels default rather than whatever order would actually serve the person reading. A Surfer SEO review that was entirely AI-generated would probably hit every semantic term and still somehow miss the point of what someone researching the tool actually needs to know.

This is where Surfer’s case for still existing in 2026 becomes more interesting than the obvious one. The tool’s value isn’t that it helps you add missing keywords — it’s that it forces a more disciplined reckoning with what a topic actually requires before you start writing. Used before drafting rather than during or after, it changes character completely. You’re not chasing a score. You’re auditing the competitive landscape — what subtopics keep appearing, what questions everyone seems to feel obligated to answer, what the structural consensus around a topic looks like. That’s useful raw material for someone with genuine things to say. It’s less useful as a replacement for having genuine things to say.

I’ve tested this both ways, and the difference is real. Writers who open Surfer after finishing a draft and spend an hour chasing the score produce content that gets noticeably more generic. Writers who spend twenty minutes with Surfer before writing a single sentence, just mapping the territory and identifying gaps, tend to produce something more focused and harder to replicate with a generic prompt. Same tool, completely different outcome, entirely down to when in the process it enters.

Now, there’s something that should make anyone using Surfer at scale slightly uncomfortable. If every writer on a given topic is using the same tool, drawing from the same pool of currently-ranking content, identifying the same gaps and covering the same angles — you don’t get better content. You get faster content convergence. The structural sameness that already makes a lot of search results feel interchangeable gets more pronounced, not less. Surfer doesn’t cause this problem, but it can accelerate it when people use it as a content strategy rather than a content check.

The writers I’ve seen get genuine value from it treat the output as a map of what already exists — which is useful precisely because it shows you where the gaps are, where every other piece on the topic is saying the same thing in slightly different words, where there might actually be space to say something that doesn’t already exist in ten other articles. That’s a different job than optimization. It’s closer to competitive research. And it’s probably the more honest description of what Surfer is actually good for in a search environment that has more structurally adequate content than it knows what to do with.

Where Surfer Can Lead Any Writer Off Track — Not Just Beginners

Something counterintuitive has happened to search over the last two years, and I don’t think it’s been fully absorbed yet: the easier it became to produce content, the more valuable genuinely structured thinking became. Not optimized content. Not keyword-dense content. Thinking that’s actually organized — that anticipates what someone needs to know next, that covers a topic in an order that makes sense rather than an order that’s just conventional.

Because here’s what AI-generated content actually looks like in bulk, once you’ve read enough of it: it’s competent. Eerily competent in some ways. It covers the right topics, uses the right terms, formats itself correctly. What it almost never does is decide anything. It doesn’t choose what to leave out. It doesn’t make a structural bet about what the reader needs to understand first. It produces the expected shape of an article without making any of the judgments that give an article its shape. And search is now absolutely full of this — pages that look complete and land empty.

This is the environment Surfer is operating in. And weirdly, it’s a better environment for a structural tool than the pre-AI one was.

When I first started using Surfer, the main use case felt obvious: figure out what the top-ranking pages have, make sure yours has it too. That logic made sense when the competition was other humans who might have genuinely missed something. It makes less sense now, when the competition is often AI content that has mechanically covered every angle without understanding any of them. Matching the structural patterns of that content isn’t a strategy. It’s a way of joining the pile.

What changed in how I use it — and what I’d suggest anyone reconsidering Surfer in 2026 try — is treating it as a pre-writing tool rather than a drafting aid. Not “how do I score higher on this draft” but “what does the current landscape around this topic actually look like before I decide what to say.” That reframe turns Surfer from an optimization tool into something closer to structured competitive research. You’re not asking it what to write. You’re asking it what everyone else has already written, so you can figure out what’s actually missing.

Run any reasonably competitive keyword through Surfer and spend ten minutes just looking at what comes back — not to copy the patterns, but to notice where they’re identical across every result. That sameness is a signal. It tells you what the default version of this content looks like, which is exactly what you want to avoid producing. The gaps in Surfer’s content map are often more useful than the recommendations.

There’s an irony buried in any honest Surfer SEO review of this era: the tool that was built to help content match what’s ranking has become, in the right hands, a tool for identifying what’s worth departing from. That’s not how it’s marketed. It’s not really how the interface is designed. But it’s the most defensible reason to keep paying for it in a search landscape where matching the consensus is the least interesting thing you can do.

I Used to Think Surfer Was About Rankings. Now I See It as a Content Thinking Tool — And That’s a Better Reason to Pay for It

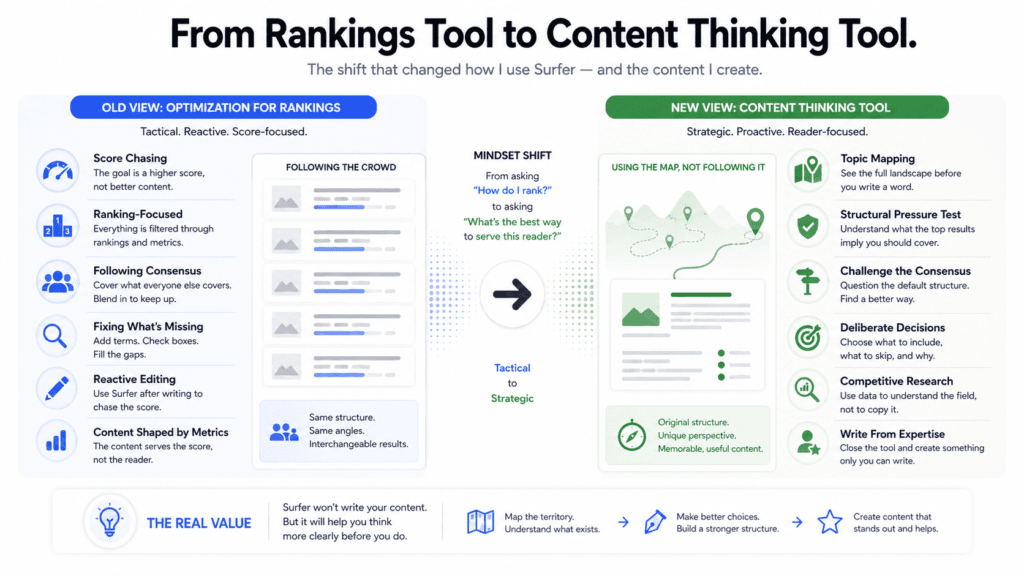

For the first couple of years I used Surfer, I was using it wrong. Not wrong in the sense of misreading the interface — wrong in the sense of asking it the wrong question. I wanted it to tell me why something wasn’t ranking and what to change. It can kind of do that. But that’s not actually what it’s good at.

The moment this shifted for me wasn’t dramatic. I was working on a piece about a topic I knew reasonably well, had a rough structure in my head, and pulled up Surfer mostly out of habit. And what I noticed — looking at the suggested headings, the subtopics, the terms coming up repeatedly across the top results — wasn’t “here’s what I’m missing.” It was “here’s how everyone else has decided to organize this, and I think they’re wrong.” That disagreement was more useful than any optimization suggestion the tool has ever given me. It forced me to articulate why my structure was better, which made the structure actually better.

That’s a strange thing to discover about a tool — that arguing with it is more valuable than following it.

Since then I’ve started thinking about Surfer less as an optimization layer and more as a structural pressure test. Not “what score does this draft get” but “what has everyone else decided this topic requires, and do I agree.” Sometimes the consensus is right and I’ve missed something obvious. More often, the consensus reveals a kind of default thinking about a topic that’s worth consciously departing from — the conventional order of ideas, the obligatory sections that show up in every article without anyone questioning whether they need to be there.

Now, I want to be straight about something, because I’ve read enough Surfer SEO review content to know this is where the argument can quietly go soft. Saying “use Surfer as a thinking tool” is a convenient reframe if the original promise of better rankings isn’t being reliably delivered. And I’m not going to pretend the reframe is entirely separate from that. Ranking gains from Surfer are real but inconsistent. Competitive niches with strong domains are hard to move regardless of your optimization score. Less competitive niches often move without much optimization at all. The middle ground where Surfer’s influence is cleanest is narrower than the marketing implies.

What I keep coming back to is a simpler question: what would my content process look like without it? For a period last year I stopped using it deliberately, just to check. What I found was that my drafts got less disciplined: not worse in terms of ideas, but sloppier structurally. I’d miss subtopics I would have caught. I’d underweight sections that readers expected. The content was more distinctively mine and slightly less complete. Whether that trade-off is worth $90 a month depends entirely on where you sit on that spectrum.

The writers I’ve watched get the most out of Surfer are almost never the ones optimizing toward the score. They’re the ones using it before they write anything — spending twenty minutes mapping a topic, then closing the tool and writing from their own knowledge. The score barely enters into it. What they’ve taken from Surfer is a clearer picture of the territory, which lets them make more deliberate decisions about what to include, what to skip, and where they actually have something different to say.

That’s a content thinking tool. It’s just not what Surfer calls itself. And honestly, if they marketed it that way, I think fewer people would buy it — which probably explains why they don’t.

The Bigger Threat to Surfer May Not Be AI Search — It May Be Smarter SEO Tools

Every conversation about Surfer’s future eventually arrives at the same destination: AI search is going to eat organic traffic, AI Overviews (formerly SGE) will absorb informational queries, zero-click results keep expanding, so does any of this matter anymore? I’ve had this conversation a lot. And I think it’s the wrong conversation to be having about Surfer specifically.

AI search is real and it’s eating certain query types — but unevenly, slowly, and in ways that keep confounding the more confident predictions. Informational queries are getting absorbed faster than commercial ones. Anything requiring recent information, local knowledge, or genuine first-hand experience is holding up better than expected. The death of SEO has been announced so many times now that the announcements have become their own content category, complete with their own optimization strategies. Surfer probably has more runway from AI disruption than the current discourse implies.

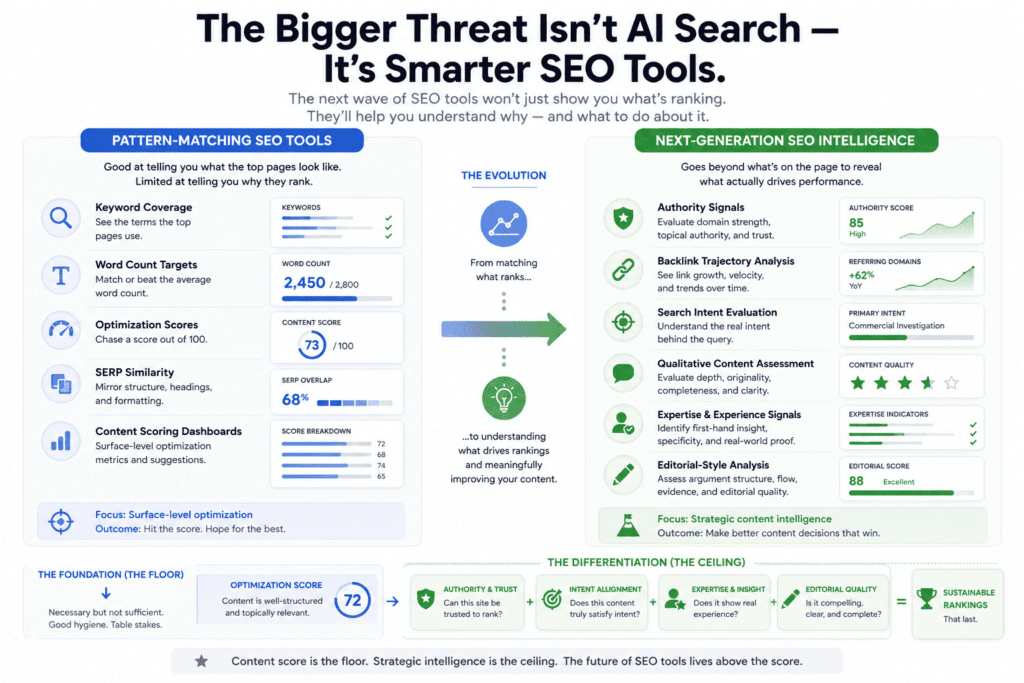

What it has less runway from is its own tool category getting smarter around it.

Here’s the problem I keep coming back to, and that I haven’t seen Surfer meaningfully address in any recent update: the tool tells you what the top-ranking content looks like, with no visibility into why it’s actually ranking. It sees semantic terms, word counts, heading structures. It doesn’t see that position one has 6,000 referring domains and would rank if the article were a list of bullet points and a stock photo. It doesn’t see that position three has been sitting there since 2019, accumulating clicks and dwell time signals that a new piece couldn’t replicate in two years of solid optimization. The competitive picture Surfer produces is accurate in the narrowest sense — here’s what these pages contain — and quietly misleading in every sense that actually matters for strategy.

Other tools are starting to close that gap. Not completely, not cleanly, but the direction is clear. Platforms layering content analysis on top of authority signals, backlink trajectory data, and traffic trend information are giving writers a fundamentally more honest picture of what they’re actually up against. Knowing that the top result is only beatable because its referring domain count peaked in 2020 and the site has been slowly declining is a different kind of insight than “your average word count is 380 below the top ten.” One changes what you decide to write. The other changes how long the article is.

The more interesting competitive pressure is on the quality evaluation side, and this one doesn’t get talked about much in any Surfer SEO review I’ve read. The tools making moves here aren’t the ones with better AI writing assistants — that feature is everywhere now and almost entirely undifferentiated. The interesting development is tools trying to evaluate content on dimensions Surfer doesn’t touch at all: whether the piece answers the specific question someone at this stage of search intent would actually ask, whether the argument structure holds together, whether the level of specificity signals someone who has actually done the thing versus someone who has read about it. None of them have solved this. But they’re pointed in a direction that makes pattern-matching against the current top ten look increasingly like a previous generation’s approach.

Surfer’s constraint here is partly technical and partly a product identity question. Measuring semantic coverage is tractable. Evaluating whether content reflects genuine expertise is not — at least not in ways that current tooling handles reliably. So some of what Surfer hasn’t built isn’t a strategic oversight, it’s a hard problem that nobody has cracked cleanly yet.

But hard problems get solved, and well-funded competitors are working on this specifically. The tool that gets qualitative content evaluation right — even partially right — doesn’t make Surfer useless. It makes Surfer’s core loop feel like a narrow, earlier version of what SEO tooling can do. The content score becomes a floor, not a ceiling. A hygiene check rather than a strategy.

That’s what I’d be watching. Not ChatGPT eating search queries. Not AI Overviews expanding. The SEO platform that learns to evaluate content the way a sharp editor would, fast enough to be useful in an actual workflow, is the one that makes chasing a score out of 100 feel like something people used to do.

What Surfer SEO Actually Costs — And Where the Pricing Gets Complicated

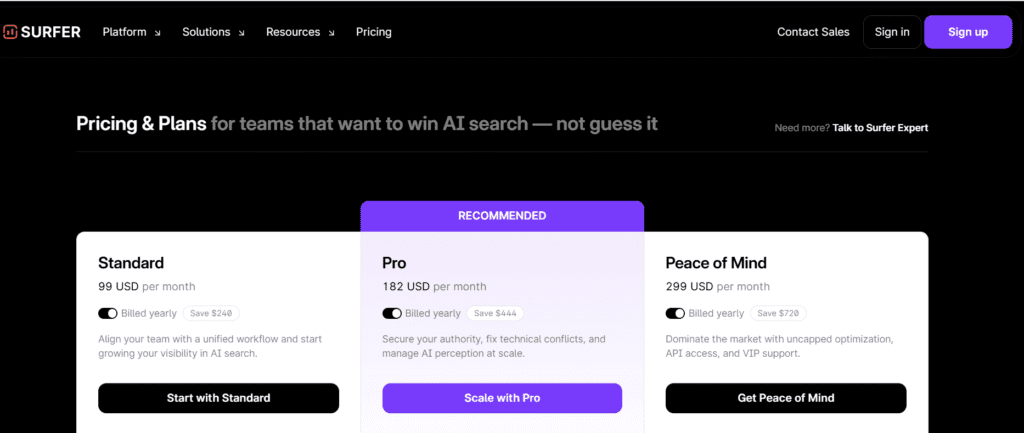

Surfer’s pricing has moved around enough that I’d always recommend checking their current page before budgeting — but the structure of how they price things has stayed consistent, and that structure is worth understanding before you see the numbers.

The entry plan gets you the content editor, the optimization score, and a capped number of articles per month. It’s functional. What it isn’t is the version of Surfer that people are usually talking about when they say Surfer is useful. The features that show up in recommendations — the site audit, Topical Maps, meaningful article volume — live on the mid and upper tiers. The gap between what the entry plan offers and what makes the tool genuinely useful at scale is wider than the pricing page makes it look at first glance.

The AI writing layer — Surfer AI — is either bundled or an add-on depending on which plan you’re looking at. Read this carefully before assuming it’s included. It’s the kind of thing that can add a meaningful chunk to a monthly bill you thought you’d already calculated.

Here’s the math that actually matters: at low publishing volumes, the cost per article is hard to justify. If you’re writing three pieces a month, you’re paying a significant per-article premium for structure and coverage checking you could approximate with a good brief template. At ten or more pieces a month — especially across multiple sites or with multiple writers — the per-article cost drops, the organizational features start earning their keep, and the ROI argument becomes coherent.
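A quick sketch of that per-article arithmetic follows. It assumes the $90 a month figure mentioned earlier in this review as the price, which may not match Surfer’s current plans, and the function name and the sample volumes are hypothetical.

```python
def cost_per_article(monthly_price, articles_per_month):
    """Return the effective tool cost of one article at a given publishing pace."""
    if articles_per_month <= 0:
        raise ValueError("articles_per_month must be positive")
    return monthly_price / articles_per_month


# Assumed price: the $90/month figure used earlier in this review.
# Check the current pricing page before budgeting.
MONTHLY_PRICE = 90.0

for volume in (3, 10, 25):
    per_article = cost_per_article(MONTHLY_PRICE, volume)
    print(f"{volume:>2} articles/month -> ${per_article:.2f} per article")
```

At that assumed price, three pieces a month works out to $30 of tooling per article, while ten pieces brings it down to $9. Add-ons or a higher tier would shift those numbers, so treat the output as a way to frame the decision rather than a quote.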

Surfer does offer a free trial. Use it on something real, not a throwaway topic. The only honest way to evaluate whether the workflow fits you is to run it against content where the outcome actually matters to you.

Surfer SEO Alternatives: How It Compares to Clearscope, Frase, and MarketMuse

I want to be careful here because tool comparisons are where SEO content goes to become useless fastest. Every comparison ends up saying the same things in the same order. So instead of a feature matrix, here’s what actually differentiates these tools in practice — which is a different question.

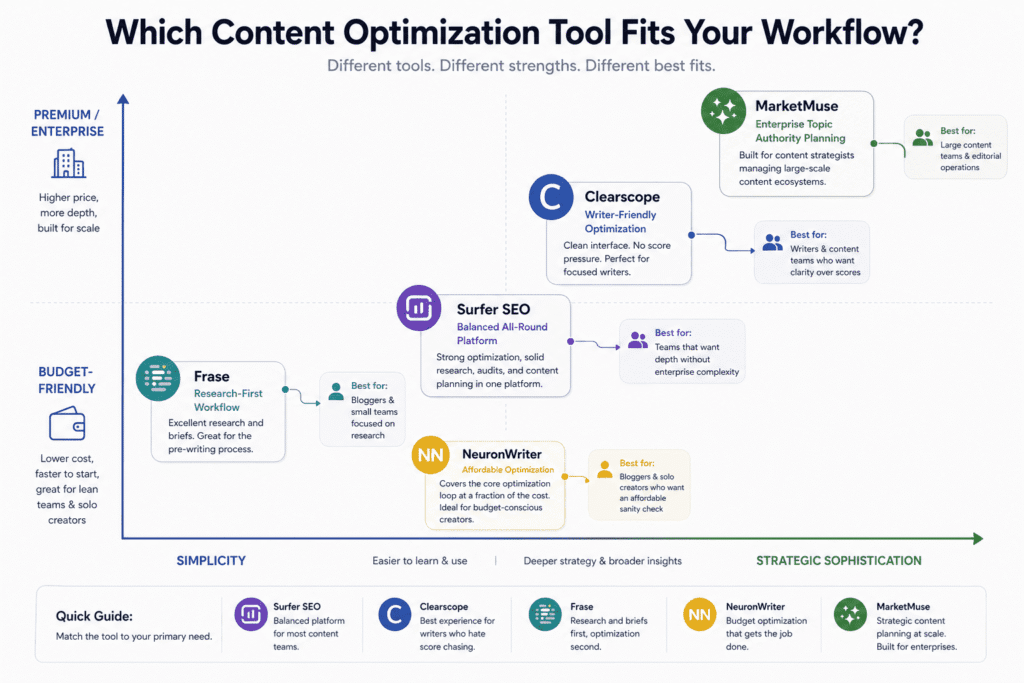

Clearscope is what a lot of writers move to when they find Surfer’s scoring interface too difficult to resist chasing. The term suggestions are cleaner, the UX doesn’t put a live number in your peripheral vision while you’re trying to think, and the overall experience feels less like being graded and more like being informed. It costs more at the entry level and does significantly less — no topical mapping, no audit, no AI writing. If your complaint about Surfer is that the score ruins your drafting process, Clearscope solves that specific problem. If your complaint is that you need more strategic planning tools, it doesn’t.

MarketMuse is a different category of tool wearing similar clothes. It’s thinking about your content at a site and topic authority level rather than a page level, and its briefs reflect that — more detailed, more strategic, more opinionated about what a piece needs to accomplish within a broader content ecosystem. It’s also meaningfully more expensive and takes longer to learn. I’ve seen content strategists managing large editorial operations swear by it. I’ve also seen solo bloggers buy it, feel overwhelmed within a week, and quietly go back to something simpler. It’s the right tool for a specific kind of operation and the wrong tool for almost everyone else.

Frase is the one I’d tell someone to look at seriously if they’re price-sensitive and primarily need help with the research and brief-building stage rather than the optimization stage. Its pre-writing research workflow is genuinely good — better than Surfer’s in some respects at that specific moment in the process. The optimization analysis is thinner and the NLP suggestions less reliable. Think of it as a research-first tool that happens to have optimization features, rather than an optimization tool that happens to have research features.

NeuronWriter keeps coming up in conversations about Surfer because it covers the core optimization loop — content scoring, term suggestions, competitive analysis — at a fraction of the price. The interface is rougher, the feature set narrower, and some of the data less reliable. But for a blogger who primarily wants a sanity check on coverage and doesn’t need the platform features, it’s harder to dismiss than Surfer’s marketing would prefer. If you’re considering Surfer at the entry tier, trial NeuronWriter first. You might find it does 80% of what you actually need at 30% of the cost.

The honest summary of where Surfer sits: above Frase and NeuronWriter on depth and reliability, roughly comparable to Clearscope with a broader feature set but a more gamified interface, below MarketMuse on strategic sophistication. None of these tools is straightforwardly the best. They’re each the best tool for a specific workflow at a specific scale, and matching the tool to your actual situation matters more than picking the one with the best reviews.

If you’re evaluating the broader SEO platform landscape beyond content tools, our Semrush review covers the full suite in depth.

Questions People Actually Ask About Surfer — Answered Honestly

Is Surfer SEO worth it in 2026?

For some people, yes. For more people than the reviews suggest, no. The tool works — the question is whether it’s working on the right problem for your situation. If your site is young, your publishing volume is low, or your niche isn’t particularly competitive, you’re probably paying for optimization when what you need is distribution, authority-building, or just more content. If you’re publishing at volume in a competitive niche, it’s much easier to make the case.

Does Surfer actually move rankings?

This is the question every Surfer SEO review should answer more carefully than most do. Sometimes yes, but the effect is almost impossible to isolate. Rankings respond to dozens of variables Surfer doesn’t touch, including domain age, backlink profile, click-through rate, content freshness, and query intent drift. The more honest claim is that Surfer helps you produce more structurally complete content, and structurally complete content tends to perform better over time. Whether Surfer specifically caused the ranking or whether you would have gotten there with a good brief and a careful editor is a question nobody can answer cleanly.

Surfer vs Clearscope — which one?

If the score-chasing dynamic bothers you and you don’t need topical mapping or site audits, Clearscope. If you want a broader platform and can resist the live scoring interface, Surfer. The content quality of what you produce with either tool will depend far more on what you bring to it than on which tool you chose.

Is there a free version?

There’s a free trial, no permanent free tier. Run the trial on a real project in a niche you actually care about. A trial on a throwaway topic tells you almost nothing about whether the tool fits your workflow.

What doesn’t Surfer do?

Quite a lot, and this matters: no keyword research worth relying on, no technical SEO auditing, no backlink data, no rank tracking. If you come to Surfer expecting an all-in-one SEO platform, you will be disappointed. It is a content structure and coverage tool. That’s the whole job. Everything else is thin or absent. For a platform that covers those features, see our Semrush review.

Is it good for beginners?

The interface is approachable enough, but beginners are the most vulnerable to the specific failure mode Surfer creates — optimizing toward the score rather than toward the reader. The score is a concrete, gamified target in a discipline that’s otherwise frustratingly abstract, and beginners will chase it harder than experienced writers do. If you’re new to content and you use Surfer, make yourself a rule: don’t open the tool until the first draft is finished. Score it after. Edit for coverage after. Don’t let it into the room while you’re still working out what you’re trying to say.

Who Should Actually Pay for Surfer — And Who’s Better Off Without It

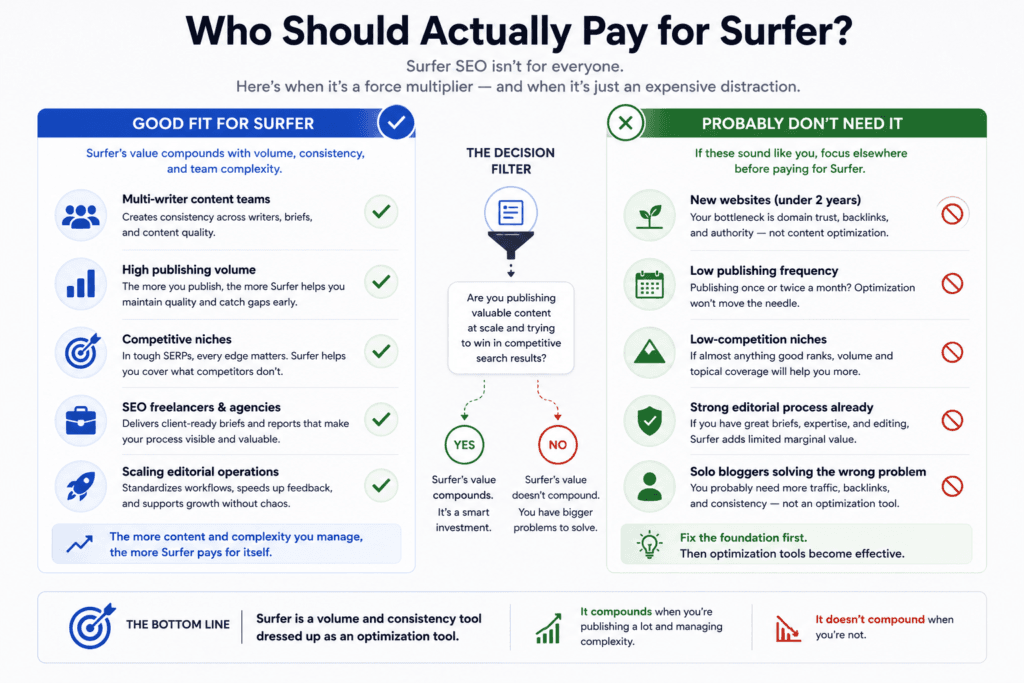

The “who is this for” section in most Surfer SEO reviews is written to include as many people as possible. This one is written to exclude people, because that’s more useful.

If you’re running a content operation at real volume — multiple writers, regular publishing cadence, competitive niches where content quality is actually the differentiating variable — Surfer earns its cost. Not because the score is magic, but because the process it imposes creates consistency that’s hard to achieve when multiple people are writing from their own instincts. Briefs become more structured. Coverage gaps get caught before publishing rather than after. The score, irritating as it is when you’re chasing it, gives editors and writers a shared reference point that speeds up feedback cycles.

Freelance writers working with SEO-aware clients get a secondary benefit worth naming: Surfer produces deliverables clients can see and understand. A content brief with term suggestions and structural recommendations looks like a process, and clients pay for process as much as output. Whether the score actually predicts ranking is almost beside the point in that context.

Now for the people who should close this tab and spend their money elsewhere.

If your site is under two years old and you’re publishing once or twice a month, your constraint is not content optimization. It’s domain trust, it’s backlink profile, it’s the basic question of whether Google has decided your site is worth paying attention to yet. Surfer cannot fix any of those things. A $100+ monthly subscription solving the wrong problem is a particularly frustrating kind of waste.

If you already have a strong editorial process — detailed briefs, genuine subject matter expertise, a good editor who pushes back on weak structure — the marginal value Surfer adds is real but probably not $100 a month worth of real. The tool fills gaps. If the gaps aren’t that large, the tool isn’t that valuable.

And if you’re in a niche where the competition is thin enough that almost anything reasonably good ranks — stop optimizing and start publishing more. Volume and topical coverage will do more for you than optimization scores in a low-competition environment.

The one-sentence version, and I mean this without the softening most reviews add: Surfer is a volume and consistency tool dressed up as an optimization tool. Its value compounds when you’re publishing a lot and managing complexity. It doesn’t compound when you’re not.

If you’re at the young-site stage described above, building a backlink profile and understanding domain authority are the skills that actually move the needle. Our guide to Ahrefs for beginners is a better place to start than a content optimization tool.

My Honest Take: Surfer Is Still Useful, But Not for the Reason Most Reviews Claim

I’ll say the quiet part out loud: most Surfer SEO reviews are written by affiliates, optimists, or people who haven’t used the tool long enough to watch it disappoint them. I’ve been all three at different points. What follows is where I’ve actually landed after enough time with it to have changed my mind twice.

Surfer works. I want to be clear about that before anything else, because the nuanced take can easily read as a dismissal and it isn’t one. It works in the specific, limited way that any disciplined pre-writing process works — it makes you think harder about structure and coverage before you’ve written yourself into a corner. The writers I’ve seen get consistent value from it are the ones who use it before a draft exists, not the ones chasing a score on something already written. That distinction matters more than any feature comparison.

But here’s what the reviews don’t say: the gap between “Surfer helped me think through this piece” and “Surfer helped me rank” is enormous, and almost nobody is measuring it honestly. Rankings have too many variables for a content optimization tool to claim reliable credit. Domain age, backlink profile, how recently Google crawled the page, whether the site has had a manual action in its history, whether the query intent shifted three months ago and nobody noticed — all of it sits upstream of whether your semantic coverage score was 78 or 84. When a piece ranks after being run through Surfer, Surfer gets the credit. When it doesn’t, the explanation is usually “we need more backlinks” or “the niche is competitive.” The tool is rarely examined.

I ran a quiet experiment last year — stopped using Surfer for a full quarter on one site, kept using it on another, tried to hold everything else roughly constant. The results were inconclusive in the way that honest SEO experiments usually are, which itself felt like data. What I did notice was that the site without Surfer produced messier content — not worse ideas, but less considered structure, more gaps in coverage, sections that could have used more development. Whether that affected rankings I genuinely can’t say. Whether it affected the quality of what I was publishing I’m more confident about.

That’s actually where I think Surfer’s real value proposition lives in 2026, and it’s a less exciting pitch than the one on the pricing page. It’s a content quality tool that got marketed as a rankings tool, and the mismatch has created a user base with the wrong expectations. People buy it to rank, get inconsistent ranking results, either churn or convince themselves it’s working, and miss the thing it’s actually decent at — which is making content more complete and structurally considered than it would be if you were just writing from instinct.

Whether that’s worth the monthly cost is genuinely a function of your situation. If you’re writing at volume, managing other writers, or working in a niche competitive enough that content quality is actually the differentiating variable, then yes, probably. If you’re a solo writer posting occasionally on a young site with no backlink profile, Surfer is solving a problem that isn’t your most urgent one. Your most urgent problem is that Google doesn’t trust your domain yet, and no optimization score fixes that.

The thing I keep coming back to — the thing that any genuinely useful Surfer SEO review should probably leave you with — is that the tool reflects a particular theory of what makes content rank. That theory was more accurate in 2019 than it is now, and the trajectory isn’t moving in Surfer’s favor. Content that ranks in 2026 increasingly does so because of things Surfer can’t see: trust, experience, genuine usefulness to a specific reader with a specific problem. Surfer can help you cover the ground. It cannot help you have something worth saying when you get there.

Most of the content I’m proudest of — the pieces that have driven real traffic, real links, real return readers — scored somewhere between mediocre and average in Surfer. Make of that what you will.

If your comparison is less about content tools and more about full SEO platforms, our Semrush vs Ahrefs breakdown covers that decision in detail.

Disclaimer: This article may contain affiliate links. If you make a purchase through these links, I may earn a commission at no extra cost to you. All opinions are based on hands-on testing and independent research.