Starting a new website and trying to figure out keyword research is one of those experiences that feels straightforward until you’re actually doing it. The guides make it sound logical. The tools make it look organised. And then you spend three hours building a keyword list, write your first few articles around it, and watch them sit on page five while websites you’ve never heard of sit comfortably above you.

The problem isn’t the effort. It’s that most advice on keyword research for new websites was written by people whose sites already have years of authority behind them. They’re not lying — the advice works, for them. But a site with ten years of backlinks and a site with ten days of existence are not playing the same game, and treating them as if they are is where most beginners quietly go wrong.

The reason this matters is stark. According to Ahrefs’ own research, 96.55% of pages get no traffic from Google, and the pages making up that majority mostly aren’t failing because of bad writing. A large share of them fail because of what they chose to target in the first place.

This guide is written specifically for the starting-from-zero situation. Not the theoretical version of it — the real one, where your domain authority is negligible, your backlink profile is essentially nonexistent, and the standard playbook keeps producing content that ranks nowhere useful. What follows isn’t about finding the most popular keywords in your niche. It’s about finding the ones you can actually do something with right now, with the site you have today — and building from there in a way that compounds over time rather than just accumulating pages.


Nobody Told You the Game Was Rigged From the Start

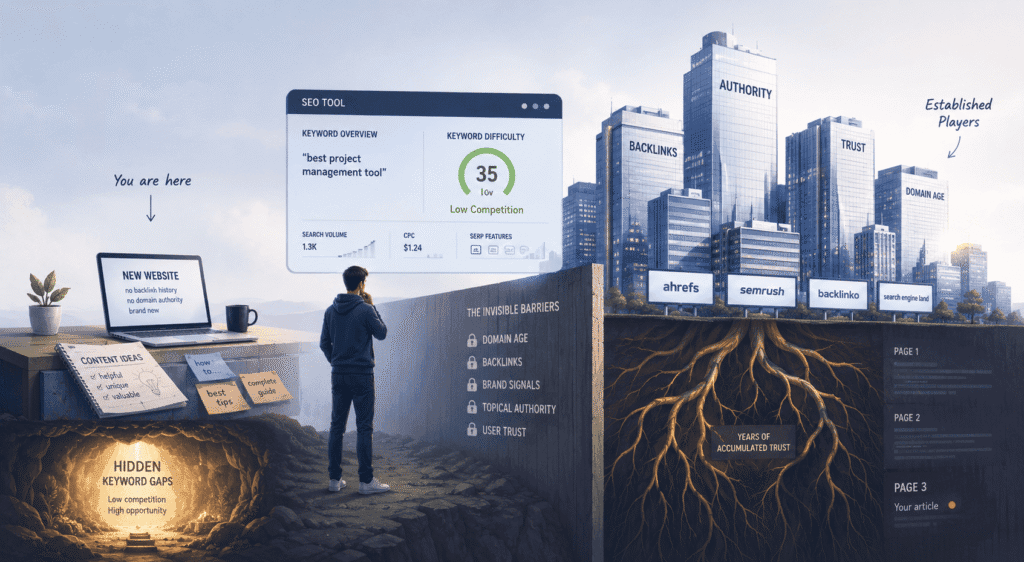

Most guides on keyword research for new websites skip a step. A pretty important one, actually — they forget to mention that the advice they’re about to give you was written for websites that already have something yours doesn’t: history.

Not expertise. Not better content. Just time. And the authority that quietly accumulates with it.

The guides come from Ahrefs, Semrush, Backlinko — sites that have been earning backlinks and building trust signals since before some of their readers were in high school. When they label a keyword “low competition,” they mean low competition for them. A difficulty score of 35 is a gentle warm-up when you have thousands of referring domains behind you. When you have none, that same score is a wall.

What makes this frustrating is that the tools don’t tell you this. You run a search, see a green difficulty indicator, write a genuinely good piece of content, and then watch it park itself on page three while a four-year-old article with half the depth sits comfortably above you. So you assume you did something wrong with the content. You didn’t. You picked the wrong keyword for your current situation — which is a completely different problem, and one that nobody flagged.

Here’s what’s actually happening under the hood. Google doesn’t evaluate your article in isolation. It evaluates your article in the context of your entire site — how long it’s been around, what other sites say about it, whether there’s any accumulated reason to trust it. A new domain is, from Google’s perspective, an unknown quantity. And unknown quantities don’t get the benefit of the doubt when there are established, trusted sources already covering the same ground.

This isn’t a flaw in the system, really. It’s just how trust works. You’d do the same thing.

The shift that changes everything — and what the rest of this guide is built around — is learning to stop thinking about keywords as search terms and start thinking about them as competitive environments. Some of those environments are effectively closed to new sites. Others are wide open, either because the big players never noticed them or because they’re simply not worth a site with a million monthly visitors bothering to cover.

Those gaps are real. They’re rankable. And for a new site, they’re not a consolation prize — they’re the actual strategy.

Forget Search Volume. Seriously, Just Forget It For Now.

There’s a number sitting at the top of every keyword tool, big and bright and apparently very important. It is the first thing new site owners look at, the main thing they optimise for, and — at this stage at least — probably the least useful signal they could be chasing.

There’s a logical endpoint to this argument that most people don’t follow all the way through: some of the most valuable keywords you’ll ever target show zero search volume in every tool you check. Not low volume. Zero. No recorded data. The tools have nothing.

This happens because keyword tools measure search volume by aggregating historical data — and some queries are either too specific, too new, or too conversational to register in that data at a meaningful threshold. But real people are still typing them. A search like “freelance copywriter for SaaS onboarding emails London” might show zero volume in Ubersuggest. It also might be exactly what a high-value client types at the exact moment they’re ready to hire someone. Zero volume doesn’t mean zero searches. It means the tool couldn’t measure it — which is a very different thing.

For a new site, this reframes the research process slightly. You’re not just looking for keywords with measurable volume that you can realistically rank for. You’re also listening for the specific, natural-language phrases your audience uses when they’re closest to needing what you offer — and those phrases often live below the threshold any tool can detect.

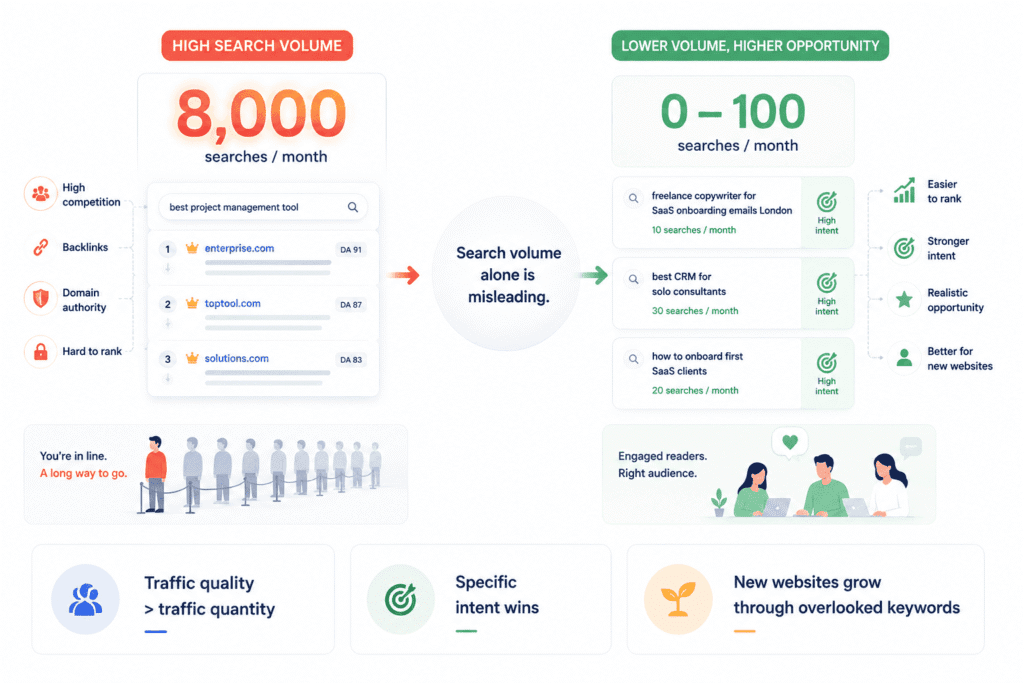

Search volume tells you how many people searched for something. That’s it. It tells you nothing about whether you can rank for it, nothing about whether the people searching are actually looking for what you offer, and nothing about whether the content already sitting at the top of those results is even beatable. It’s a popularity contest score with no context attached.

And here’s the part that took me a while to really internalise: high search volume and high competition aren’t two separate problems you weigh against each other. They’re the same problem. The reason a keyword has 8,000 monthly searches is largely because established sites spotted it years ago, built content around it, earned links to it, and have been compounding that advantage ever since. By the time you find it in a keyword tool looking exciting and green, the window closed a long time ago.

What you’re actually looking at when you see a high-volume keyword isn’t an opportunity. It’s a waiting list. And new sites don’t jump the queue — they wait, sometimes for a year or more, while Google slowly decides whether to trust them.

So what actually matters?

Honestly, the question worth asking isn’t “how many people search for this” — it’s “are the people already ranking for this actually answering it well?” Because that’s where the real gaps live. Not in volume nobody else has noticed, but in intent nobody else has served properly. There are keywords with 300 monthly searches where the top three results are generic, outdated, or clearly written for a slightly different audience. Those are the ones a new site can walk into and genuinely compete.

This is something that proper keyword research for new websites forces you to confront early: traffic quality matters more than traffic quantity, especially at the start. A hundred readers who found exactly what they needed (who stayed, read, maybe came back) do more for a new site than two thousand who landed on the wrong page and left in ten seconds. And there’s good reason to think Google increasingly notices the difference.

The volume obsession is understandable. The numbers are right there. They feel like a measure of potential. But potential you can’t access yet isn’t really potential — it’s just noise. The sites consistently ranking for high-volume terms aren’t doing it through better writing. They’re doing it through accumulated trust that took years to build. You can get there. Just not by targeting their keywords on day one.

Start smaller. Start specific. Find the questions where the current answers are genuinely lacking and you can do something about that right now, with the site you have today. The bigger keywords will still be there when you’ve earned the right to go after them.

Search Intent Isn’t a Theory — It’s the Reason Your Content Gets Ignored

Let me describe something that happens to almost every new site owner at least once. You find a keyword that feels right — relevant to your niche, reasonable difficulty score, decent enough search volume. You write the article carefully. You optimise it. You publish it. And then nothing happens. No rankings, barely any impressions, total silence. So you assume it’s the domain age, or the backlinks, or some technical issue you haven’t figured out yet.

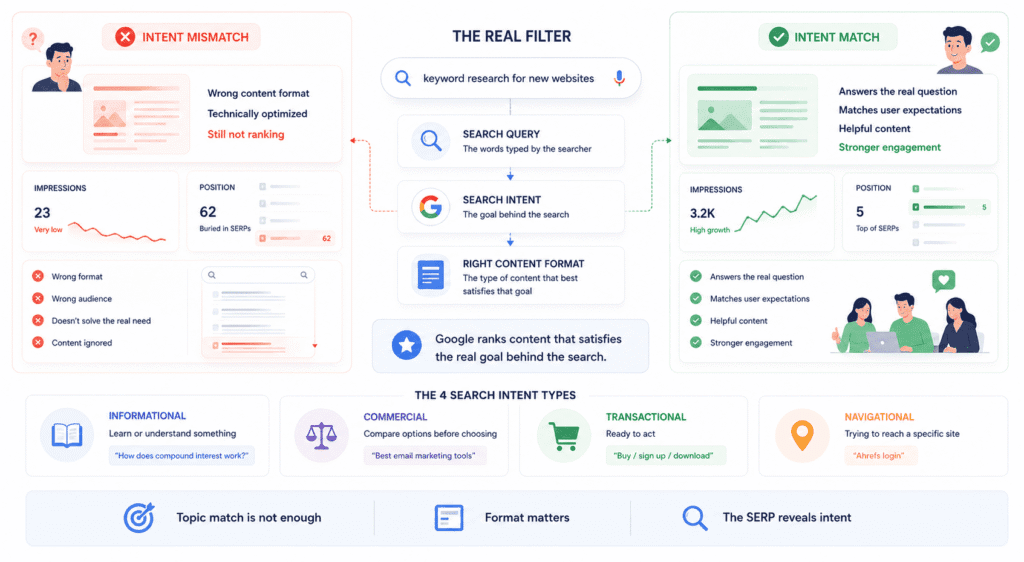

Sometimes it’s none of those things. Sometimes you just wrote the wrong type of content for what that keyword was actually asking for.

That’s search intent in practice. Not the theory — the frustrating, invisible reality of it.

Google has spent years building a model of what different searchers expect when they type different things. And that model isn’t just about topic — it’s about format, depth, content type, even the assumed level of knowledge of the person searching. Google’s helpful content guidelines make this explicit — the question isn’t whether content is well-optimised, it’s whether it genuinely satisfies what the person searching was actually trying to accomplish. That gap — between technically relevant content and content that resolves the real intent — is where most new site content quietly fails.

The Four Intent Types, Explained Without the Jargon

Most guides present this as a clean taxonomy and move on. Four types, four boxes, sorted. The problem is that framing makes it feel more academic than useful, so here’s the version that actually helps when you’re sitting in front of a keyword trying to decide what to write.

Some searches are just people trying to understand something. “How does compound interest work.” “Why won’t my sourdough rise.” These people want an explanation — they’re not shopping, they’re not comparing options, they just want to understand. Writing a product page or a “top 10 tools” listicle for a keyword like this is a category error. You’re selling to someone who came in to learn.

Then there are searches where someone is already close to a decision, usually called commercial intent. This is the intent type that new site owners most consistently miss. “Best email marketing tools for bloggers.” “ConvertKit vs Mailchimp.” “Ahrefs review 2026.” The person searching already knows what the thing is, so they don’t need it explained. They want comparison, opinion, honest assessment. An introductory explainer here lands completely wrong, even if the keyword looks like a natural fit for your beginner-focused content.

Transactional intent is the clearest — someone ready to do something. Buy, download, sign up, book. If your page isn’t set up to facilitate that action, ranking for it barely helps you.

And navigational searches — someone typing a brand name or trying to reach a specific site — aren’t really worth chasing unless you are that destination. Move on.

The part the textbook version glosses over: intent is messier in real life than it is in a framework. “Keyword research for new websites” could be pure information-seeking or it could be someone evaluating tools and approaches before they commit to a strategy. The same keyword means different things depending on where the searcher is in their journey. When you’re unsure, the SERP tells you more than any category label ever could.

How to Spot Intent Mismatches Before You Write a Single Word

Here’s the actual habit worth building — and it takes about four minutes, which makes it embarrassing how often people skip it.

Before writing anything, search the keyword in an incognito window and just look at what Google is serving. Not the domain names. Not the difficulty scores. The content itself — what format is it, how long does it appear to be, is it written for someone who knows nothing or someone who knows quite a bit already.

What you’re reading is Google’s current best guess at what this keyword demands. That mix of results is the most honest signal you’ll find anywhere — more honest than a tool score, more honest than your instinct about what the searcher wants. If the top five results are all comparison articles and you’re planning a beginner’s guide, you’re not filling a gap. You’re bringing the wrong thing.

Consistency matters here too. When all five results are the same format, Google is confident. Deviating from that as a new site is a serious gamble — you’d need a compelling reason and frankly the trust to back it up, neither of which a new domain typically has. When the results are genuinely mixed, that’s where there might be room to own a specific angle rather than compete head-on.

One thing that gets overlooked in almost every conversation about keyword research for new websites is language level. Look at how the ranking content speaks. Is it defining basic terms or assuming the reader already understands them? That tells you where in their journey the typical searcher is, and it shapes everything from your opening sentence to how deep the content needs to go before it’s done its job.

Miss the intent and the writing doesn’t matter. Nail it and you’ve already solved the problem most new sites don’t even know they have.

The SERP Is Telling You Who You’re Actually Fighting

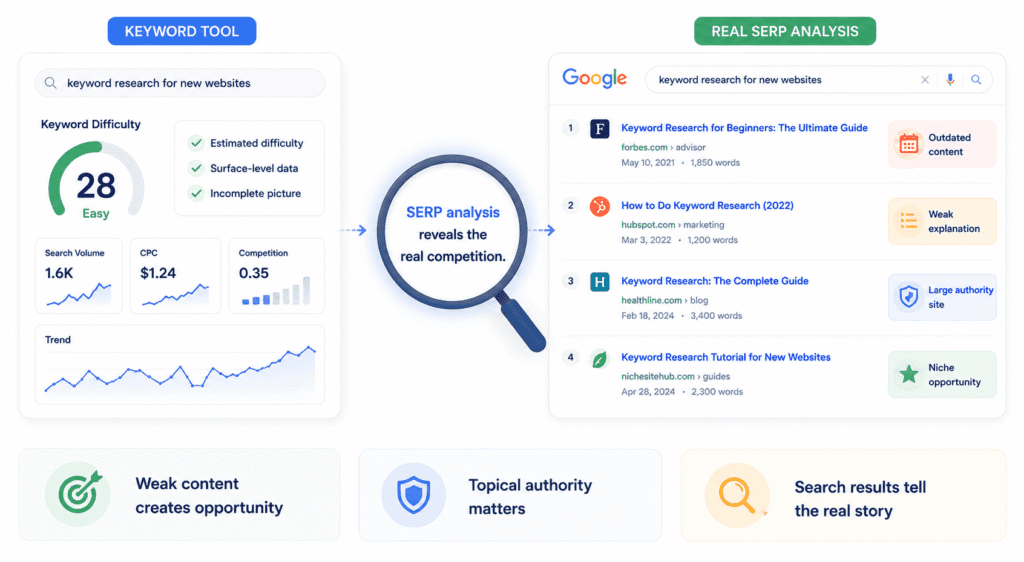

A keyword difficulty score is a number a tool calculated using signals you didn’t choose and weightings you can’t see. It’s a starting point. Treat it as anything more than that and it will mislead you — sometimes badly.

The actual answer to “can I rank for this” is sitting one search away.

Pull up the results page for a keyword you’re considering and just spend two minutes actually looking at it. Not scanning for brand names you recognise. Looking. Because what’s on that page is a live, unfiltered picture of who Google currently trusts to answer this question — and more importantly, why it trusts them. That context is worth more than any difficulty score, and almost nobody does this properly when they’re starting out.

The thing that tends to surprise people when they start doing this seriously is how often the content ranking on page one is genuinely mediocre. Not terrible — just thin. Surface-level. The kind of article that covers the obvious angles without going anywhere near the parts a real person actually wants to know. It ranks because it was early, or because the domain behind it has accumulated weight across thousands of other articles, or because nobody with actual expertise has bothered to write something better yet. When you find that — a keyword where the top results are coasting on authority rather than quality — that’s a real opening. Not a guaranteed win, but a genuine one.

What you’re also looking for is content age. If the top three results were last updated two or three years ago and the topic has genuinely moved on, that staleness matters. Google doesn’t keep outdated content at the top out of loyalty — it keeps it there until something better arrives. The question is whether you can be that something. If the content is recent, actively maintained, and clearly written by someone who knows the subject deeply, that’s a harder environment and you should go in with eyes open.

The type of site ranking matters too, and not in the way most people assume. A large publication — a Healthline, a Forbes, a major industry blog — ranking for a niche keyword is often doing so on domain authority alone. Their content is broad because their audience is broad. That’s actually beatable with genuine depth and specificity, because you can go further into the topic than they ever will. A dedicated niche site where this subject sits at the core of everything they publish is a different challenge entirely. They’ve built topical authority across dozens of interconnected articles, Google understands their focus clearly, and displacing them requires more than one good piece of content.

One check that almost never gets mentioned: how many of the top five positions are owned by the same domain? A diverse results page, with five different sites in five positions, suggests the SERP is relatively open. Two or three positions held by one site suggests Google has decided that domain owns this topic. That’s not impossible to compete with, but it’s a much steeper climb than it looks from the outside.
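If you’re checking a lot of keywords, the domain count is easy to tally by hand, but it can also be sketched in a few lines of Python. This is a minimal sketch that assumes you’ve copied the top-five result URLs yourself. The function name and example URLs are hypothetical. The code only strips a leading “www.” and doesn’t work out true registrable domains, so subdomains like blog.example.com count as separate sites.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_concentration(urls, top_n=5):
    """Return (most common domain, positions it holds, distinct domains) for the top results."""
    domains = []
    for url in urls[:top_n]:
        host = urlparse(url).netloc.lower()
        # Treat "www.example.com" and "example.com" as the same site.
        if host.startswith("www."):
            host = host[len("www."):]
        domains.append(host)
    if not domains:
        return None
    counts = Counter(domains)
    domain, held = counts.most_common(1)[0]
    return domain, held, len(counts)


# Hypothetical top-five URLs copied by hand from a results page.
top_five = [
    "https://www.example-niche.com/guide",
    "https://example-niche.com/checklist",
    "https://www.reddit.com/r/example/comments/abc",
    "https://example-niche.com/faq",
    "https://www.big-publisher.com/article",
]
print(domain_concentration(top_five))  # ('example-niche.com', 3, 3)
```

A result like this one, with a single domain holding three of the five positions, is the steeper climb. Five distinct domains would point to a more open page.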


The AI Overview Check Nobody Tells Beginners to Do

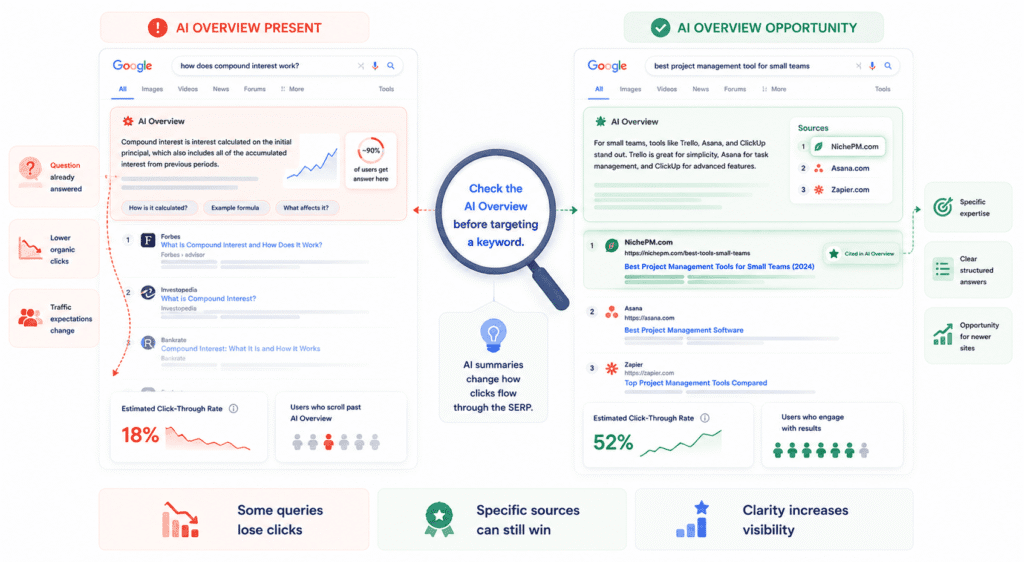

There’s a layer to SERP reading in 2026 that most guides on keyword research for new websites haven’t properly caught up to. Glossing over it leads to a specific kind of disappointment: you rank, you check Search Console, and the traffic is a fraction of what the search volume suggested.

Before committing to any informational keyword, search it and check whether an AI Overview appears. This is the expandable panel at the top of the page that summarises an answer before a single organic result is visible. If you’ve been doing keyword research for a while, you’ll have noticed these appearing more often. If you haven’t been paying attention to them, start now, because their presence significantly changes how much traffic a keyword can actually deliver.

The issue isn’t that AI Overviews exist. It’s that for certain types of queries, they answer the question well enough that a meaningful portion of searchers never scroll down. They get what they needed from the summary and leave. The organic results beneath it still technically rank. They just don’t get the traffic the search volume implied they would.

This doesn’t mean avoiding all keywords that trigger overviews. It means looking more carefully at what the overview actually does. Sometimes it’s thin — hedged answers pulled from sources that don’t quite fit, or a summary that covers the surface without touching what the searcher actually wants to know. Those keywords still drive clicks, because the overview leaves people wanting more. The ones to be cautious about are the queries where the AI summary is genuinely good. Complete, clearly sourced, directly useful. Ranking fourth or fifth beneath one of those is often a lot of effort for very little return.

There’s a flip side worth knowing about. The sources Google pulls into AI Overviews aren’t always the highest authority domains — they’re often the most specific, clearly structured, directly relevant ones. A newer site with genuinely expert content that answers a precise question in a citable way can end up referenced in an overview even without years of domain history behind it. It’s not the same as a traditional ranking, but for a new site trying to build visibility from scratch, appearing in an AI Overview is real exposure — and it’s built through the same things that make content rankable anyway: clarity, specificity, and actually knowing what you’re talking about.

Search the keyword. Read what’s there. Check for the overview. The page is having a conversation about what it takes to rank — most people just aren’t listening.

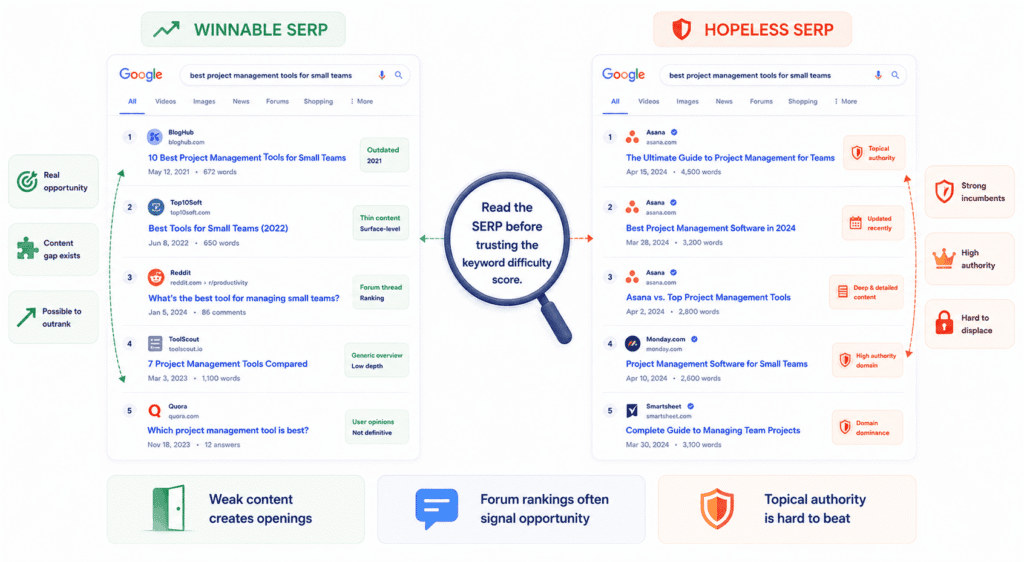

What a Winnable SERP Actually Looks Like vs. a Hopeless One

“Find low competition keywords” is advice that gets repeated so often it’s lost all meaning. Nobody explains what low competition actually looks like when you’re staring at a results page trying to make a real call on whether a keyword is worth three hours of your time.

So here’s the concrete version.

Forget the difficulty score for a moment. Open the results and just read what’s there. The first thing you’re trying to figure out isn’t who’s ranking — it’s why they’re ranking. Because those are very different questions, and the answer to the second one tells you whether you have a realistic shot.

Some content ranks because it genuinely earned it. It’s deep, current, written by someone who clearly knows the subject, and hosted on a site where this topic is central to everything they publish. That content is hard to displace and you should know that going in. But a lot of content ranks for a much simpler reason: the domain behind it is trusted broadly, and Google hasn’t yet found anything better to put there. Click through to the first result and you find a 700-word overview that skims the surface, doesn’t go anywhere useful, and was clearly written to cover the keyword rather than actually answer it. That’s not a strong incumbent. That’s a placeholder, and placeholders get replaced.

The other signal that genuinely excites me when I see it, and one that confuses a lot of beginners, is Reddit or Quora sitting in the top five. The instinct is to see a site with enormous domain authority and feel intimidated. That’s usually the wrong read. When Google pulls forum threads into the top results for a keyword, it’s often a sign that it couldn’t find a properly structured article that answered the question well enough. It settled. A focused, well-researched piece on a dedicated site has a real chance of beating a Reddit thread wherever the thread isn’t genuinely exceptional, and most of the time it isn’t.

Content age matters, but not the way people usually think. Old content sitting in position one isn’t automatically vulnerable. If it’s old and still genuinely good — still accurate, still complete, still the best available answer — Google has no reason to move it. What makes age an actual opportunity is when the topic has changed and the content hasn’t. Something that was true in 2021 that isn’t quite true anymore, or a space that’s evolved in ways the existing content doesn’t reflect. That’s a real argument for displacement. Age alone isn’t.

A hopeless SERP is easier to read once you’ve seen a few winnable ones, because it’s basically the opposite of everything above. Recent content, actively maintained. Written with obvious expertise — you can feel the depth when you read it, the kind of specificity that comes from someone who has actually done the thing rather than researched it. Multiple positions owned by the same domain. No forum threads, no thin overviews, no outdated angles. When every result looks like that, the difficulty score is almost irrelevant — you’re looking at a keyword that’s been properly claimed, and the honest answer is that you probably need more authority than you currently have before this fight is worth picking.
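Reading a SERP as winnable or hopeless is mostly judgement, but you can write the checklist down so you apply it the same way every time. This is a rough sketch. The `Result` fields and the `serp_openings` function are hypothetical. The “thin” and “outdated” flags are calls you still make yourself after reading each page, and Reddit and Quora stand in for forum sites generally. An empty list corresponds roughly to a hopeless SERP: no forum threads, no thin overviews, no outdated angles, and at least one domain holding multiple positions.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Forum sites mentioned in the text; extend with others in your niche.
FORUM_DOMAINS = {"reddit.com", "quora.com"}


@dataclass
class Result:
    url: str
    thin: bool = False      # your call: skims the surface without answering the query
    outdated: bool = False  # your call: the topic has changed and this page hasn't


def serp_openings(results):
    """List the 'winnable' signals present in the top five results."""
    top = results[:5]
    hosts = []
    for r in top:
        host = urlparse(r.url).netloc.lower()
        hosts.append(host[4:] if host.startswith("www.") else host)
    signals = []
    if any(h in FORUM_DOMAINS for h in hosts):
        signals.append("forum thread in the top five")
    if any(r.thin for r in top):
        signals.append("thin placeholder content")
    if any(r.outdated for r in top):
        signals.append("content the topic has outgrown")
    if len(set(hosts)) == len(hosts):
        signals.append("no domain holds multiple positions")
    return signals


# Hypothetical results page with several openings.
winnable = [
    Result("https://www.reddit.com/r/example/comments/abc"),
    Result("https://big-publisher.com/overview", thin=True),
    Result("https://old-blog.com/2021-guide", outdated=True),
]
print(serp_openings(winnable))
```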

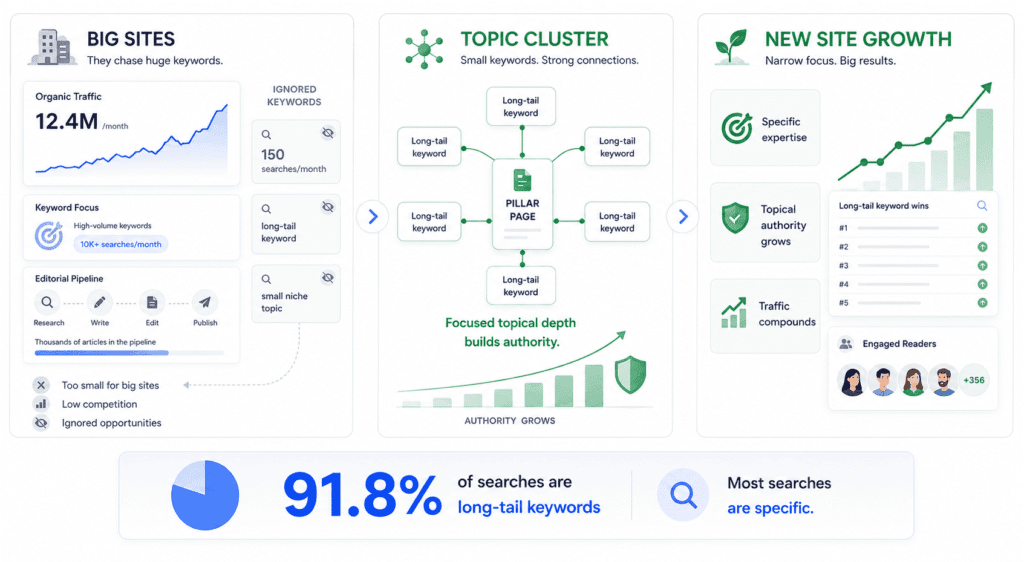

So Where Do New Sites Actually Win? (It’s Weirder Than You Think)

Here’s something that reframes the whole thing once it clicks: the sites making new site owners feel hopeless are almost entirely ignoring the keywords that are actually available to you.

Not because those keywords are bad. Because they’re too small.

A site publishing at scale — the kind with ten thousand articles and a content team — isn’t pitching keywords with 150 monthly searches in their editorial meetings. The return doesn’t justify the investment at their size. So those keywords sit there, genuinely underserved, either covered shallowly by someone who happened to stumble across them or not covered at all. For a new site that understands this, going narrow and specific isn’t settling for less — it’s the only realistic entry point into a competitive space.

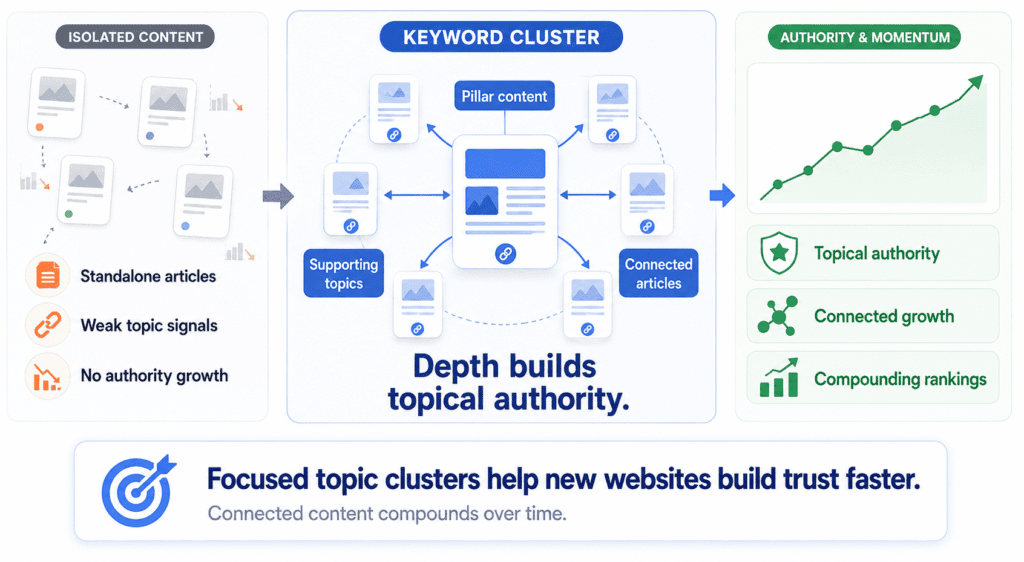

The traffic from any single small keyword won’t move the needle much. But this is where proper keyword research for new websites starts to look less like a hunt for individual targets and more like mapping a territory. Ten or fifteen tightly related small keywords, all covered well, all pointing toward a central piece of content — that cluster builds something more valuable than any individual ranking. It builds topical authority. It signals to Google that your site genuinely understands this space. And that signal is what eventually makes the larger keywords in your niche accessible. You don’t jump to them. You grow into them.

The other opening that doesn’t show up cleanly in keyword tools is the question that’s technically been answered but practically hasn’t. You’ve experienced this as a searcher — you click the top result, it mentions what you were looking for without actually resolving it, and you’re back on the results page thirty seconds later. Those searches exist in enormous numbers across almost every niche. The opportunity isn’t a gap in coverage — it’s a gap in quality. Someone wrote about it. Nobody wrote about it well enough.

And then there are the windows that open when something changes. A new tool enters the market. A platform updates how something works. A regulation shifts the landscape. For a brief period, nobody has established content on the new reality — new sites and old sites are equally positioned because the keyword barely existed six months ago. These windows close faster than most people expect. But they’re real, and being genuinely plugged into what’s changing in your niche is one of the few ways a new site competes on equal terms from day one rather than always fighting uphill.

There’s a term for the kind of keywords described throughout this section: long-tail keywords. It’s worth naming directly because the data behind it is more compelling than most people realise. According to Backlinko’s analysis of 306 million keywords, 91.8% of all search queries are long-tail terms. That isn’t a slim majority; it’s nearly ninety-two percent. The broad, high-volume terms that dominate keyword research conversations make up a small fraction of the distinct queries people actually type.

For a new site, this reframes the whole exercise. You’re not targeting niche keywords because you have no choice. You’re targeting the type of keyword that represents the vast majority of real search behaviour — and doing so with content specific enough to actually satisfy what the searcher came for. The volume looks small per keyword. The cumulative picture, across a focused cluster of well-chosen long-tail targets, looks very different.
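To see why the cumulative picture matters, it helps to add up a cluster. The keywords and volumes below are invented placeholders, not tool data. The only point is the arithmetic: several small targets sitting under one pillar topic add up to more than any one of them suggests.

```python
# Hypothetical cluster: one pillar page plus long-tail supporting articles.
# Volumes are invented placeholders, not real keyword-tool data.
cluster = {
    "pillar": "cooking for one",
    "supporting": {
        "cooking for one person without wasting ingredients": 170,
        "how to scale recipes down for one": 140,
        "freezing single portions of soup": 110,
        "grocery list for one person for a week": 260,
        "one-pan dinners for one": 90,
    },
}

volumes = cluster["supporting"].values()
combined = sum(volumes)
print(f"{len(volumes)} articles, smallest {min(volumes)}, combined {combined} searches/month")
# 5 articles, smallest 90, combined 770 searches/month
```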

The doors aren’t where the maps say they are. They’re in the gaps the mapmakers didn’t think were worth drawing.

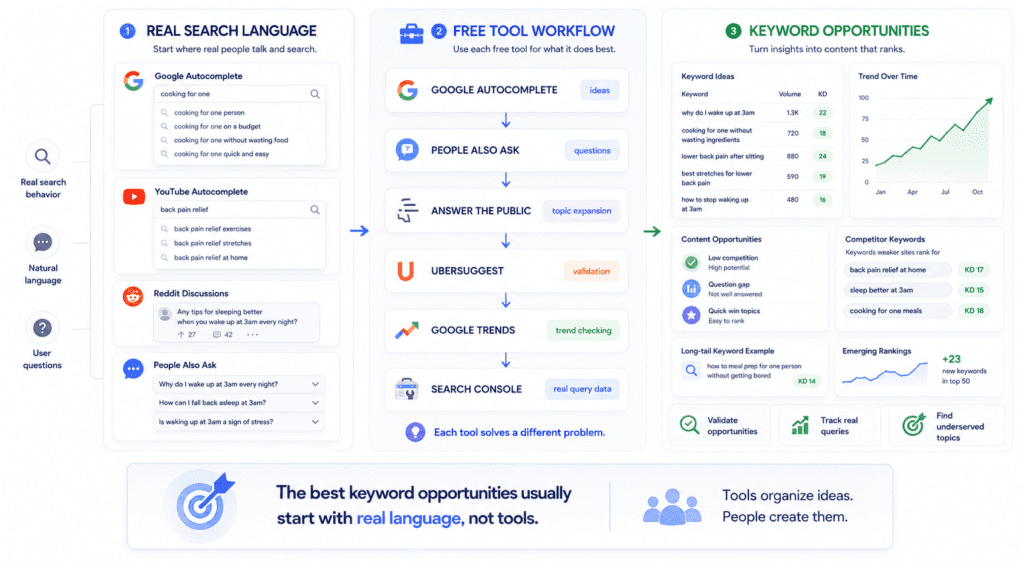

A Keyword Hunting Process That Doesn’t Require Spending $100/Month

There’s a version of this conversation that starts with “here are the best free keyword tools” and then lists eight of them. That’s not what this is. Tools without a process are just ways to generate more confusion faster. So let’s talk about the process first, and the tools will make more sense in context.

Starting With Seed Keywords That Aren’t Too Broad

Before any tool gets opened, most people have already made the mistake that limits everything downstream. They start with seed keywords that aren’t really keywords — they’re topic categories. “Fitness.” “Marketing.” “Home improvement.” These are entire industries, not starting points. Every keyword you generate from a seed that broad will be either hopelessly competitive or so vague it’s meaningless.

A seed worth working from is specific enough that you can picture a real person typing it. Not “cooking” — “cooking for one person without wasting ingredients.” Not “sleep” — “why do I wake up at 3am every night.” You can feel the difference immediately. One is a category. The other is a person with a specific problem sitting in front of a search bar at an inconvenient hour.

The best seeds almost never come from brainstorming at a desk. They come from paying attention to the actual language people use when they’re stuck — which means forums, Reddit threads, Facebook groups, comment sections, the questions that show up repeatedly in your niche’s communities. Industry insiders talk about topics. Real people ask questions. Those questions, phrased exactly the way they’re asked, are your seed material.

If you’re building a site in a space you have personal experience in, your own past confusion is genuinely valuable here. The questions you had when you were learning — especially the ones that were hard to find good answers to — are almost always real keywords. You know they’re real because you searched them. And if you struggled to find a satisfying answer, there’s a reasonable chance the content gap still exists.

One thing worth resisting early: the urge to validate seeds before you’ve finished generating them. Seeds are for opening up territory. Filtering comes later. Keep a messy running list and don’t judge it until you have enough raw material to work with.


The Free Tool Stack Worth Actually Using

Here’s where most free tool conversations go wrong — they treat every tool as a general-purpose keyword research solution and then wonder why none of them feel complete. Each of these tools is good at one specific thing. Use them for that thing and they’re genuinely useful. Ask them to do everything and you’ll end up frustrated.

Start with Google Autocomplete, and not the way most people use it. Don’t just type your seed and look at the handful of suggestions that drop down. Work it systematically: type your seed keyword followed by each letter of the alphabet and write down every suggestion that looks relevant. It’s slow and slightly tedious and worth every minute, because those suggestions are pulled from actual search behaviour. Not estimates. Not projections. Real things real people typed recently enough for Google to surface them. No paid tool gives you data that’s more directly grounded in reality than this.
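The tedious parts are typing twenty-six variations and keeping the suggestions in one clean list. Here’s a small sketch for both. It assumes you still type each query into Google yourself and paste back what you see. It doesn’t call any autocomplete service, and the helper names are hypothetical.

```python
import string


def alphabet_soup_queries(seed):
    """Seed followed by each letter a-z: the prompts to type into the search box."""
    return [f"{seed} {letter}" for letter in string.ascii_lowercase]


def add_suggestions(running_list, suggestions):
    """Append suggestions not already collected, ignoring case and stray spaces."""
    seen = {s.strip().lower() for s in running_list}
    for s in suggestions:
        cleaned = s.strip()
        if cleaned and cleaned.lower() not in seen:
            running_list.append(cleaned)
            seen.add(cleaned.lower())
    return running_list


queries = alphabet_soup_queries("why do i wake up at 3am")
print(queries[0], "|", queries[-1])  # why do i wake up at 3am a | why do i wake up at 3am z

# Paste back whatever autocomplete showed you for each prompt.
ideas = []
add_suggestions(ideas, ["why do i wake up at 3am anxiety", "Why do I wake up at 3am anxiety "])
print(ideas)  # ['why do i wake up at 3am anxiety']
```

The de-duplication keeps the messy running list usable without forcing you to filter it early.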

Worth doing the same thing on YouTube if your niche has any video presence. YouTube Autocomplete surfaces the same kind of natural-language search behaviour as Google. Because people searching on YouTube phrase things slightly differently (sometimes more conversationally, sometimes more specifically), you’ll find keyword variations that pure text-based research misses. Type the same seed keyword into YouTube’s search bar and note what it suggests. The overlap with Google is high, but the gaps between them are often where the most natural-sounding, least optimised keyword opportunities live.

While you’re in the search results, pay attention to People Also Ask boxes. Most people glance at them and move on. Treating them as a keyword research tool changes what you find. Expand a question, and the box generates more questions. Keep clicking and you can follow a thread of related questions that maps out how real people think about a topic: the sequence of understanding they’re trying to build, and the confusions they hit along the way. Every question in a PAA box is one Google has already decided is worth surfacing proactively, which is a strong hint that real people are asking it. That’s a meaningful filter that requires zero tool access.
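To keep track of where a People Also Ask thread leads, you can record which new questions appear each time you expand one, then walk them in the order you found them. This is only a sketch. The `expansions` mapping is hypothetical data you’d note down by hand. The walk is an ordinary breadth-first search, not anything Google exposes.

```python
from collections import deque


def follow_paa(start, expansions, limit=20):
    """Breadth-first walk of People Also Ask questions, in the order you'd reach them.

    `expansions` maps each question to the new questions that appeared when
    you expanded it (hypothetical, recorded by hand).
    """
    seen = {start}
    order = []
    queue = deque([start])
    while queue and len(order) < limit:
        question = queue.popleft()
        order.append(question)
        for follow_up in expansions.get(question, []):
            if follow_up not in seen:  # PAA often repeats questions; keep each once
                seen.add(follow_up)
                queue.append(follow_up)
    return order


expansions = {
    "why won't my sourdough rise": ["is my starter dead", "how warm should dough be to rise"],
    "is my starter dead": ["how to revive a sourdough starter"],
    "how warm should dough be to rise": ["is my starter dead"],
}
for q in follow_paa("why won't my sourdough rise", expansions):
    print(q)
```

The resulting order roughly mirrors the sequence of understanding a searcher moves through, which is useful when you plan headings or follow-up articles.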

AnswerThePublic is worth one session per topic, not ongoing use. Put in a seed and it generates a map of questions, prepositions, and comparisons around that term. The free version is limited enough to be slightly annoying for heavy use, but for an early research session it does something specific that’s hard to replicate manually — it shows you the question landscape around a topic all at once, which is useful for spotting angles you hadn’t considered. Don’t try to work through every result. Use it to identify clusters of questions you hadn’t thought to explore, then take those into Google.

For actual volume and difficulty numbers, the free tier of Ubersuggest gives you enough to work with if you use it for validation rather than discovery. Find the keywords first through the methods above, then check rough volume and competition in Ubersuggest before committing to writing. The numbers aren’t as precise as a paid tool but they’re directionally accurate — enough to tell you whether a keyword has any meaningful search volume or whether you’d be writing for an audience of twelve.

Google Trends costs nothing and solves a specific problem that a single volume figure can’t: it tells you whether interest in a keyword is growing, stable, or quietly dying. A keyword with 800 monthly searches that’s been declining for two years is a different proposition to one with the same volume that’s been rising. For a new site choosing between two similar keywords with comparable difficulty, Trends is a genuinely useful tiebreaker. Pick the one with rising interest and you’re building content with momentum behind it, rather than content aimed at a topic that peaked when a different administration was in office.
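If you export a keyword’s interest-over-time data from Trends, the direction of travel can be reduced to a single number. The sketch below is a minimal example under stated assumptions: it fits an ordinary least-squares slope to monthly interest values, and the two series are invented for illustration rather than real Trends data. Only the sign really matters, since Trends values are relative (scaled 0 to 100) rather than raw search counts.

```python
def trend_slope(interest: list[float]) -> float:
    """Ordinary least-squares slope of interest against month index.

    Positive = rising interest, negative = declining. Needs at least two points.
    """
    n = len(interest)
    if n < 2:
        raise ValueError("need at least two data points")
    mean_x = (n - 1) / 2                      # mean of month indices 0..n-1
    mean_y = sum(interest) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(interest))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


# Hypothetical monthly interest values (0-100, the scale Google Trends uses).
keyword_a = [62, 60, 57, 55, 51, 48, 46, 44, 41, 39, 36, 34]
keyword_b = [30, 32, 31, 35, 37, 38, 41, 43, 44, 47, 49, 52]

for name, series in [("keyword A", keyword_a), ("keyword B", keyword_b)]:
    slope = trend_slope(series)
    direction = "rising" if slope > 0 else "declining or flat"
    print(f"{name}: {slope:+.2f} points/month ({direction})")
```

For strongly seasonal keywords, use a window covering at least a couple of full years so that a single spike doesn’t dominate the slope.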

It’s also useful for spotting seasonal patterns before they catch you off guard. Some keywords spike predictably at certain times of year. Publishing content targeting a seasonal keyword three months before the spike rather than during it gives a new site time to build some ranking before the moment of peak demand arrives.

Once you have content live, Google Search Console becomes the most valuable free tool in your entire stack — and it’s the one most beginners either ignore or don’t set up properly. The moment your pages start getting indexed, Search Console shows you what queries they’re appearing for, including ones you never deliberately targeted. Those accidental appearances matter enormously. They show you what Google genuinely thinks your content is about, which is sometimes different from your intention, and they regularly surface keyword opportunities that no amount of upfront research would have found.

The combination that doesn’t get nearly enough attention in conversations about keyword research for new websites is Reddit plus a plain Google search. Spend time in the subreddits and communities where your target audience actually talks, not to mine for keywords directly, but to absorb the specific language people use when they’re frustrated, confused, or trying to explain a problem to someone else. Then take the most specific and natural-sounding phrases from those conversations and search them in Google. Some will have no real search presence. But some will surface genuine keyword opportunities phrased the way people actually speak rather than the way SEO content gets written, and that gap between natural language and optimised language is one of the most consistently underserved spaces in almost every niche.

One more method belongs in this stack and rarely gets mentioned: looking directly at what slightly weaker competitors are already ranking for.

Not the dominant sites in your space — those are the ones that make new sites feel hopeless, and their keyword lists aren’t accessible to you yet. The useful targets are the sites one or two levels below them: newer domains, smaller blogs, focused niche sites that have been publishing for a year or two and have started gaining traction. What are they ranking for? What keywords are sending them traffic?

The free tiers of Ubersuggest and Semrush both let you enter a competitor’s URL and see a limited view of their ranking keywords. The view isn’t comprehensive without a paid plan, but it’s good enough to surface keywords you hadn’t considered and to confirm that real sites at a similar authority level are ranking for terms you were unsure about targeting. Seeing a keyword ranked by a site with authority comparable to yours is more useful than any difficulty score, because it confirms the door is actually open rather than just suggesting it might be.

Use the tools to validate. The interesting stuff rarely lives in the tools themselves. It lives in the language.

📖 Related: Best SEO Tools for Small Businesses

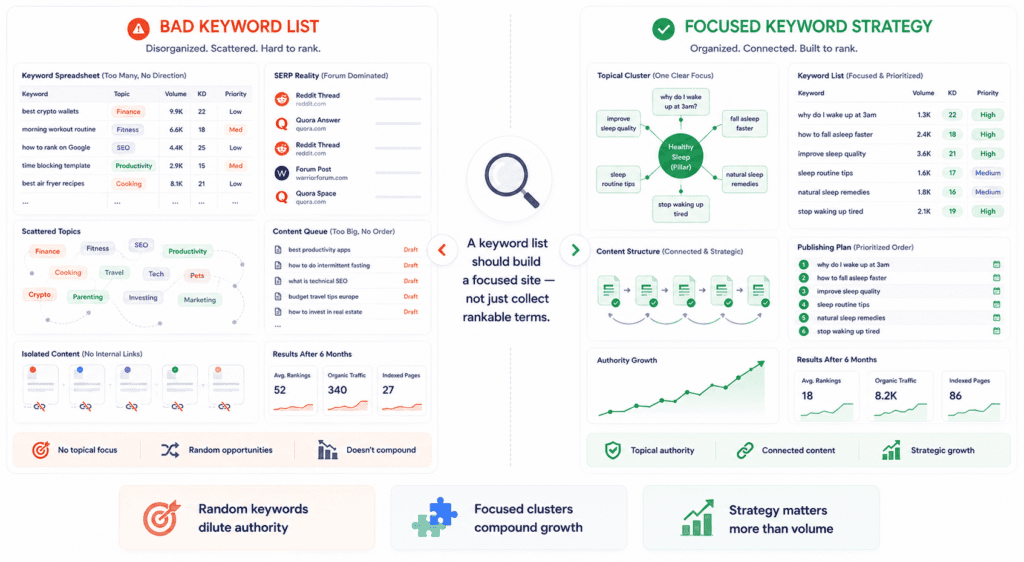

Confessions of a Bad Keyword List

At some point, almost every new site owner ends up with a spreadsheet they’re quietly proud of. Organised columns. Colour coding. A priority system. Forty or sixty or a hundred keywords that all seemed reasonable when they were added. And then the content gets written, published, and mostly ignored — and it’s genuinely hard to figure out why, because the list looked right.

Here’s what a bad keyword list actually looks like from the inside, because the mistakes aren’t always obvious until you’ve lived through them.

The one that catches the most people, and the one that’s hardest to spot before it happens, is building a list around keywords the tools label low competition that are, in reality, effectively owned by Reddit and Quora. The difficulty score looks green. The logic holds up on paper. But when you open the actual results, positions one through five are a mix of forum threads, community discussions, and aggregator pages with domain authority in the eighties. That “low competition” reading reflects a shortage of structured articles, not a shortage of strong competition. Google has already decided this type of query belongs to community content. A well-optimised blog post doesn’t beat that; it just sits below it, leaving you confused about why it isn’t ranking.

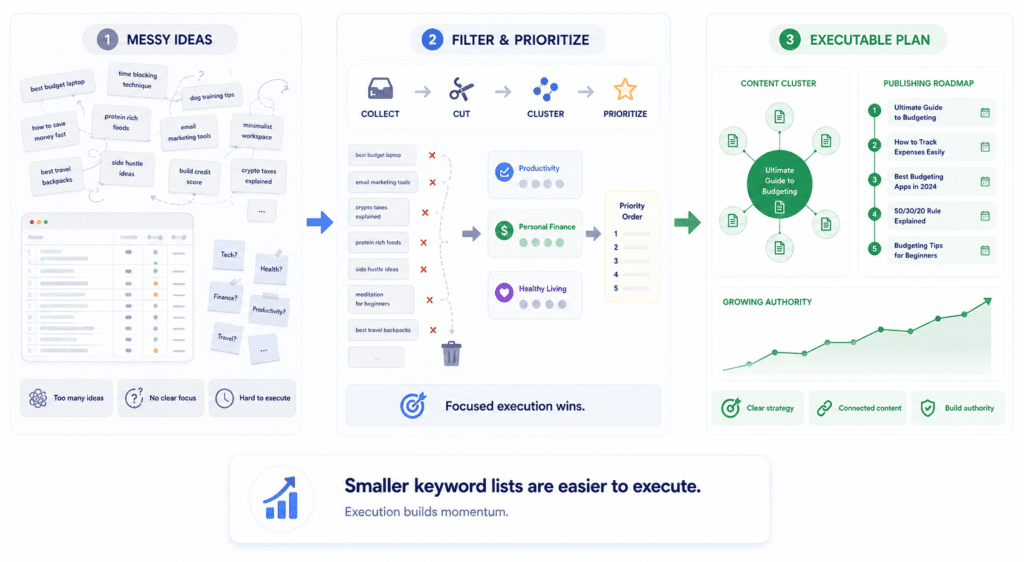

The second mistake is one I find genuinely harder to talk people out of, because it feels like productivity while it’s happening. You sit down to do keyword research, find something interesting, follow that thread to something adjacent, follow that to something else — and an hour later you have a list of thirty keywords across twelve different micro-topics, each one reasonable in isolation, collectively pointing in every direction at once. The list reflects your curiosity rather than a content strategy. And curiosity is a terrible organising principle for a new site trying to build topical authority in something specific.

Content built from that kind of list doesn’t compound. Each article exists without the surrounding context that would make the others stronger. Six months of publishing and you have a collection of unrelated pieces instead of a site Google can clearly categorise. The articles might rank for something eventually. They just won’t reinforce each other — and that reinforcement is most of where the long-term value comes from.

There’s a version that’s more specific to certain niches but worth naming: the keyword list that’s genuinely relevant to your subject but belongs to a completely different stage of the reader’s journey than where you’re actually playing. A new personal finance site targeting “best index funds 2026” is chasing a keyword where the searcher has already done the learning and wants a trusted recommendation — not an explanation, not a beginner’s guide, a specific answer from a source they have reason to believe. A site with no track record isn’t that source yet. The keyword isn’t wrong exactly. The site just isn’t in a position to satisfy it, which Google has a way of recognising even when you don’t.

And then there’s the one that wastes the most actual time: a list so large and unordered that the writing never really starts. Or it starts in the wrong place. Or it starts, produces a few articles that don’t immediately rank, and stops — because there’s no sequencing logic that would tell you whether to keep going or try something different. Two hundred keywords without an execution order isn’t a content strategy. It’s a backlog. Backlogs don’t publish themselves and they don’t build authority while they sit in a spreadsheet.

The thing all of these have in common (and this is the part that took me longer than I’d like to admit to see clearly) is that the list was built around what seemed rankable rather than what the site was trying to be. Proper keyword research for new websites isn’t just a ranking exercise. It’s an early decision about identity: what does this site actually cover, who does it cover it for, and what does a reader get here that they can’t get from a site with ten years of domain authority behind it?

When the answer to that question is clear before the research starts, the list almost builds itself. When it isn’t, you end up with a spreadsheet that looks impressive and does nothing.

📖 Related: Semrush vs Ahrefs: Which One Is Worth It for Your Site?

How to Build a Keyword List a New Site Can Actually Execute On

At some point the research has to stop and the list has to become real — specific, sequenced, short enough to actually finish. This is where most of the thinking from the previous sections either comes together or falls apart, depending on whether the list reflects honest judgment about where the site currently is or optimistic judgment about where it might eventually get to.

Those are very different lists. Only one of them is useful right now.

Pull everything together first — every keyword from autocomplete, every PAA question, every phrase mined from Reddit, everything the free tools surfaced. Don’t filter while you’re still generating. Get the whole messy pile in one place, even the ones you’re not sure about, because a list with real options in it produces better final selections than a list that was curated too early and ended up thin.

Then start cutting. And cut harder than feels right.

Anything where the SERP is dominated by sites you genuinely couldn’t compete with in the next six months — gone. Anything where you’re not sure what format the content should take because the intent isn’t clear — set aside for later, don’t force it now. Anything that’s interesting to you personally but doesn’t connect to what the rest of the site is building — this one is harder to cut because it feels wasteful, but leaving it on the list is more wasteful. It’ll either never get written or it’ll get written and sit in isolation, building nothing.

What remains after honest cutting should feel uncomfortably small. That discomfort is the point. A focused list of twelve to fifteen keywords that you’re genuinely going to execute on is worth more than sixty keywords that exist in a spreadsheet while you figure out where to start. The list isn’t a measure of how much research you did. It’s a commitment to what you’re actually going to do next.

📖 Related: Surfer SEO Review: Is It Worth It?

How to Prioritise When Everything Feels Equally Important

The sequencing question is where good keyword research for new websites most often stalls. The cutting is done, the list is focused, and then everything on it seems equally valid and the whole thing starts to feel arbitrary. Pick one, write it, hope for the best.

There’s a better frame than difficulty score or search volume for deciding what goes first, and it’s this: which keyword, if you wrote something genuinely excellent around it today, would do the most to tell Google what this site is actually about?

Usually that’s the broadest keyword on your list that’s still within realistic reach — not so broad it’s hopeless, not so narrow it’s a footnote. Something that sits at the centre of the topic territory you’re trying to own. Write that one first. Not because it’ll rank fastest. It probably won’t. But because every article you publish after it has something to orient around, something to link back to, something that gives the later, more specific pieces a home to belong to.

After that, sequence by a combination of two things that don’t show up as columns in most keyword spreadsheets: how confident are you that you can write something meaningfully better than what’s currently ranking, and how directly does this keyword feed back into the cluster you’re building? A keyword with moderate difficulty and weak existing content beats a low-difficulty keyword pointing in a different direction, even if the numbers look cleaner on paper.
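If your list lives in a spreadsheet or a script, those two judgement calls can drive the ordering directly. This is a minimal sketch, not a standard formula: the 1 to 5 scores are hypothetical values you’d assign by hand after reading each SERP, and the keywords and numbers are invented for illustration. Tool difficulty is used only to break ties.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    can_beat_serp: int  # 1-5, hypothetical: confidence you can beat what currently ranks
    cluster_fit: int    # 1-5, hypothetical: how directly it feeds the cluster you're building
    difficulty: int     # the tool's difficulty score, used only as a tiebreaker


def sequence(keywords: list[Keyword]) -> list[Keyword]:
    """Highest (confidence x cluster fit) first; lower difficulty wins ties."""
    return sorted(keywords, key=lambda k: (-(k.can_beat_serp * k.cluster_fit), k.difficulty))


backlog = [
    Keyword("freelance invoice template", can_beat_serp=4, cluster_fit=1, difficulty=8),
    Keyword("how to stop underestimating project time", can_beat_serp=4, cluster_fit=5, difficulty=22),
    Keyword("context switching between clients", can_beat_serp=3, cluster_fit=5, difficulty=15),
]

for k in sequence(backlog):
    print(k.phrase)
```

Multiplying the two scores is a deliberate choice: a keyword that scores low on either one sinks, which is why the low-difficulty invoice keyword, pointing away from the cluster, ends up last despite having the cleanest numbers.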

One thing that’s easy to overlook: new sites typically see their first real ranking movements somewhere between three and six months after publishing. If the first five articles all target keywords that take eight to ten months to show movement, you’ll have no feedback to work with before the doubt sets in. Mix in a few faster-moving targets — more specific, lower competition, probably lower volume — that give you something to observe and adjust from while the more ambitious pieces are still building.

Momentum matters psychologically as much as strategically. A list that gives you nothing to measure for nine months is hard to stay committed to.

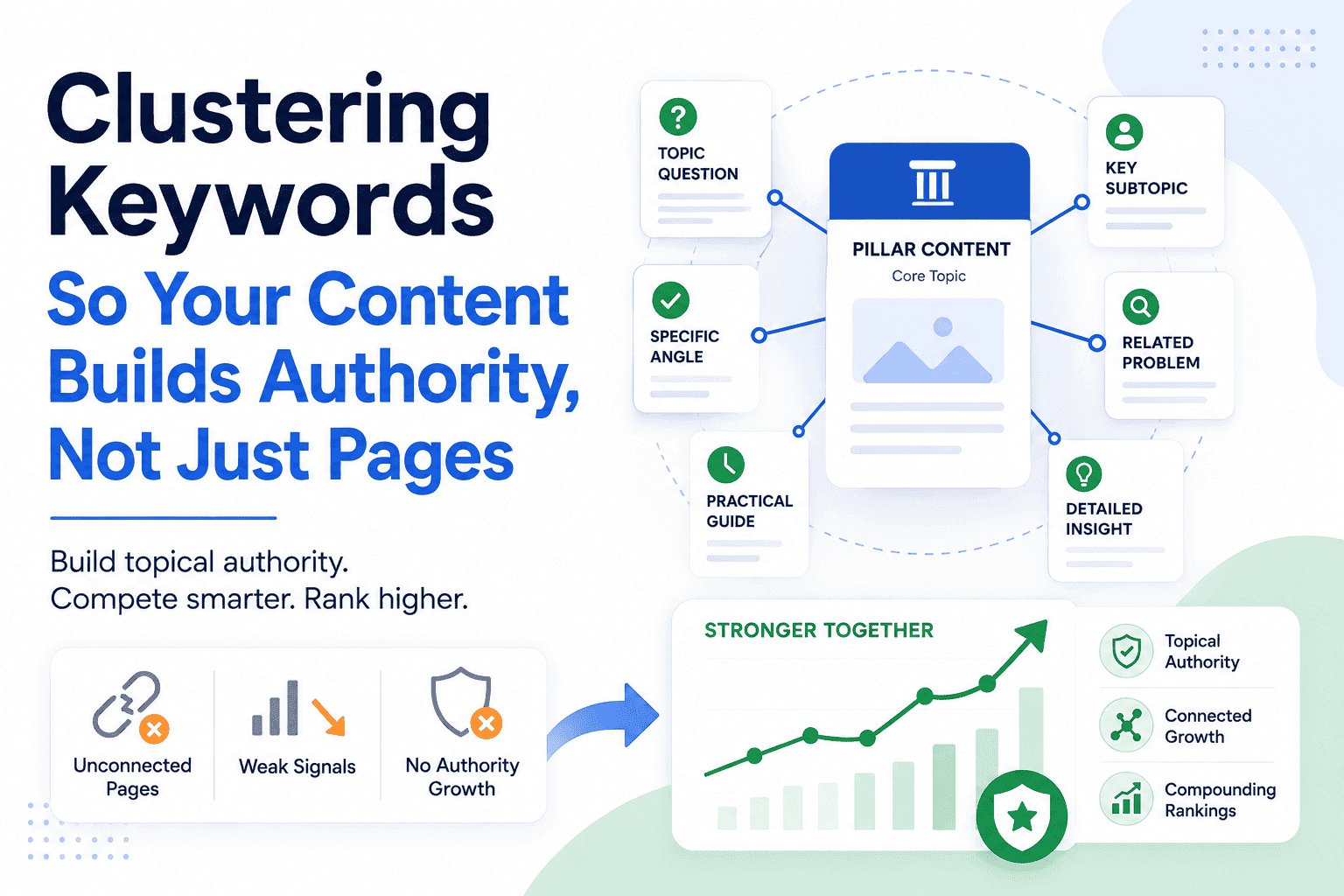

Clustering Keywords So Your Content Builds Authority, Not Just Pages

This is the structural decision that separates a keyword list from something that actually compounds — and it’s the thing most guides either skip or explain so abstractly it doesn’t land.

The basic idea is simple enough: instead of publishing individual articles that each target their own keyword and then sit independently of everything else, you group related keywords together so that each piece of content is part of a larger topic territory. One article — usually the broadest, most central piece in the group — acts as an anchor. The others go deeper into specific angles, specific questions, specific sub-problems. They link to the anchor. The anchor links back to them. Together they signal something an isolated article never can: that your site doesn’t just mention this topic, it understands it from multiple directions.

For a new site, this matters more than it might seem. You can’t manufacture years of domain history. You can’t build a significant backlink profile in three months. But you can, in a focused publishing period, become the most thorough source on a specific narrow topic that bigger sites have only skimmed. That depth is something Google can recognise and act on even without the authority signals an older domain has accumulated.

What this looks like in practice: imagine a new site covering productivity for freelancers. The anchor piece might target something like “time management for freelancers” — central enough to matter, specific enough to be realistic. Supporting articles cluster around it: how to structure a freelance workday, how to stop underestimating project time, dealing with context switching between clients, the specific problem of working from home when home keeps interrupting. Each one a real keyword. Each one linking back to the anchor. Each one deepening the signal that this site genuinely covers this territory rather than passing through it.
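The linking pattern is simple enough to plan before anything is published. Here’s a minimal sketch that represents the freelancer example as a mapping from an anchor keyword to its supporting keywords and lists every internal link the cluster needs. The data structure and function name are illustrative, not part of any SEO tool.

```python
# Hypothetical cluster: anchor keyword -> supporting keywords (from the example above).
clusters: dict[str, list[str]] = {
    "time management for freelancers": [
        "how to structure a freelance workday",
        "how to stop underestimating project time",
        "context switching between clients",
        "working from home when home keeps interrupting",
    ],
}


def internal_links(clusters: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return (from_page, to_page) pairs: every supporting piece links to its
    anchor, and the anchor links back to every supporting piece."""
    links = []
    for anchor, supporting in clusters.items():
        for page in supporting:
            links.append((page, anchor))  # supporting -> anchor
            links.append((anchor, page))  # anchor -> supporting
    return links


for src, dst in internal_links(clusters):
    print(f"{src}  ->  {dst}")
```

A cluster with one anchor and n supporting articles produces 2n links, so checking a published cluster against this list is a quick way to spot a supporting piece that never got linked back.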

The mistake worth avoiding: starting too many clusters at once. Three clusters with five articles each will usually outperform eight clusters with two articles each, because depth within a topic is what creates authority, not breadth across topics. The temptation to keep starting new clusters before finishing existing ones is strong: new keywords always look interesting, and adjacent topics always seem worth covering. Resist it until the clusters you’ve started are substantial enough to stand on their own.

There’s no universal right size for a cluster. Some topics subdivide naturally into a dozen distinct questions. Others are better served by one comprehensive article that covers the ground a cluster would have covered in fragments. The test isn’t whether you can think of enough supporting articles to fill out a structure — it’s whether each supporting article would genuinely answer a distinct question someone is actually asking. If you’re manufacturing supporting articles to hit a number, the cluster is too forced. If each one exists because there’s a real question behind it, the cluster is doing its job.

When the list is trimmed, sequenced, and organised into clusters that reflect real topic depth rather than structural ambition, something shifts. The research stops feeling like preparation and starts feeling like a plan. You know what you’re writing this week, you know how it connects to what comes next, and you know — roughly, honestly — what you’re building toward. That clarity is what makes the difference between a new site that builds momentum over six months and one that publishes inconsistently and can never quite tell whether the strategy is working or just hasn’t worked yet.

One last thing worth saying clearly, because most guides frame keyword research as a project with a start and an end: it isn’t. The list you’re building today is a first list, not a permanent one. In six months, some of those keywords will have ranked and shown you what’s working. Others will have stalled and shown you what to rethink. Your domain will have slightly more authority than it does today, which means keywords that were out of reach at launch may be worth revisiting. New topics will have emerged in your niche. Competitors will have published content that opens or closes gaps you hadn’t noticed before.

The sites that build consistent organic traffic treat keyword research as a recurring habit, not a one-time task. Revisit the list every three to six months. Not to rebuild it from scratch — to update it with what you’ve learned from actually publishing. The research gets faster and more accurate the longer you do it, because you’re no longer guessing what your site can rank for. You have data. Use it.

One Keyword, One Page — And Why It Matters More Than It Sounds

Before the list is finalised, there’s one check worth doing that takes ten minutes and prevents a problem that’s genuinely annoying to fix later. Go through every keyword on the list and confirm that no two of them are so similar in intent that you’d essentially be writing the same article twice.

This is called keyword cannibalisation, and it happens more often than you’d expect, especially when a list has been built from multiple research sessions at different times. You end up with “how to start freelance writing” and “freelance writing for beginners” both on the list, both pointing at the same intent, both competing for the same searcher. When two pages on the same site target the same keyword or the same intent, they compete against each other in the search results, and instead of one strong page ranking, you get two weak ones splitting the signal.

The fix before publishing is simple: for every keyword on your list, ask whether a different keyword on the same list could plausibly be answered by the same article. If yes, combine them — target the primary keyword with one article and use the secondary as a supporting term within that piece rather than a separate target. One keyword, one page, one clear intent is the principle. It sounds obvious until you’re six months in and wondering why two of your articles keep swapping positions for the same search term.
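For a list longer than a few dozen keywords, a rough automated pass can shortlist candidate pairs before the manual check. This sketch assumes that heavy overlap in content words is a decent proxy for shared intent, which it only partly is. It scores each pair by Jaccard similarity (shared words divided by total distinct words), uses a small hand-picked stopword list, does no stemming (so “freelance” and “freelancers” don’t match), and misses synonyms entirely. The 0.4 threshold is an arbitrary starting point. Treat anything it flags as a pair to review, not an automatic merge.

```python
from itertools import combinations

# Small hand-picked stopword list (an assumption; extend it for your niche).
STOPWORDS = {"how", "to", "for", "the", "a", "an", "of", "in", "on", "and", "is", "what", "with"}


def tokens(keyword: str) -> set[str]:
    """Lower-cased content words, with stopwords removed (no stemming)."""
    return {w for w in keyword.lower().split() if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def possible_cannibals(keywords: list[str], threshold: float = 0.4) -> list[tuple[str, str, float]]:
    """Flag keyword pairs whose content words overlap heavily, for manual review."""
    flagged = []
    for a, b in combinations(keywords, 2):
        score = jaccard(tokens(a), tokens(b))
        if score >= threshold:
            flagged.append((a, b, round(score, 2)))
    return flagged


keywords = [
    "how to start freelance writing",
    "freelance writing for beginners",
    "time management for freelancers",
]
for a, b, score in possible_cannibals(keywords):
    print(f"{score}: '{a}' vs '{b}'")
```

On this sample list only the two freelance-writing keywords are flagged (they share two of four content words), which matches the pair you’d merge by hand.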

Disclaimer: This article may contain affiliate links. If you make a purchase through these links, I may earn a commission at no extra cost to you. All opinions are based on hands-on testing and independent research.