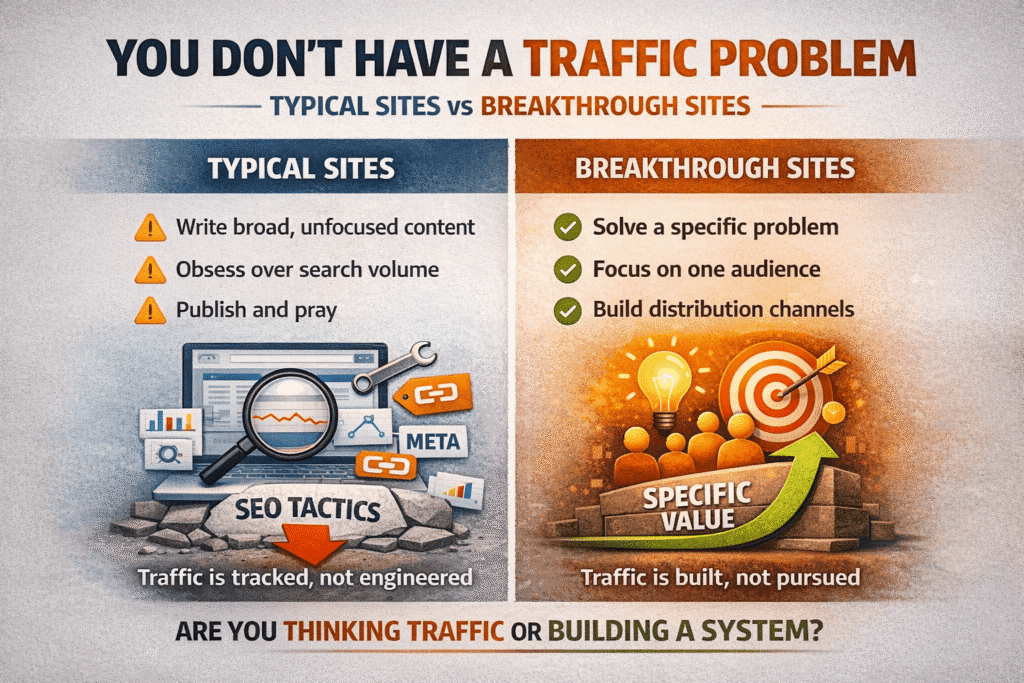

Most traffic problems aren’t traffic problems.

They’re relevance problems, distribution problems, audience problems — things that don’t show up cleanly in a dashboard but explain almost everything about why some sites grow steadily and others plateau no matter how much they publish or optimize.

What actually drives website traffic, in the long run, has less to do with tactics than most advice suggests. The tactics matter — but they’re multipliers. If there’s nothing underneath them worth multiplying, the math doesn’t work.

You Don’t Have a Traffic Problem

Here’s what nobody tells you when you’re staring at a flat line in Google Analytics: traffic isn’t the problem. It never really is.

Traffic is just what happens when enough people find something worth finding. Which means if it’s not coming, the question isn’t “how do I get more of it” — it’s “have I actually built something specific enough, useful enough, or honest enough that people would seek it out if they knew it existed?”

That’s an uncomfortable question because it doesn’t have a tactical answer.

Most site owners skip straight to the tactics anyway. They audit title tags, chase backlinks, tweak meta descriptions, and publish more frequently. None of that is wrong exactly, but all of it assumes the core product is already solid. Often it isn’t. Often the site is a slightly worse version of ten other sites covering the same ground in the same way, and no amount of technical optimization fixes that.

What actually drives website traffic — sustainably, not just in bursts — is being specific enough, useful enough, and distinct enough that people have a genuine reason to choose your content over everything else already indexed. In my experience, the sites that break through aren’t usually the most optimized. They’re the most specific. They picked a narrow enough lane that they could actually be the best answer to something — not the most comprehensive answer, not the most keyword-rich, just the most genuinely useful for one type of person with one type of problem.

The irony is that kind of specificity feels risky. Narrowing down feels like leaving traffic on the table. But broad, safe, covers-everything content is precisely what gets lost in the noise — because Google has ten thousand versions of it already indexed, and yours isn’t meaningfully different.

So before the next audit, the next content calendar, the next link-building outreach — it’s worth sitting with the harder question: does this site have a clear reason to exist that nobody else is filling? If the answer is vague, that’s where the work actually starts.

The Sites Growing Right Now Aren’t Doing What You Think

If you reverse-engineer the sites gaining real traction — not viral spikes, actual compounding growth — the pattern isn’t what most SEO content would have you believe.

It’s not the publishing cadence. It’s not the backlink velocity. It’s that somewhere along the way, a specific group of people decided the site was theirs.

That sounds vague until you see it happen. A site covers a niche topic with enough honesty and specificity that readers start recommending it unprompted — in forums, in reply threads, in Slack groups you’ve never heard of. The site didn’t manufacture that. It earned it by being the most straight-talking resource in a space full of hedged, monetization-first content. Once that reputation exists, traffic becomes almost self-reinforcing. New visitors arrive pre-convinced it’s worth reading.

Most sites never get there because they’re optimized to avoid alienating anyone. Softened opinions, broad topics, safe angles. The content is technically correct and completely forgettable. It ranks occasionally, gets skimmed, and leaves no impression. Nobody shares it because sharing it doesn’t say anything about the person sharing it.

Understanding what actually drives website traffic means looking past the tactics and noticing this pattern: the sites that grow aren’t just well-optimized — they’re genuinely chosen. By a specific audience, for a specific reason, in a way that no keyword strategy directly creates.

The format assumption is worth questioning too. Long-form comprehensive content used to be a reliable edge. It’s less of one now — not because length doesn’t matter, but because everyone figured out the long-form game and started playing it. The sites pulling ahead aren’t necessarily writing more. They’re writing things that are harder to replicate: genuine analysis, uncomfortable conclusions, takes that have a real person’s fingerprints on them.

There’s one more thing. A lot of the sites growing right now built an audience before they built a content library. They were present in communities, newsletters, and conversations — places their future readers already spent time — before their own site had much to show. The traffic didn’t discover the audience. The audience came first, and the traffic followed.

That sequence gets skipped constantly because it’s slower and less measurable than keyword research. But it’s the difference between a site people find and a site people return to.

Distribution Is the Work — Publishing Is Just the Beginning

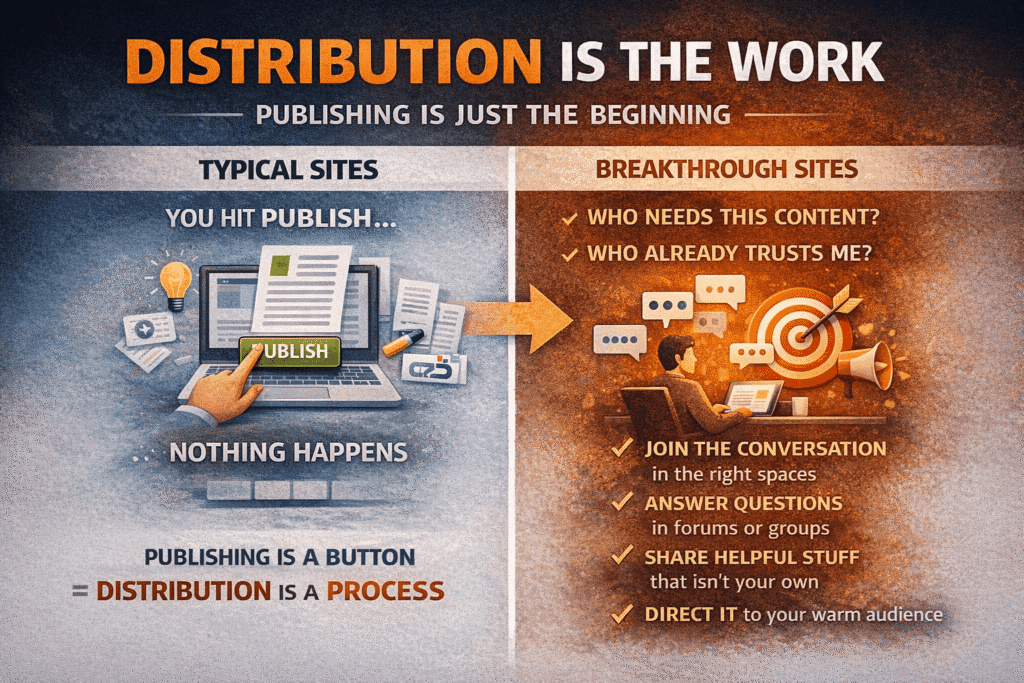

Nobody talks about the part where you hit publish and nothing happens.

You spent days on the piece. The research is solid, the argument is clear, the thing is genuinely useful. And then — silence. A handful of visits, mostly from crawlers. This isn’t a content quality problem. It’s a distribution problem, and it’s the part of the process that most content advice skips entirely because it’s harder to systematize and less satisfying to write about than keyword research.

The publish button is not a distribution strategy.

What distribution actually means — not the recycled advice version, but the version that moves the needle — is knowing where your reader already is before you write anything. Not after. That question shapes everything: the angle, the format, the depth, the title. A piece written for someone who hangs around a specific subreddit reads differently than one written for a newsletter audience or an industry Slack group. And it should.

Most people reverse this completely. They write first, then figure out where to put it. Then they share it on their own social channels to an audience that mostly doesn’t exist yet, call that distribution, and wonder why it didn’t work. This is one of the most common reasons sites plateau early — not because the content is weak, but because it never reached the people it was written for.

When you dig into what actually drives website traffic over the long term, distribution keeps coming up as the missing variable. Not as an afterthought, but as something that gets decided before the first draft — who reads this, where do they already go, and how does this piece get in front of them there.

The version of distribution that actually builds traffic over time is less about broadcasting and more about being present in the places your readers trust before you need anything from them. Answering questions in forums. Contributing genuinely to community conversations. Being the person who shares other people’s useful work without an agenda. It’s slow and it doesn’t scale neatly, which is exactly why most sites don’t do it.

There’s a trap on the other end too. The “repurpose into every format, post on every platform” approach sounds like distribution but it’s mostly noise. Thin presence everywhere is not the same as real presence somewhere. An audience that actually knows you in one place is worth more than algorithmic impressions across six platforms where you’re functionally a stranger.

The sites that grow because of distribution tend to have built relationships before they needed them. Not as a tactic — as a genuine habit of showing up, contributing, and being findable in the right rooms. By the time they publish something worth reading, there are already people primed to read it.

That’s the version of distribution that works. It’s just not the version that fits on a checklist.

Algorithms Are Behavioral Mirrors, Not Gatekeepers

There’s a persistent idea in SEO circles that Google is something to be negotiated with — a system of rules that rewards compliance and punishes deviation. That mental model produces exactly the wrong instincts.

Google isn’t deciding who deserves traffic. It’s measuring who’s already earning attention. Every core update, every quality signal, every ranking factor is an attempt to get better at reading one thing: do real people find this useful enough to click, stay, return, and share? The algorithm didn’t invent that standard. Human behavior did. Google is just trying to catch up to it.

The practical implication is uncomfortable for anyone who’s spent time on technical optimization. It means the ceiling on “optimizing for Google” keeps dropping — not because the rules keep changing arbitrarily, but because Google keeps getting better at seeing through the tactics that used to work. Keyword stuffing, thin content padded to hit word counts, link schemes — these worked until the mirror got cleaner. The mirror is getting cleaner every year.

Understanding what actually drives website traffic means accepting that the algorithm was never the real audience — it was always a proxy for one. Every signal Google measures is an attempt to approximate something it can’t directly see: whether a real person found what they were looking for, or left disappointed.

What holds up is harder to manufacture. A site where people actually read past the first paragraph. Where they come back without being retargeted. Where they link to it in conversations because it said something worth referencing. Google can’t fully measure any of that directly — but it can measure the behavioral footprints those things leave behind. Time on page, return visits, branded search volume, click-through rates. The algorithm is always approximating. The approximation keeps improving.

Technical SEO still matters in this picture — just not in the way most people frame it. A slow site, a confusing structure, pages that can’t be crawled properly — these create friction between good content and the people trying to find it. Fixing them isn’t gaming anything. It’s just removing obstacles. The mistake is thinking that removing obstacles is the same as building something worth finding.

In my experience, the sites most rattled by algorithm updates are the ones that were optimizing for the gap between what Google could measure and what was actually true about their content. When the measurement improves, the gap closes, and the rankings follow. The sites that barely noticed the update were the ones where the measurement was already roughly accurate — because they weren’t trying to get ahead of it.

The algorithm isn’t the audience. But it’s getting better at pretending to be one.

Consistency Is Overrated. Memorability Isn’t.

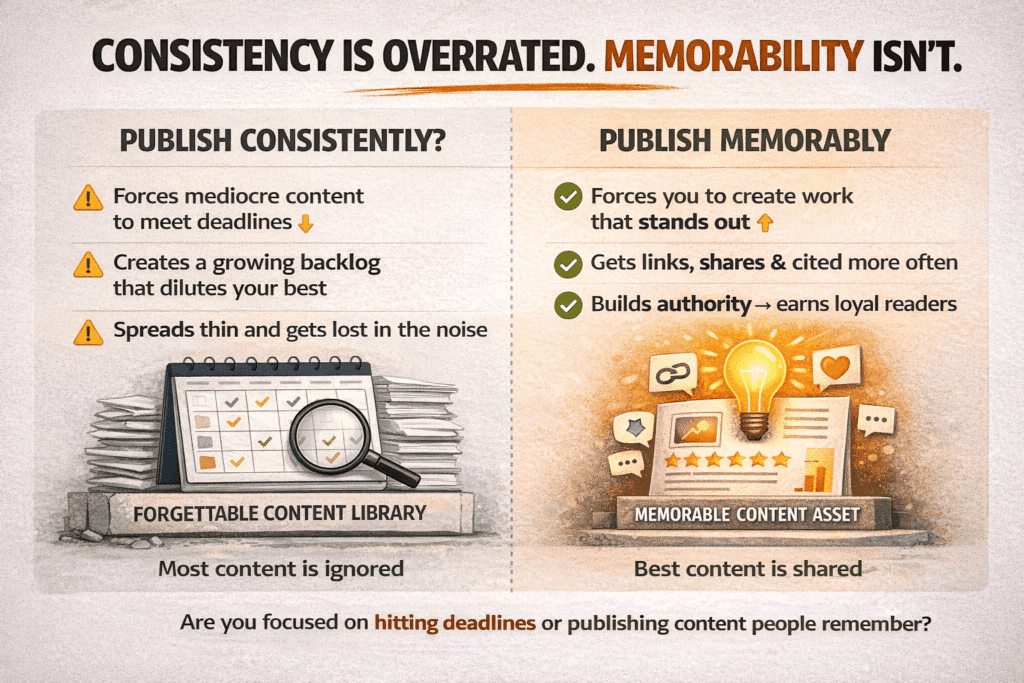

At some point “publish consistently” became the default answer to every content growth question. Struggling to get traffic? Be more consistent. Site not growing? You need a content calendar. It’s the advice equivalent of “drink more water” — not untrue, not useful.

The sites that get cited as proof of consistency working almost never grew because of the frequency. They grew because they published things people remembered, and they did it often enough for that reputation to compound. Frequency was a side effect of having something to say, not a strategy in itself. The retelling flips the causality, and a lot of sites end up optimizing for the wrong variable.

What nobody talks about is the backlog problem. Publish consistently for two years at average quality and you’ve built a library of forgettable content that now has to be maintained, crawled, and accounted for. It dilutes the good work. It signals to returning visitors — subconsciously, but reliably — that most of what this site produces is fine and skimmable. Recovering from that is a harder project than most sites realize until they’re already in it.

Memorability operates completely differently. A single piece that reframes how someone thinks about a problem, one that makes them screenshot something, send it to a colleague, or come back to it six months later, creates a different kind of reader than one who found you through a keyword and left after ninety seconds. That reader tells people. They link to it without being asked. Next time they search for your site by name instead of searching for a topic.

Those behaviors are exactly what the algorithm is trying to measure. And when you look at what actually drives website traffic that compounds (not spikes, not short-term ranking bumps, but the kind that grows quietly and holds), it almost always traces back to content people genuinely remembered, not content that was simply published on time.

The publishing calendar is the enemy of this because it turns editorial decisions into logistical ones. The question stops being “is this ready, is this good, does this say something worth saying” and becomes “is it Tuesday.” Most pieces go out before they’re done because the schedule says they should. The result is content that exists but doesn’t do anything — not bad enough to embarrass anyone, not good enough to matter.

There’s a floor to this argument. One remarkable piece a quarter isn’t a content strategy. Frequency does matter — just nowhere near as much as the consistency gospel suggests, and the cost of chasing it without the quality to back it up is real and cumulative.

The bar isn’t “did you publish.” It’s “did anyone care.”

Word-of-Mouth Has a Mechanism — Here’s What It Actually Is

At some point “publish consistently” became the default answer to every content growth question. Struggling to get traffic? Be more consistent. Site not growing? You need a content calendar. It’s advice that’s technically defensible and practically useless — because it tells you nothing about what to publish, who it’s for, or whether anyone will care when it arrives.

The sites that get cited as proof that consistency works almost never grew because of the frequency. They grew because they published things people remembered, and they happened to do it often. Frequency was a side effect of having something to say. The retelling reverses the causality, and sites end up optimizing for the schedule instead of the substance.

There’s a cost to this that accumulates quietly. Two years of average-quality publishing creates a backlog: hundreds of pages that are thin enough to dilute your best work, numerous enough to become a maintenance burden, and forgettable enough that returning visitors start to expect mediocrity. That expectation is hard to reverse. Most sites never try. They just keep publishing on Tuesday.

Memorable content operates on a completely different logic. A piece that reframes how someone thinks about a problem — that gets screenshotted, forwarded, linked to without prompting — builds something that consistent mediocrity never does: an audience that comes back by choice, searches for the site by name, and sends other people there without being asked. That behavior is what actually drives website traffic that holds up over time, because it’s generated by genuine reader investment rather than algorithmic circumstance.

The publishing calendar kills this because it converts editorial questions into logistical ones. Is this ready? Does it say something true that isn’t being said elsewhere? Would someone who knows this topic well find it useful? Those questions get replaced by: is it Tuesday?

There’s a floor to the argument — one excellent piece per quarter isn’t a strategy, and frequency does matter at some level. But for most sites that floor is much lower than the treadmill they’ve built, and the gap between “published on schedule” and “worth remembering” is where most traffic potential gets quietly wasted.

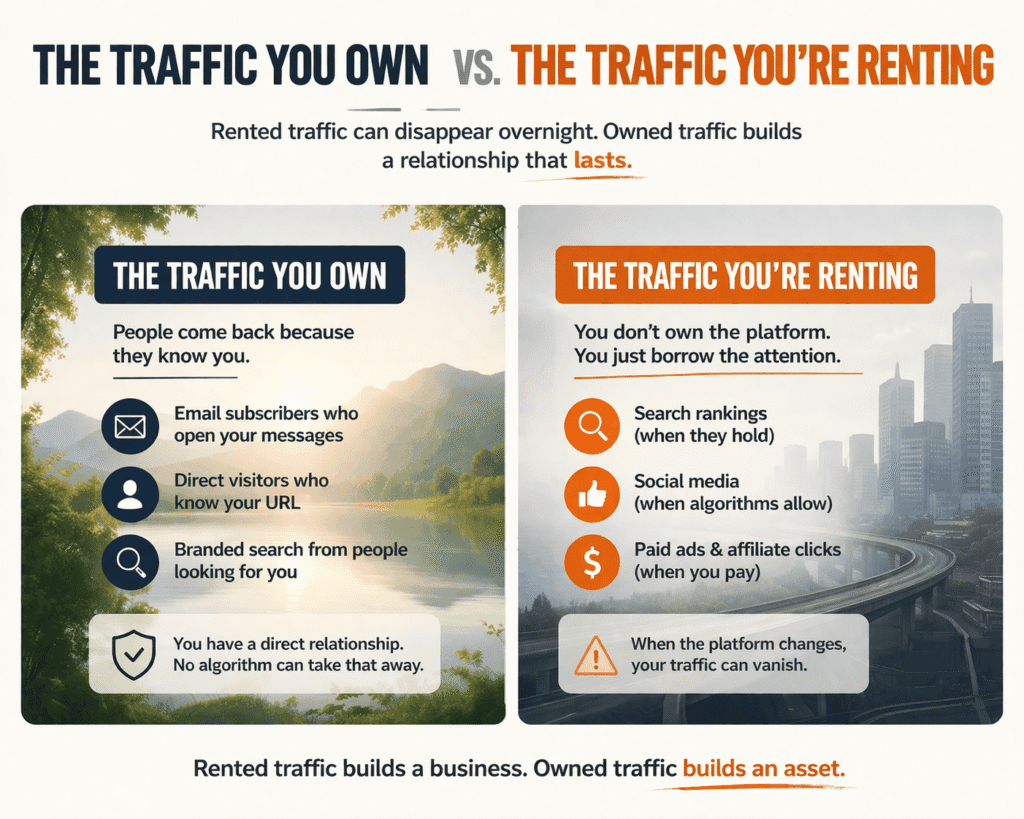

The Traffic You Own vs. The Traffic You’re Renting

Every source of traffic sits somewhere on a spectrum between owned and borrowed. Most sites don’t think about this until something breaks — a ranking drops, a platform changes its algorithm, an ad account gets suspended — and suddenly half their visitors disappear overnight with no obvious path to getting them back.

Rented traffic isn’t bad. It’s just fragile.

Search traffic, social referrals, paid clicks — these all work until they don’t. And the “until they don’t” part is entirely outside your control. Google doesn’t owe anyone rankings. Facebook has been quietly strangling organic reach for a decade. Paid traffic stops the moment the budget does. None of this is a reason to avoid these channels, but it is a reason to be clear-eyed about what you’re building on top of them.

Owned traffic looks different. It’s the person who types your URL directly because they’ve been before and they’re coming back. It’s the email subscriber who opens because they recognize the name. It’s the branded search — someone Googling your site specifically rather than a topic you happen to cover. These are audiences you’ve earned a direct relationship with, and no algorithm update touches them.

The gap between sites that survive major disruptions and sites that don’t usually comes down to how much of their audience they actually own versus how much they were renting without realizing it. In my experience, most sites dramatically overestimate the former. They have traffic, so they assume they have an audience. The two aren’t the same thing.

What actually drives website traffic that survives platform shifts, algorithm updates, and market changes isn’t a smarter keyword strategy or a better backlink profile — it’s the percentage of your audience that would notice if your site disappeared. That number is the real measure of whether you’ve built something or just borrowed it.

Building owned traffic is slower and less measurable in the short term, which is why it gets deprioritized. An email list doesn’t show up in your rankings dashboard. Direct traffic is a small line in Google Analytics that most people ignore. Branded search volume is almost never tracked by sites that should be tracking it obsessively. These are the metrics that tell you whether you’re building something durable or just renting space in someone else’s ecosystem.
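If you want a rough number for that, here’s a minimal sketch of how the owned share could be estimated from a sessions-by-channel export. The channel labels and the figures are made up for illustration, so map them to whatever your own analytics tool calls things. One caveat: "direct" in most analytics tools also absorbs visits with no referrer data, so treat the result as an approximation, not a measurement.

```python
# Hypothetical channel labels; rename to match your own analytics export.
OWNED_CHANNELS = {"direct", "email", "branded_search"}


def owned_share(sessions_by_channel):
    """Return the fraction of sessions that came from owned channels."""
    total = sum(sessions_by_channel.values())
    if total == 0:
        return 0.0
    owned = sum(
        count
        for channel, count in sessions_by_channel.items()
        if channel in OWNED_CHANNELS
    )
    return owned / total


# Made-up monthly numbers, for illustration only.
example = {
    "organic_search": 6000,
    "social": 1500,
    "paid": 500,
    "direct": 1200,
    "email": 600,
    "branded_search": 200,
}

print(f"Owned share: {owned_share(example):.0%}")
```

The single number matters less than the trend. Tracked month over month, it shows whether the owned portion is growing or whether the site is still mostly renting.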

The practical version of this isn’t complicated. Every piece of content, every community interaction, every published opinion is an opportunity to give someone a reason to come back on their own terms — to bookmark the site, subscribe to something, remember the name. Most sites treat these as conversion opportunities for a product or service. The ones thinking longer term treat them as relationship-building moments with a future direct visitor.

There’s a version of this advice that tips into “build an email list” platitude territory, so it’s worth being specific about what owned traffic actually requires: a reason for someone to stay connected. Not a lead magnet, not a discount code — a genuine expectation that what comes next will be worth their attention. That’s a content quality problem as much as a strategy problem, which is why the two can’t really be separated.

Rented traffic builds a business. Owned traffic builds an asset. Most sites need both, but the ratio most sites are running — heavily dependent on search and social with almost no direct relationship with their audience — means they’re one algorithm update away from starting over. That’s not a traffic strategy. That’s a traffic loan with no fixed repayment terms.

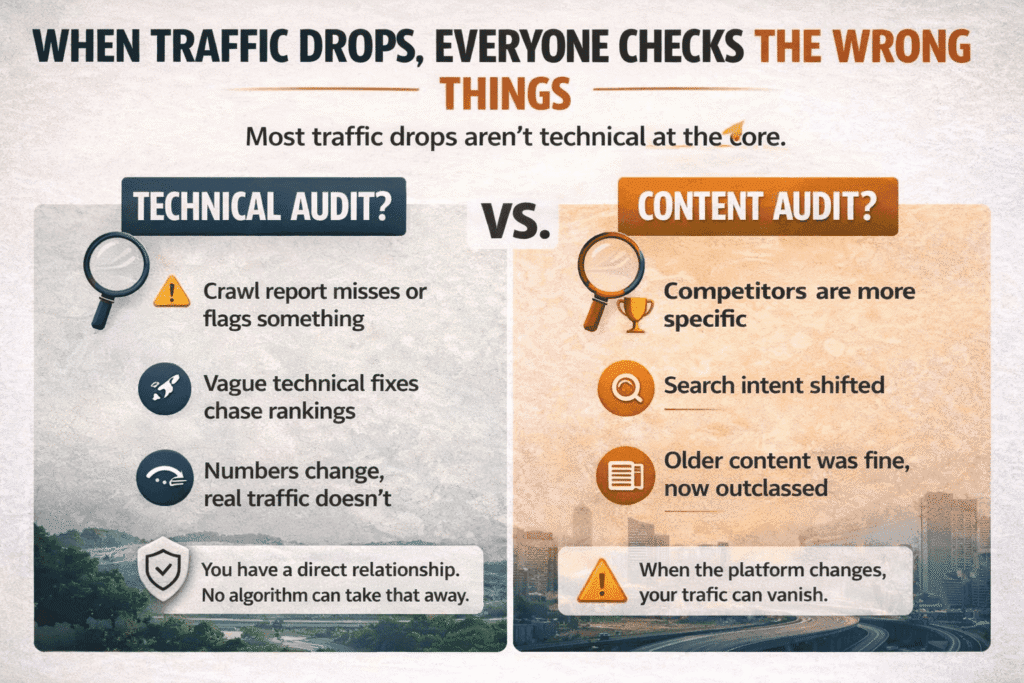

When Traffic Drops, Everyone Checks the Wrong Things

Traffic drops and the first thing most people do is open a dashboard. Which makes sense — dashboards are there, they’re immediate, they produce findings. The crawl report flags something, there’s a task, the task gets done, the traffic doesn’t come back, and now you’re confused because you fixed the thing.

The thing wasn’t the problem.

Technical SEO issues that cause meaningful traffic drops without anyone noticing them in real time are genuinely rare. Google crawls imperfect sites every day. A missing canonical tag on three pages didn’t move the needle. A Core Web Vitals score in the orange didn’t either. These things matter at the margins, and they’re worth maintaining, but they almost never explain a significant drop when you go looking for a culprit after the fact.

What actually happened in most cases is quieter and harder to fix. Something else got better. A competitor rewrote their page and it’s now more thorough, more direct, more specific to what people searching that term actually want. Or the search intent shifted — the people typing that query now want something slightly different than they did eighteen months ago, and the existing content was written for the old version of that question. Or the topic matured and the bar for what counts as a useful answer moved up without the site moving with it.

None of that is findable in Search Console. It requires actually reading the pages that lost traffic — not auditing them, reading them — and asking whether they’re genuinely better than what’s ranking above them now. Not longer. Not more optimized. Better. More honest, more specific, more useful to the actual person who searched that term.

Most of the time they aren’t. Most of the time the content is two or three years old, was decent when it was written, and has been quietly overtaken by sites that kept improving theirs. The traffic drop isn’t an event that happened to the site. It’s the delayed reflection of a quality gap that’s been widening for a while.
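A script can’t do the reading for you, but it can tell you which pages to read first. Here’s a small sketch that ranks pages by clicks lost between two periods. It assumes you’ve already exported clicks per page for each period into plain dictionaries. The page paths and numbers below are invented.

```python
def biggest_losers(before, after, top_n=5):
    """Rank pages by clicks lost between two periods, largest loss first.

    `before` and `after` map a page path to its click count in each period.
    Pages that held steady or gained clicks are left out.
    """
    losses = []
    for page, old_clicks in before.items():
        new_clicks = after.get(page, 0)  # a page missing from `after` got no clicks
        lost = old_clicks - new_clicks
        if lost > 0:
            losses.append((page, old_clicks, new_clicks, lost))
    losses.sort(key=lambda row: row[3], reverse=True)
    return losses[:top_n]


# Invented numbers, for illustration only.
before = {"/guide-a": 900, "/guide-b": 400, "/guide-c": 150}
after = {"/guide-a": 500, "/guide-b": 420, "/guide-c": 90}

for page, old, new, lost in biggest_losers(before, after):
    print(f"{page}: {old} -> {new} (lost {lost})")
```

The output is a reading list, not a diagnosis. The next step is still opening each page next to whatever now ranks above it.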

The sites that recover fastest from drops tend to skip the technical audit phase almost entirely — or run it quickly, find nothing, and move on. They go straight to the harder question: why would someone choose this over what’s ranking above it right now? If there’s no good answer, that’s the work. Everything else is procrastination with a spreadsheet.

There’s real irony in how the SEO industry has trained site owners to respond to drops. The tooling is sophisticated, the checklists are thorough, and the process feels rigorous. It’s also a very effective way to spend three weeks fixing things that weren’t broken while the actual problem — content that’s no longer the best answer to anything specific — goes unaddressed.

The audit finds what’s broken. It doesn’t find what’s been quietly surpassed. Those are different problems, and only one of them shows up in a report.

Having a Reason to Exist Is a Traffic Strategy

Ask most site owners why their site exists and they’ll describe what it covers. That’s not the same answer.

A site that covers personal finance for millennials covers a topic. A site that exists because its founder is tired of financial advice that assumes you have money to start with — that has a reason. The difference sounds subtle and it isn’t. One produces content that competes on keywords. The other produces content that resonates with a specific reader who feels like it was written for them, because it was.

Most sites are built without that second thing. The content strategy comes first, the audience gets inferred from keyword research, and the “why” gets written into an about page that nobody reads. What’s left is a site that’s technically functional and strategically indistinguishable from the other thirty sites covering the same ground the same way.

The traffic consequences of this are slow and easy to misread. In the early stages, SEO can compensate — good optimization, reasonable domain authority, consistent publishing. The site gets traffic without having earned an audience. Then something shifts. A competitor gets more specific. An algorithm update rewards depth and penalizes breadth. The traffic that was rented comes due, and there’s no owned audience underneath it to cushion the fall.

Committing to a reason to exist means making choices that feel like losses. A narrower audience. A more specific angle. Opinions that some readers will disagree with. These aren’t bugs in the strategy — they’re the strategy. A site that repels the wrong readers is one that’s specific enough to matter to the right ones. Most sites never find out what that feels like because they optimize for reach before they’ve built anything worth reaching people with.

There’s something worth naming about why this is hard to sustain even when people understand it. The pressure to broaden always comes back. Growth slows and the instinct is to cover more, go wider, add categories. Every one of those decisions trades specificity for volume, and the trade feels rational in the moment and damaging in hindsight. The sites that hold their lane — that stay specific even when it’s uncomfortable — are the ones still growing five years later while the ones that broadened are wondering why their traffic plateaued.

I’ve watched sites with genuinely good content struggle for years because they couldn’t answer the question of who specifically they were for. And I’ve watched much thinner sites grow steadily because they could answer it in one sentence and every piece of content reflected that answer. The second type wasn’t better at SEO. It was better at existing for a reason.

That turns out to matter more than most traffic advice suggests it does.

Knowing which tools actually fit your workflow matters more than chasing the most popular ones — and choosing the right marketing tools for your business starts with understanding what problem you’re actually trying to solve.

Budget shapes strategy whether you plan for it or not, and the debate between free vs paid marketing tools is less about cost and more about what stage of growth you’re actually in.

Disclaimer: This article may contain affiliate links. If you make a purchase through these links, I may earn a commission at no extra cost to you. All opinions are based on hands-on testing and independent research.