SaaS spend doesn’t spiral because of bad decisions. It spirals because of a hundred small, individually reasonable decisions that nobody was ever in a position to see all at once. A SaaS stack audit is how you finally see them together — and what you do with that view is where it gets interesting.


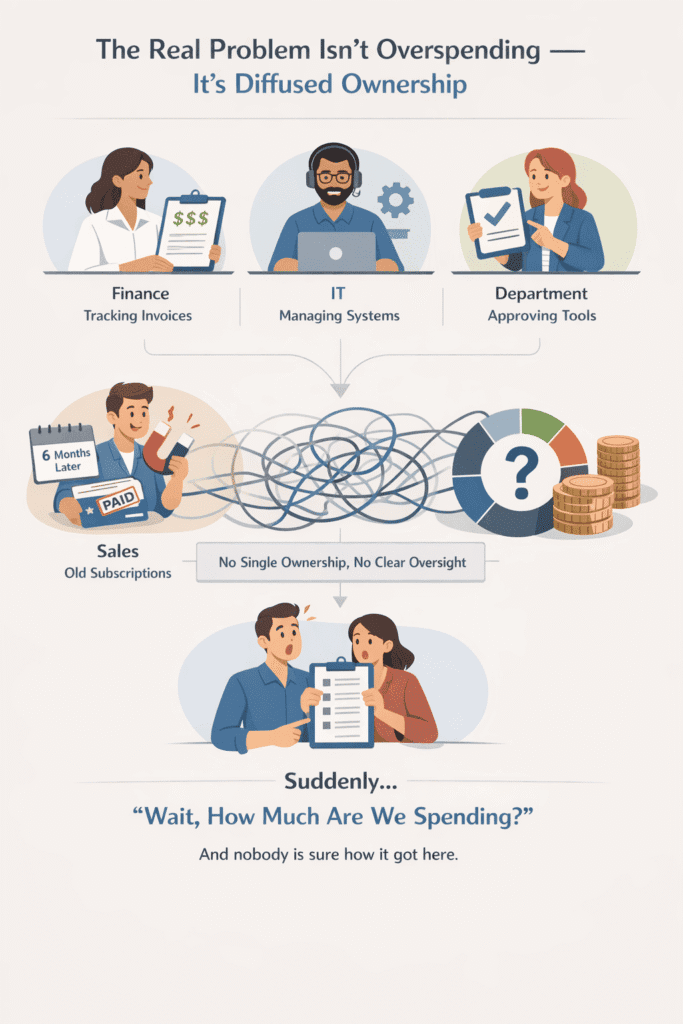

The Real Problem Isn’t Overspending — It’s Diffused Ownership

Nobody is trying to waste money. That’s the thing that gets missed when these conversations happen.

Finance is tracking invoices. IT is managing what they know about. Department heads are approving tools their teams ask for. Someone in sales signed up for a prospecting tool on a free trial six months ago, it converted to paid, and it’s been quietly billing ever since. None of these people did anything obviously wrong. They were each handling their piece. The problem is that handling your piece and owning the whole thing are completely different jobs — and in most companies, nobody has the second job. This kind of fragmentation is often described as software sprawl in organizations, a pattern widely observed by Gartner.

What this produces isn’t reckless spending. It’s something more mundane and harder to fix: a stack that grew through a thousand individually reasonable decisions that nobody was ever in a position to see all at once.

In my experience, the moment this becomes visible is always a little uncomfortable. Someone pulls a consolidated number — usually for the first time, usually because something external forced it, a budget freeze, a new CFO, a bad quarter — and the figure is higher than anyone expected. Not catastrophically higher. Just higher than it should be for what the company is actually getting. And then comes the harder realization: nobody is quite sure how it got there.

The instinct at that point is to open a spreadsheet. Start listing tools, find the ones nobody uses, cancel them. Which is fine, as far as it goes. But it treats the output without touching the input. The stack didn’t bloat because of the tools. It bloated because there was no structure around how tools enter the stack in the first place — no single person with both the visibility and the authority to slow that process down. This is exactly why a proper SaaS stack audit so often surprises people: it’s not just a cost exercise. It’s the first time anyone has looked at the whole thing together.

That last part matters more than people admit. Plenty of companies have someone nominally “responsible” for SaaS spend. But responsible for tracking it is not the same as empowered to control it. If your SaaS owner can see everything and change nothing, you don’t have an owner. You have a very well-informed bystander.

The SaaS stack audit has to start here. Not with the spreadsheet — with the question of who can actually make binding decisions about the stack. If that answer is murky, or if it splinters across three departments depending on which tool you’re talking about, that’s the finding. Everything else is downstream of it. If your stack feels messy, it’s often tied to a deeper issue — not just tools, but how decisions get made. This is the same pattern we see when analyzing what actually drives website traffic.

Most SaaS stacks don’t grow because of strategy — they grow because tools feel like progress. It’s the same trap behind the broader debate around content vs tools in digital marketing.

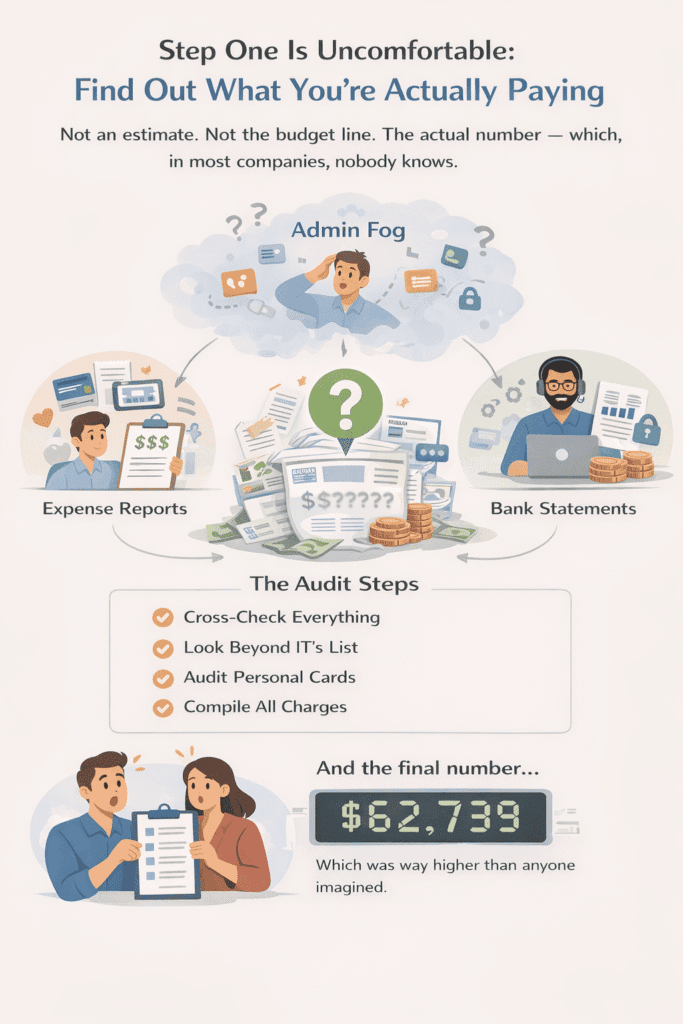

Step One Is Uncomfortable: Find Out What You’re Actually Paying

Not an estimate. Not the budget line. The actual number — which, in most companies, nobody knows.

That’s not an exaggeration. It’s genuinely rare to find an organization that can tell you, with confidence, what it spends on software each month across every team, every card, and every payment method. What most companies have instead is a partial picture: the tools that went through formal procurement, the invoices finance happens to see, the licenses IT manages. Everything else exists in a kind of administrative fog.

Getting out of that fog is the first job of any SaaS stack audit, and it’s more tedious than it sounds. You’re not just pulling a report. You’re cross-referencing bank statements against expense reports against SSO logs against what department heads tell you — and those four sources will not agree with each other. That gap between them is where the money is. What sits in that gap is often linked to hidden SaaS costs and shadow IT, a problem frequently highlighted by IBM in enterprise environments.

What you’ll find in that gap, almost every time: subscriptions on personal cards being expensed monthly that never made it into any centralized system. Annual contracts that renewed quietly without triggering a review because they only hit the books once a year. Free trials that converted to paid when nobody was watching and have been billing on a card ever since. Tools set up by people who left the company six months ago, still running, sometimes still used by the team on inherited credentials, sometimes just running.
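To make the cross-referencing concrete, here is a minimal sketch. It assumes you have already reduced each source to a plain set of tool names. The tool names, the source labels, and the `reconcile` helper are all hypothetical placeholders, not part of any real audit product.

```python
def reconcile(sources: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each tool to the sorted list of sources it is missing from.

    Tools that appear in every source are left out of the result.
    """
    all_tools = set().union(*sources.values())
    gaps = {}
    for tool in sorted(all_tools):
        missing = sorted(name for name, tools in sources.items() if tool not in tools)
        if missing:
            gaps[tool] = missing
    return gaps


# Hypothetical, hand-made inventory; every name here is illustrative.
sources = {
    "bank_statements": {"crm", "prospecting", "design"},
    "expense_reports": {"prospecting", "notes"},
    "sso_logs": {"crm", "design"},
    "department_heads": {"crm", "design", "notes"},
}

for tool, missing in reconcile(sources).items():
    print(f"{tool}: not seen in {', '.join(missing)}")
```

A tool that shows up in payments but not in SSO logs or in anyone's description of their stack is exactly the kind of entry worth chasing down first. In practice the hard part is normalizing names across sources, which this sketch deliberately skips.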

The annual renewals are the most consistently underestimated. A tool that bills monthly shows up every month — someone notices it eventually. A tool on an annual contract disappears from attention for eleven months, renews, and the moment passes before anyone thinks to ask whether it should have. Do that across five or six tools over two or three years and you have a meaningful slice of budget that’s essentially on autopilot — spending that continues not because anyone decided it should, but because nobody decided it shouldn’t.

What makes this step uncomfortable isn’t the tedium. It’s what the process reveals about how little structured oversight existed before you started. Finding a tool nobody remembers approving isn’t an anomaly — in most audits, it happens repeatedly. And each time it does, it’s a small reminder that the stack has been making its own decisions for a while.

One practical note: don’t try to evaluate tools while you’re finding them. The temptation to start making cut decisions mid-inventory is strong, especially when something obviously redundant surfaces early. Resist it. An audit that runs discovery and judgment at the same time tends to produce sloppy conclusions. You can’t make clean decisions about a stack you don’t fully see yet. Get the complete picture first, then decide.

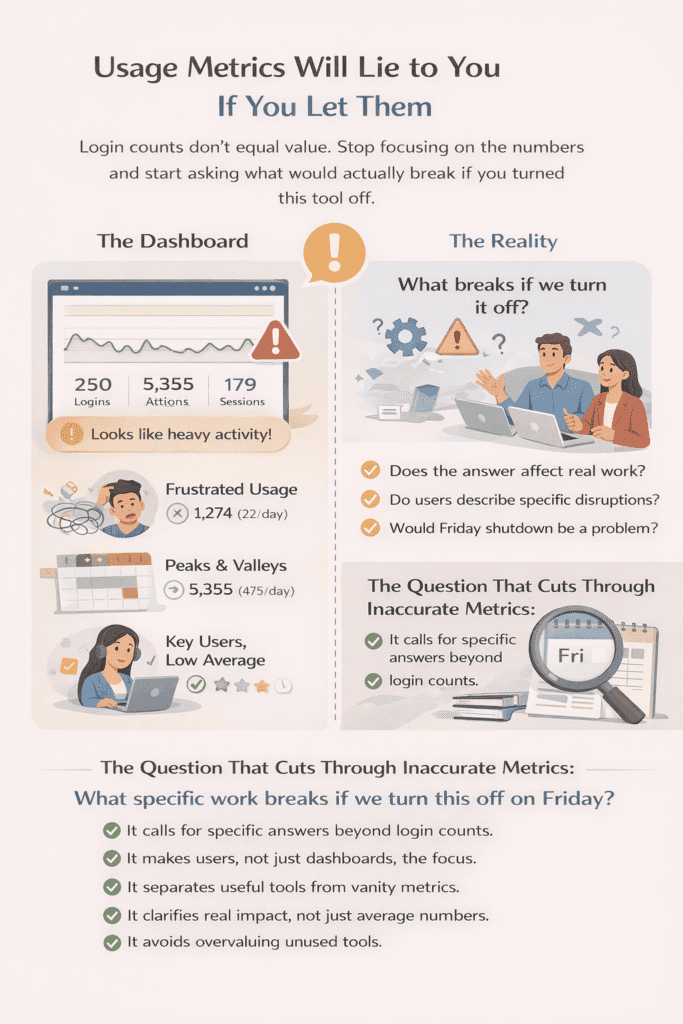

Usage Metrics Will Lie to You If You Let Them

Login frequency feels like the obvious thing to look at. It’s quantifiable, it’s available, and it seems to remove the politics from the decision. That’s exactly why it’s dangerous.

The problem isn’t that usage data is wrong. It’s that it measures the wrong thing for most of the tools that actually matter. Tools that are genuinely embedded in how work gets done tend to have strange usage patterns: opened in bursts, used heavily for one week out of six, critical to two people and invisible to everyone else. Tools that are actively harmful to productivity, badly configured, or just poorly adopted can show high login numbers because people are logging in to fight with them. The metric doesn’t distinguish between those two situations, but you have to. This is a classic example of confusing vanity metrics with actionable metrics, something extensively discussed by HubSpot.

What usage data is actually good for is the easy end of the spectrum — the tools with genuinely no activity, the ones that drifted out of workflows so gradually that nobody noticed until the audit surfaced them. That signal is reliable and you should trust it. Three logins in ninety days across a thirty-person team is a finding. Act on it. Everything in the middle is where it gets complicated.

A tool used heavily by two people and barely touched by twenty looks like poor adoption in the aggregate numbers. But if those two people run a function that depends on it, the aggregate number is actively misleading you. And this happens more than people expect, because most usage dashboards are built to show company-wide metrics, not role-specific ones. You’re seeing an average that flattens the one use case that actually matters.
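The flattening effect is easy to show with made-up numbers. The sketch below assumes a hypothetical 90-day login export for a single tool, with two heavy users on one team and twenty people who barely touch it. The user names, team labels, and counts are invented for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical 90-day login counts for one tool: (user, team, logins).
logins = [
    ("ana", "finance_ops", 61),
    ("raj", "finance_ops", 58),
] + [(f"user{i}", "general", 1) for i in range(20)]


def company_average(rows):
    """Average logins per user across everyone with a seat."""
    return mean(count for _, _, count in rows)


def average_by_team(rows):
    """Average logins per user, split out by team."""
    by_team = defaultdict(list)
    for _, team, count in rows:
        by_team[team].append(count)
    return {team: mean(counts) for team, counts in by_team.items()}


print(round(company_average(logins), 2))
print(average_by_team(logins))
```

The company-wide figure lands at roughly six logins per user, which reads as weak adoption. The per-team split shows one team averaging about sixty, which is a different story. Neither number tells you whether the tool is worth keeping, only where to ask.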

The other thing nobody mentions: most usage data comes from the vendor — which means the definition of “active user” was written by the people who benefit from that number being high. In some platforms, clicking through an automated email notification counts. Opening the app to check a notification and closing it counts. You’re often not measuring whether the tool is valuable — you’re measuring whether people have encountered it recently, which is not the same thing at all.

The question that actually cuts through all of this is brutal in its simplicity: what breaks if we turn this off on Friday? Not “is it used” but what specifically stops working. Put that question to the actual users, not their managers. Managers will often say “yes we use it” because they approved the purchase and cancelling it feels like admitting a mistake. The person doing the work either has a specific answer or they don’t. The specificity of the answer is the data point.

This is one of the places where a SaaS stack audit either produces real insight or just generates a tidy spreadsheet that nobody fully trusts. The difference is whether you treat usage metrics as a starting point for the right conversations, or as a substitute for having them. The numbers can tell you where to look. They can’t tell you what you’re actually looking at. This is the same mistake many teams make when they prioritize tools over outcomes — a pattern explored in content vs tools in digital marketing.

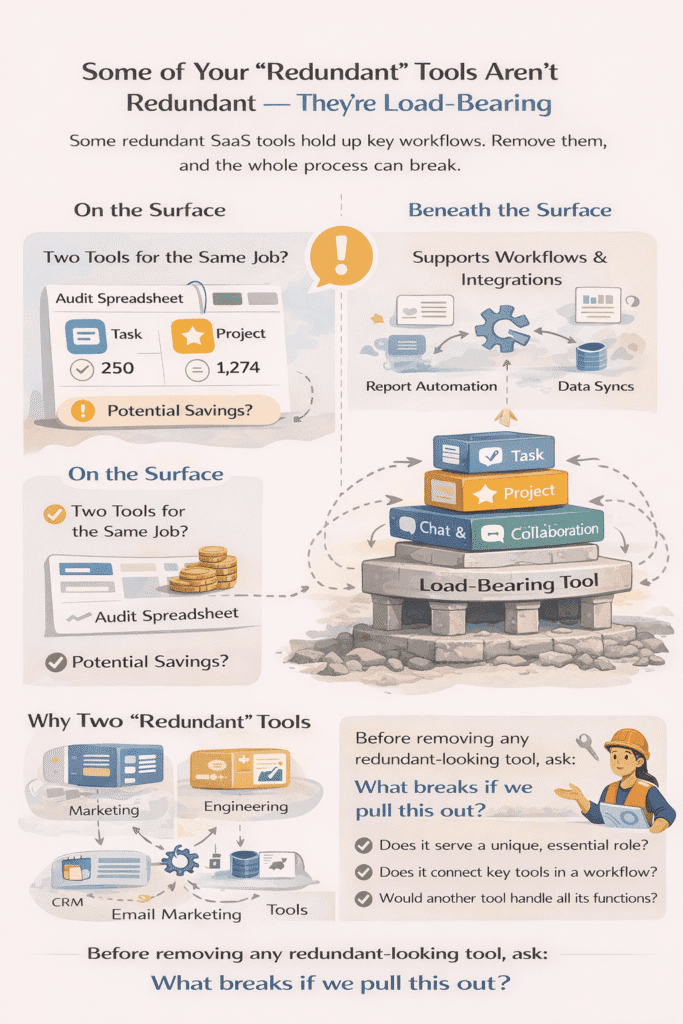

Some of Your “Redundant” Tools Aren’t Redundant — They’re Load-Bearing

Every stack has them. Two tools that appear to do the same thing, sitting side by side on the audit spreadsheet, looking like an obvious consolidation opportunity. Sometimes they are. Sometimes pulling one out brings down something you didn’t know was resting on it.

The redundancy assumption is seductive because it feels logical. Two project management tools, two file storage solutions, two tools with overlapping communication features — the instinct is to pick one and eliminate the other. And in a clean, rationally designed stack, that would be the right call. But stacks aren’t designed rationally. They accumulate. And in the process of accumulating, tools develop dependencies, workarounds, and integrations that aren’t visible from the outside and aren’t documented anywhere because nobody ever thought they’d need to explain them.

The classic version of this is when two teams independently adopted different tools for what looks like the same job, but are actually using them for different reasons. Marketing is using one project management platform because it handles campaign briefs and creative approvals in a way that fits their workflow. Engineering is using a different one because it integrates with their deployment pipeline in a way the first one doesn’t. On a spreadsheet, that looks like redundancy. In practice, consolidating them means one team loses something they genuinely need — and the solution is either a messy migration or a tool that half the company uses badly.

What makes this harder to spot is that the people closest to these tools often can’t articulate why they need them. Not because they don’t have reasons — because the reasons are embedded in muscle memory and daily habit rather than explicit logic. Ask someone why they use a particular tool and they’ll describe the surface features. Ask them what they’d do differently if the tool disappeared and you start getting closer to the actual answer. Those are different questions and they produce very different information.

There’s also a category of tool that’s load-bearing in a less obvious way — not because of what it does directly, but because of what it connects. A middleware tool, an integration layer, something that sits between two other systems and makes them talk to each other. These are the most dangerous ones to remove because their value is almost entirely invisible during normal operations. The tool doesn’t do anything you’d notice day to day. It just quietly ensures that data moves from one place to another, or that an automation runs, or that a report populates correctly. Until it doesn’t.

In my experience, the integrations question is the one most SaaS stack audits skip — because it requires talking to people who understand the technical architecture rather than just the business workflows, and those conversations take longer and feel less productive in the moment. But skipping them is how you end up cancelling a $15/month tool that was holding together a process that cost three days of engineering time to rebuild.

The practical test here is slower and less satisfying than a usage report, but it’s the right one: before removing anything that looks redundant, map what it connects to. Not just what it does in isolation — what depends on it. Other tools, other processes, other teams. If that map is empty or nearly empty, the redundancy is probably real. If it turns out the tool is sitting in the middle of three workflows nobody thought to mention, you’ve found a load-bearing wall dressed up as a spare room.
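One way to keep that map honest is to write it down as a list of "this depends on that" pairs and walk it. This is a minimal sketch with invented tool and process names; the `dependents` helper is hypothetical, and a real map would come from interviews and integration settings rather than a hard-coded list.

```python
# Hypothetical inventory: each pair reads "consumer depends on provider".
depends_on = [
    ("weekly_revenue_report", "sync_connector"),
    ("board_deck", "weekly_revenue_report"),
    ("crm", "sync_connector"),
    ("invoice_automation", "sync_connector"),
    ("team_wiki", "file_storage_b"),
]


def dependents(tool, edges):
    """Return everything that directly or indirectly depends on `tool`."""
    found = set()
    frontier = [tool]
    while frontier:
        current = frontier.pop()
        for consumer, provider in edges:
            if provider == current and consumer not in found:
                found.add(consumer)
                frontier.append(consumer)
    found.discard(tool)  # ignore the tool itself if the map contains a cycle
    return found


print(sorted(dependents("sync_connector", depends_on)))
print(sorted(dependents("file_storage_a", depends_on)))
```

An empty result, as with the made-up `file_storage_a` here, suggests the redundancy is probably real. A result with several entries, including things one step removed like the board deck, is the load-bearing case.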

Consolidation is still often the right answer. But the decision should come from understanding the architecture, not from pattern-matching on feature overlap. Two tools that look identical from the outside can be doing completely different structural jobs on the inside. The audit has to go deep enough to see the difference. This is where most “best tools” lists fall short — they compare features, not how tools actually function inside a real workflow.

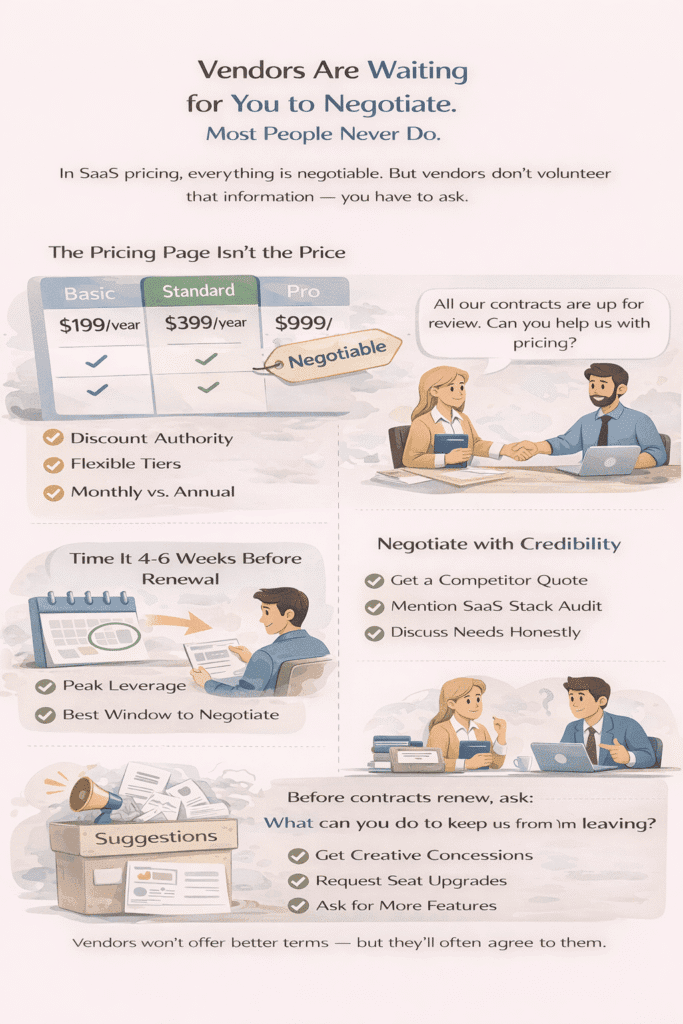

Vendors Are Waiting for You to Negotiate. Most People Never Do.

The number on the pricing page is not the price. It’s the price for people who don’t ask.

Most SaaS vendors have discount authority baked into their account teams. They have flexibility on seats, on tiers, on annual versus monthly, sometimes on the base rate itself. This isn’t a secret — it’s just not advertised, for obvious reasons. And the gap between what companies pay and what they could pay if they simply started a conversation is, across a mid-size company’s full stack, often significant enough to be embarrassing in retrospect.

The psychological barrier is real, though. Published pricing feels official. A clean pricing page with three tiers and a toggle for annual billing doesn’t look like the opening of a negotiation. It looks like a menu. And so people order from the menu, auto-renew from the menu, and three years later have paid a meaningful premium over what a five-minute conversation might have produced.

Timing is the thing most people get wrong when they do try. Negotiating after renewal is essentially pointless — you’re already locked in and the vendor knows it. The window is the four to six weeks before the contract renews, when the account team’s anxiety about losing you is at its highest and they still have time to process a concession internally. Reach out during that window and the conversation starts from a different place than it does when you’re calling from a position of having already signed.
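If renewal dates live in a spreadsheet, the window is simple date arithmetic. The sketch below reads "four to six weeks before" as a window that opens six weeks out and closes four weeks out, which is one interpretation and not a rule. The `negotiation_window` helper and the example date are hypothetical.

```python
from datetime import date, timedelta


def negotiation_window(renewal: date, open_weeks: int = 6, close_weeks: int = 4):
    """Return (start, end) of the outreach window, counted back from renewal."""
    start = renewal - timedelta(weeks=open_weeks)
    end = renewal - timedelta(weeks=close_weeks)
    return start, end


# Hypothetical contract renewing on 1 March 2025.
start, end = negotiation_window(date(2025, 3, 1))
print(f"Reach out between {start} and {end}")
```

Putting those two dates on a calendar for every annual contract is a cheap way to stop renewals from passing unnoticed.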

What actually moves vendors, in my experience, is not aggression — it’s credibility. A competitor quote helps less because of the implicit threat and more because it demonstrates you’ve done the work. You know what alternatives cost. You’re not just complaining about price in the abstract. Similarly, flagging that you’re mid-way through a SaaS stack audit and reviewing all contracts is genuinely effective — not as a tactic, but because it’s true and it reframes the conversation from “this customer wants a discount” to “this customer is making a structured decision and needs a reason to stay.” Those land differently on the other end of the call.

The threat to cancel is worth addressing directly because it’s the move people default to and it’s the one that works least reliably. If your usage is deep, your integrations are real, and you’ve been a customer for two years, nobody believes you’re leaving over 15%. They might give you something anyway just to close the conversation, but they know and you know the threat isn’t fully credible. The more honest version — “we want to stay, we’re committed to this tool, we need the pricing to reflect that” — is less dramatic and more effective, which feels counterintuitive until you’ve actually sat through enough of these conversations to see the pattern.

One thing that genuinely surprises people: price is often not the most available lever. Vendors who won’t touch the base rate will sometimes add seats, upgrade your tier, extend payment terms, or throw in features from a higher plan without making it feel like a negotiation at all. The ask doesn’t have to be a percentage. Sometimes “what can you do for us at renewal” is enough to open a conversation that produces something real — because the account team was waiting for someone to ask.

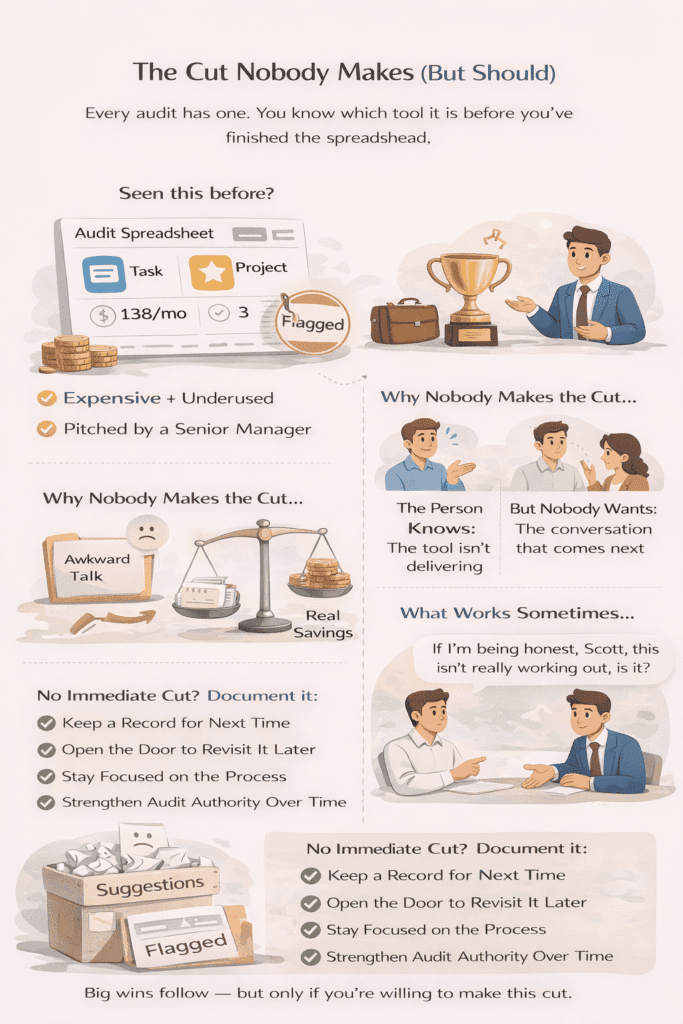

The Cut Nobody Makes (But Should)

Every audit has one. You know which tool it is before you’ve finished the spreadsheet.

It’s not the most expensive line item. It’s not the one with the worst usage numbers. It’s the one a senior person championed, got budget for, announced with some fanfare, and that never quite delivered what was promised — but also never failed visibly enough to give anyone an opening to say so. It just settled into the stack like furniture. Expensive furniture nobody sits on.

The unspoken calculation that keeps it there is pretty simple: the saving is real but finite. The awkwardness of the conversation is also real, and it doesn’t feel finite. So the tool stays. The audit closes with a slightly disappointing savings number, and everyone moves on having privately agreed not to examine why.

What I find interesting about this pattern — and I’ve seen it often enough that it feels like a pattern rather than a coincidence — is that the person who bought the tool usually knows. They’ve watched the adoption numbers. They’ve noticed the team working around it rather than with it. They’re not being protected from information. They’re being protected from having to act on information they already have, in front of people who were there when they made the original call. That’s a different problem, and it doesn’t have a spreadsheet solution.

The approaches that don’t work: building an airtight data case and presenting it formally, which turns the meeting into a tribunal nobody asked for. Going around the person to someone more senior, which creates a different political problem than the one you were trying to solve. Waiting for the tool to fail loudly enough that the decision makes itself, which can take years and costs money the whole time.

What sometimes works — and I say sometimes deliberately, because this isn’t a reliable formula — is finding a moment of genuine one-on-one honesty before it becomes a group conversation. Not a presentation. Not a recommendation. Just a direct question: “What’s your honest read on how this has landed?” Most people, asked that way, in private, will tell you the truth. And when the person who championed the tool is also the person who surfaces the case for reconsidering it, the organizational dynamics shift completely. It’s no longer a verdict. It’s a pivot.

The other thing worth saying is that this cut sometimes just doesn’t happen on the first SaaS stack audit. The timing isn’t right, the relationship isn’t there, something else is going on that makes this the wrong moment. That’s a real outcome, not a failure. Document it. Make sure the next review starts with the tool already flagged rather than having to rebuild the case from zero. The cut that should happen in Q1 and happens in Q3 is still a cut.

But the ones that never happen — because every audit finds a reason why this particular moment isn’t quite right — those are worth being honest about too. Sometimes tools don’t get cut because the organization isn’t ready. And sometimes they don’t get cut because the audit never really had the authority to make the hard calls in the first place. Those are different problems, and conflating them is how the furniture stays. This is less about tools and more about decision-making — something that shows up across almost every marketing mistake.

An Audit Is a Snapshot. Bloat Is a Behavior.

Here’s the thing nobody wants to say at the end of one of these: the stack will grow back.

Not immediately. Not all at once. But the same conditions that produced the bloat in the first place — distributed purchasing, no central visibility, individual teams making individually reasonable decisions — those conditions don’t disappear because you ran an audit. The audit cleared the backlog. The intake process is still the same. And backlogs rebuild.

Most companies that do this seriously are back to a similar spend level within eighteen months. Sometimes faster. Not because the audit failed, and not because the people involved were careless — but because an audit is a one-time act of attention in a system that needs ongoing attention. Doing it once and expecting the results to hold is like cleaning out a closet and expecting it to stay that way without changing anything about how clothes enter the house.

The structural fix is genuinely simple, which is partly why it doesn’t happen. Someone needs to own the stack with real authority: not track it, not report on it, own it. See new tool requests before they become subscriptions. Have the standing to slow things down without it becoming a negotiation. That’s it. One person, clear mandate, actual power. Most companies instead build a committee, or a shared doc, or a Slack channel called #saas-tools that starts active and goes quiet within a month.

There’s an honest admission buried in all of this: a SaaS stack audit is most valuable not for what it saves, but for what it reveals about how decisions get made. The spend number is a symptom. The ownership vacuum, the auto-renewals nobody caught, the politically protected tool that survived three reviews — those are the findings that matter. Fix the spend without addressing those and you’ve done the easy part twice.

It’s worth saying that some re-bloat is fine. Companies grow. Needs change. The point was never to achieve some permanent minimalist stack; it was to make the growth intentional rather than accidental. There’s a version of a larger, more expensive stack that’s completely justified because someone looked at it and decided it should be that way. That’s different from the version where it got there because nobody was watching.

The audit is how you find out which version you inherited. Everything after it is about which version you choose.