Most articles about digital marketing mistakes focus on the obvious ones. The ad spend that went to the wrong audience. The campaign that missed the brief. The rebrand nobody asked for. Those mistakes are expensive, but they’re also visible — you see them, you fix them, you move on.

The ones worth worrying about are quieter than that.

They’re the decisions that made complete sense when they were made. The strategies that looked like they were working right up until the month they visibly weren’t. The assumptions so fundamental to the plan that nobody thought to question them.

These are the digital marketing mistakes that waste time at a scale that budget losses don’t capture — not a bad quarter, but six months of momentum that didn’t compound, an audience that drifted while nobody was watching, a direction that got changed just before it would have started working.

That’s what this article is actually about. Not a checklist of things to avoid, but an honest look at the patterns that cost marketing teams months — sometimes entire strategic cycles — without ever announcing themselves as problems while they’re happening.

Some of it will be uncomfortable to read. Most of it will probably be familiar.

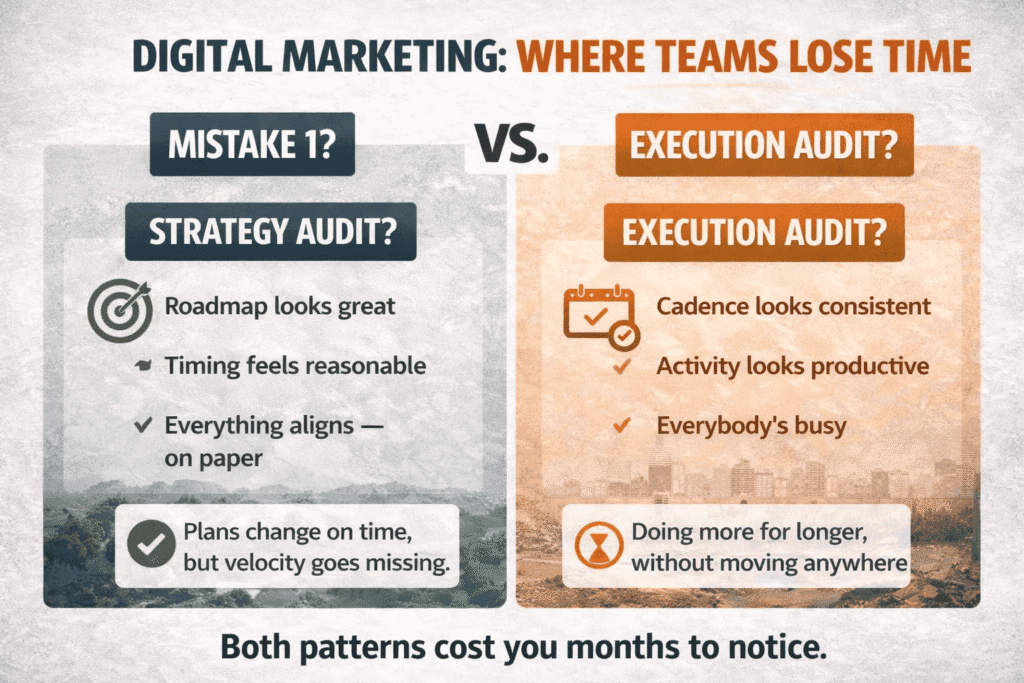

The Six-Month Delay Problem

Nobody panics in month one.

That’s the part nobody talks about when they discuss digital marketing mistakes that waste time — the long, comfortable silence before anything visibly breaks. You make a call. You shift budget, deprioritize a channel, commit to a content cadence that feels productive. Life continues. Reports go out. The numbers don’t scream at you. So you assume you’re fine.

And then it’s month six, and the pipeline looks like a drought, and everyone is staring at the current month’s activity trying to figure out what went wrong now — when the actual decision that caused it was made half a year ago, buried under everything that came after.

This is what makes it different from a bad campaign. A bad campaign is almost a gift — you see it fail fast, you stop spending, you move on. The six-month delay is quieter and meaner. It lets you keep working, keep reporting, keep feeling like things are moving, while the real damage accumulates somewhere just outside your line of sight.

What tends to fill that gap is activity. Content goes out. Emails get sent. Someone redesigns the newsletter template. And because the team is visibly doing things, there’s no moment where anyone stops and says something is wrong. The busyness itself becomes a kind of reassurance.

I think about it like a slow puncture. You drive on it for weeks. The car feels mostly fine. Then one day you’re on the side of the road wondering when it happened, and the honest answer is: gradually, and then all at once.

The thing most teams get wrong in retrospect isn’t the decision — it’s that they can’t find it anymore. By month six, there have been twelve other decisions layered on top. The thing you’re looking for isn’t in this month’s data. It’s in a strategy doc from last spring that nobody has opened since.

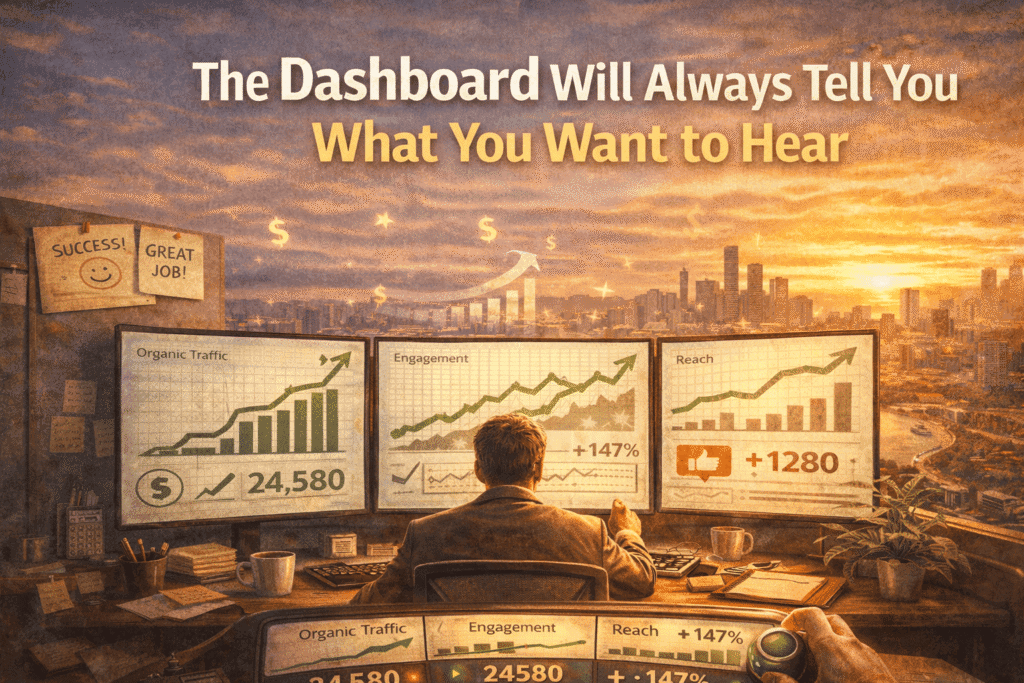

The Dashboard Will Always Tell You What You Want to Hear

At some point, someone on your team built a dashboard. They chose what to put in it. And from that day forward, those metrics became the definition of success, not because anyone decided that explicitly, but because what gets measured gets reported, and what gets reported becomes the thing everyone optimizes for. It’s a pattern that research on confirmation bias in decision-making documented across industries long before it became a marketing problem.

That choice, made once, often quietly shapes everything that follows.

The part that’s hard to admit is that the metrics you track weren’t chosen neutrally. They were chosen by people who already had a theory about what was working. Traffic was tracked because someone believed content was driving growth. Engagement was tracked because someone argued social was building brand awareness. The dashboard didn’t reveal the truth; it institutionalized a hypothesis. And the tools feeding that dashboard matter more than most teams realize. Whether you’re working with free or paid marketing tools, the metrics each one surfaces will quietly shape what you pay attention to.

What I’ve seen happen — more than once, in more than one type of organization — is that teams get genuinely skilled at producing the metric they chose. Organic traffic climbs. Email open rates improve. Social following grows steadily. And everyone involved feels, reasonably, like they’re doing their jobs well.

The question nobody asks out loud is whether any of it is connected to the thing the business actually needs. Not because they’re avoiding it on purpose, but because the dashboard doesn’t ask it. And if the dashboard doesn’t ask it, the Monday morning meeting doesn’t ask it either.

This is where some of the most costly digital marketing mistakes that waste time hide — not in failed campaigns or poor execution, but in the quiet acceptance of a measurement system that was never designed to challenge itself.

This is different from the vanity metrics conversation you’ve probably heard before. That conversation is usually about swapping shallow metrics for deeper ones — impressions for conversions, followers for leads. Useful, but it doesn’t go far enough. The real issue isn’t which metrics you picked. It’s that any set of metrics, once chosen, starts to constrain how you see the problem. You stop noticing what isn’t being measured. And the things that aren’t being measured are often the things that would change your strategy entirely if you could see them.

There’s a question worth asking about any marketing strategy, and almost nobody asks it: what would the data need to show for us to conclude this isn’t working? Not as a hypothetical — as an actual, specific answer. If you can’t answer it, you don’t have a measurement strategy. You have a confirmation system. The numbers will keep coming in, the reports will keep going out, and the story will keep making sense right up until the moment it doesn’t.

By which point, you’ll be in month six wondering what happened.
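One way to make the "what would the data need to show" question concrete is to write the answer down as something a script could check. The sketch below is only an illustration under my own assumptions: the `FailureCriterion` name, the `qualified_leads` metric, the threshold of 40, and the three-month window are all hypothetical, not a recommended standard.

```python
from dataclasses import dataclass


@dataclass
class FailureCriterion:
    """A pre-agreed answer to: what would the data need to show?"""
    metric: str        # hypothetical metric name, e.g. "qualified_leads"
    threshold: float   # value the metric must stay above
    months_below: int  # consecutive months under threshold that mean "not working"


def is_not_working(criterion, monthly_values):
    """Return True if the latest `months_below` values are all under the threshold."""
    recent = monthly_values[-criterion.months_below:]
    if len(recent) < criterion.months_below:
        return False  # not enough history yet to conclude anything
    return all(value < criterion.threshold for value in recent)


# Hypothetical example: agreed on before the strategy started, not after.
criterion = FailureCriterion(metric="qualified_leads", threshold=40, months_below=3)
print(is_not_working(criterion, [55, 48, 39, 35, 31]))  # three straight months under 40
print(is_not_working(criterion, [55, 48, 39, 42, 31]))  # one recovery month breaks the run
```

The point is less the code than the order of operations: the threshold and the window get fixed before the numbers come in, so the story can’t quietly adjust to fit them.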

You’re Marketing to a Customer Who No Longer Exists

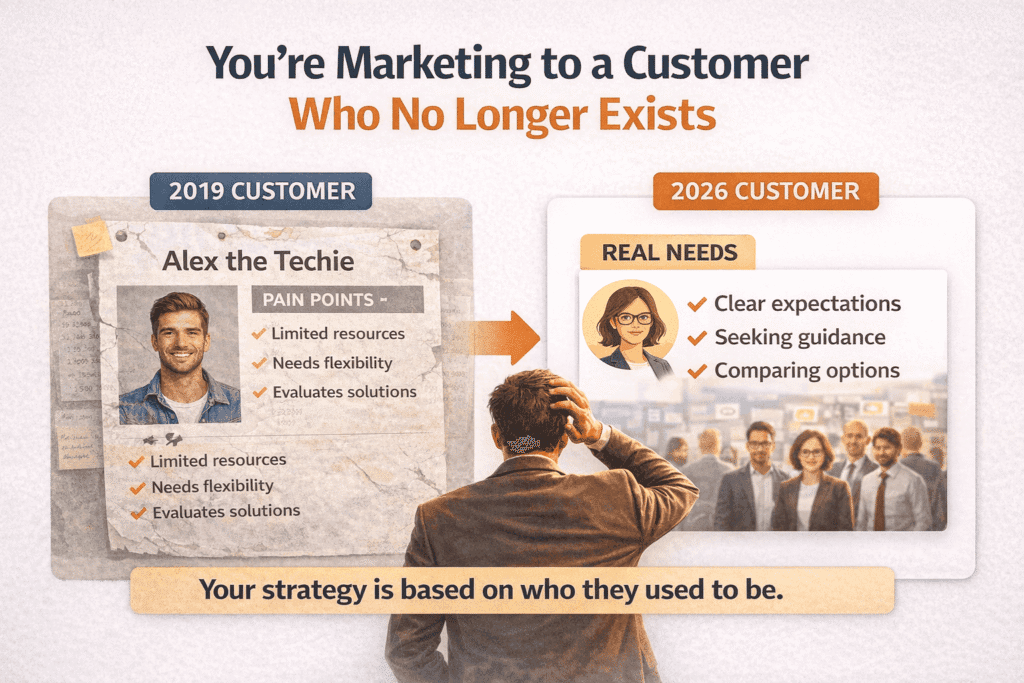

There’s a persona document somewhere in your shared drive. It has a name, probably a stock photo, definitely a list of pain points. And it was written at a specific moment in time — a product launch, an agency kickoff, a strategy session — that is no longer the moment you’re in.

Nobody updated it. Not because anyone forgot, but because there was always something more urgent to do, and besides, it still felt roughly accurate. That’s the thing about a persona going stale — it doesn’t announce itself. The document looks the same. The messaging built around it looks the same. The only thing that changes is that it quietly stops working as well as it used to, and nobody immediately connects those two facts.

The version of your customer that lives inside your organization is built from a specific set of inputs: the early adopters who gave feedback, the sales calls that closed, the support tickets that got escalated, the loudest voices in whatever community you were paying attention to at the time. That’s not a representative sample of who’s buying now. It’s a historical record of who was buying then, filtered through the people inside your company who were paying attention.

Here’s where it gets uncomfortable. As a product grows, the buyer profile shifts in ways that aren’t always obvious from the inside. Early adopters are a specific kind of person — they tolerate rough edges, they seek out solutions actively, they respond to direct and technical messaging because they already understand the problem space. The buyers who come after them don’t. The messaging that converted the first few hundred customers can quietly repel the next thousand, because it’s written for someone with context that the new audience simply doesn’t have yet.

What you’ll notice, if you’re watching for it, is a slow degradation in the quality of the conversation rather than the quantity. Leads still come in. But the sales cycle gets longer. Objections start appearing that didn’t use to. Customers arrive with expectations that don’t match what you built. The instinct is to look at the funnel, the creative, the offer. The actual answer is upstream of all of that.

This is one of those digital marketing mistakes that waste time in the most invisible way — because nothing breaks loudly enough to trigger a real investigation until you’re already months behind.

The research instinct is understandable but overrated as a fix. Surveys help. Customer interviews help. But they have their own lag, their own selection bias — the customers who respond to research requests are not a neutral sample — and they’re almost always designed to validate a direction rather than genuinely challenge one. You get back a report that confirms something you mostly already believed, and call it insight.

What’s harder to systematize, and more useful, is staying close to the raw sales conversation — not the CRM summary, but the actual call. The specific words someone uses before your framing has reached them. The comparison they make that you weren’t expecting. The objection that doesn’t fit any of your prepared responses. That’s where the drift shows up first, months before it appears in your conversion data.

The customer you’re marketing to exists somewhere between who actually showed up last quarter and who you’ve collectively decided they are. Those two things are rarely as close as the persona document makes them look.

What Actually Happens When the Algorithm Changes

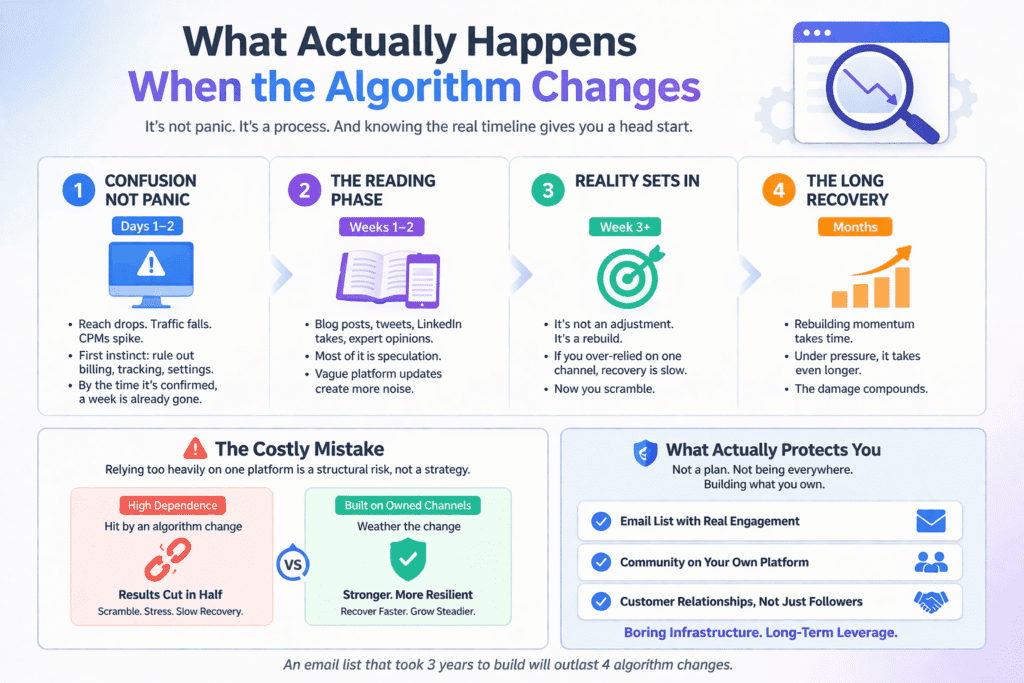

The first thing that happens is confusion, not panic.

That’s worth saying clearly because most of the advice around algorithm changes assumes you’ll know immediately what hit you. You won’t. When reach drops or traffic falls or CPMs suddenly spike, the first two days are spent ruling out everything else — a billing issue, a tracking error, something someone changed in the campaign settings without mentioning it. By the time you’ve confirmed it’s actually the platform, you’ve already lost a week to the wrong investigation.

Then comes the reading phase. Blog posts, Twitter threads, LinkedIn takes from people who sound authoritative. And here’s the thing about that — the commentary that circulates in the first two weeks after a major algorithm shift is almost entirely speculation. The people writing with the most confidence are usually the ones who understand it least. The platforms themselves release vague guidance — Google’s own Search Central Blog is a useful reference point, though it rarely tells you as much as you need in the immediate aftermath of a core update.

None of this is avoidable, exactly. It’s just what the process actually looks like from the inside, as opposed to how it gets described in retrospect when someone writes a case study about how they navigated it successfully.

The part that actually costs you isn’t the confusion phase. It’s what comes after, when you realize that recovery isn’t a matter of adjusting your strategy — it’s starting over. If a channel was driving the majority of your results and it gets cut in half by a platform decision you had no input on, you don’t pivot gracefully to something else. You scramble toward something else that isn’t ready yet, that hasn’t been built up, that has no momentum behind it. Building momentum takes time under normal circumstances. Building it under pressure, while also trying to claw back what you lost, is a different thing entirely.

This is where digital marketing mistakes that waste time stop being about tactics and start being about structure — specifically, the structural decision to let one platform carry more weight than your business can afford to lose.

In my experience, the teams that came through these shifts in the best shape weren’t the ones with contingency plans documented somewhere. They were the ones who had, almost accidentally, been building something off-platform at the same time: an email list that was actually engaged, a community that existed somewhere they controlled, a customer relationship that didn’t depend on a feed algorithm deciding to show their content that day. Not because they predicted anything. Just because they’d been doing the unglamorous work alongside the growth that felt more exciting.

The diversification advice you’ll hear — be on multiple channels, don’t build on rented land — is correct in principle and almost useless in practice, because it gets interpreted as “be everywhere,” which just means being spread thin across six platforms while building nothing substantial on any of them. That’s not protection. That’s just distributed mediocrity.

What actually protects you is narrower and less satisfying to implement: pick one or two things you own, build them slowly and consistently, and treat them as boring infrastructure rather than growth levers. An email list that took three years to build and never drove a spike in your weekly metrics will outlast four algorithm changes. That’s not a thrilling insight. It’s just what the recovery timeline looks like when you map it against people who had that list versus people who didn’t.

Busy Is Not the Same as Building

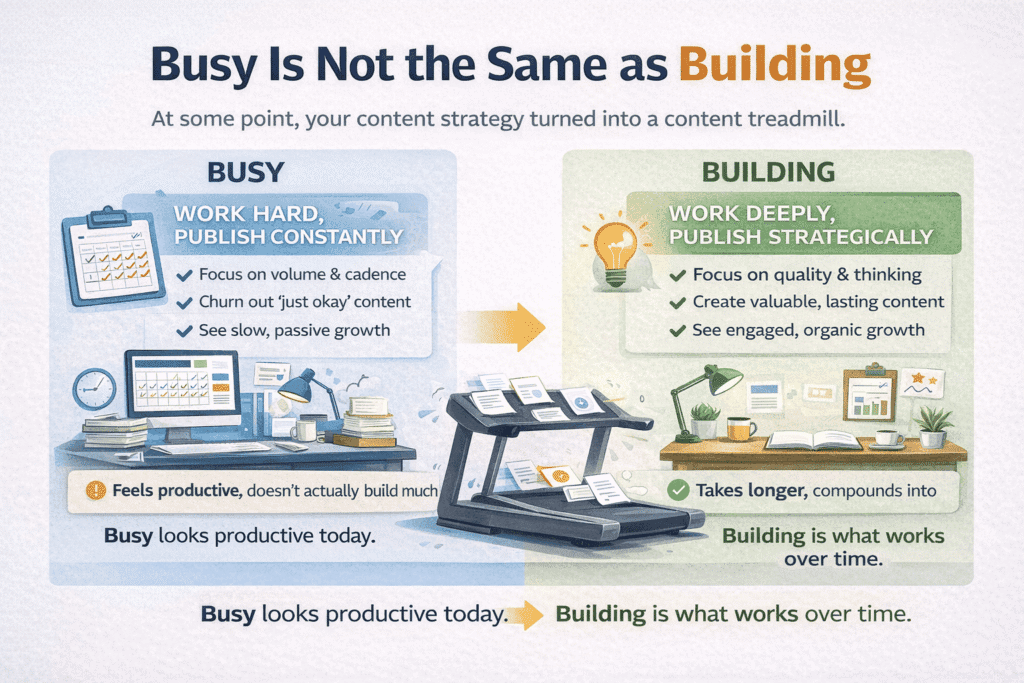

At some point, publishing four times a week stopped being a content strategy and became a content obligation.

Nobody decides this consciously. It accumulates. You start posting consistently because consistency matters — and it does, genuinely — and then consistency becomes the cadence, and the cadence becomes the expectation, and suddenly the question isn’t “what’s worth saying this week” but “what can we get out by Thursday.” The goal quietly shifts from building something to maintaining something, and those are not the same activity even though they look identical from the outside.

The output keeps coming. The calendar stays full. And the metrics (reach, impressions, follower count) move in the right direction, slowly, which is enough to keep the whole thing going. What doesn’t get measured is what the audience is actually taking away: whether the people consuming the content are developing any particular relationship with the brand, or just scrolling past it because it showed up. According to Content Marketing Institute’s annual research, most marketing teams still struggle to connect content output to meaningful business outcomes.

There’s a specific kind of marketing exhaustion that comes from this, and it hits the team before it hits the strategy. Writers running out of angles. Designers recycling formats. Marketers pitching ideas not because the ideas are good but because something needs to go in the slot. The work gets thinner. Not obviously — the production quality might stay the same or even improve — but the thinking behind it gets thinner, because the thinking is being rationed across too many pieces that all need to ship.

What’s strange about this is that it often coincides with a period that looks, from the outside, like marketing maturity. The brand is active. The content is consistent. There’s a recognizable visual identity. Everything appears professional and together. And underneath it, the team is exhausted and the audience is largely indifferent, and nobody quite wants to say that out loud because it would require admitting that a lot of the recent effort didn’t compound into anything.

The volume trap is particularly hard to escape because slowing down has real costs that are visible immediately, while the benefits of slowing down are invisible for months. Post less frequently and your reach drops. The algorithm notices. Follower growth stalls. Someone in leadership sees the numbers and asks what happened to the content program. So teams keep going, keep filling the calendar, keep producing things that are fine but not particularly memorable, because the alternative requires absorbing short-term pain for long-term gains that are genuinely hard to promise.

This is one of those digital marketing mistakes that waste time in a way that’s almost impossible to see while it’s happening — because the team is working hard, the content is going out, and everything looks like it’s functioning. The damage only becomes visible when you step back and ask what any of it actually built.

I don’t think there’s a clean answer to this. The honest version is that building something an audience actually values requires a different relationship with output than most marketing teams are structured to have. It requires being willing to publish less and think more, which runs directly against how marketing effort gets measured and reported in most organizations. That’s not a content strategy problem. It’s an incentive problem. And it often starts earlier than most people think — at the point of choosing the right marketing tools for your business, where the default metrics built into those tools begin shaping what success looks like before any strategy has been set.

What I’d push back on is the idea that the solution is simply “quality over quantity” — that framing is too easy and doesn’t account for the organizational pressure that creates the volume problem in the first place. The real question is whether the people making content decisions have enough protection from short-term metric pressure to make long-term content choices. In most teams, they don’t. And until that changes, the calendar will keep winning.

The Funnel Wasn’t the Problem

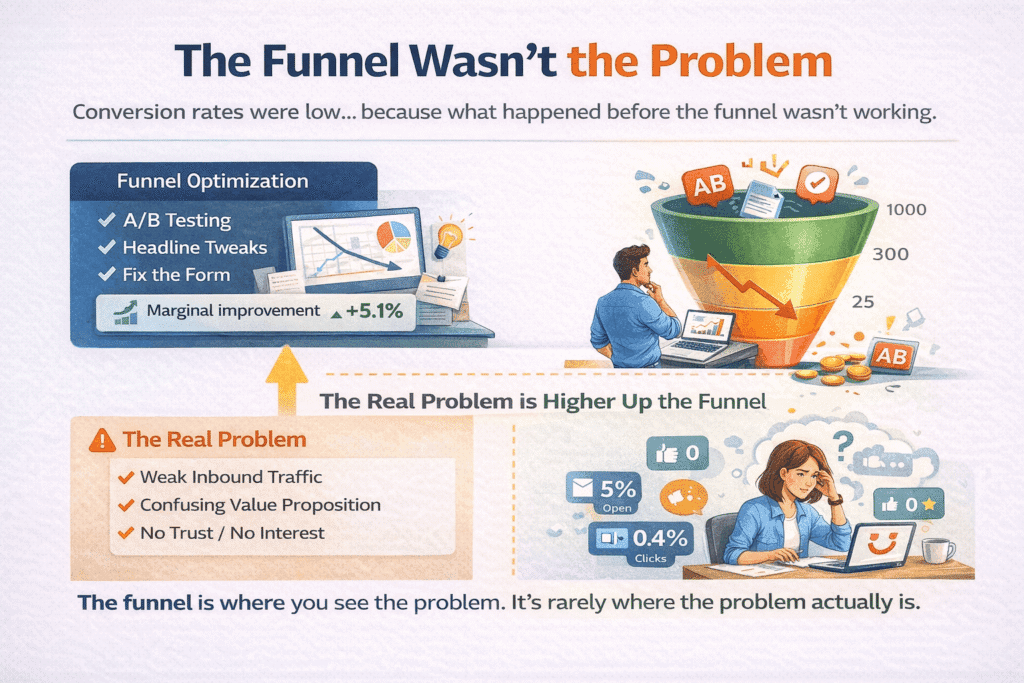

The uncomfortable thing about funnel optimization is how reasonable it always seems at the time.

Results soften. Someone pulls the conversion data. Drop-off at step two looks high, the landing page CTR is underwhelming, the form completion rate has been quietly declining for two months. So the team does what makes sense — they start testing. Headlines get rewritten. The form gets shortened. The CTA button changes color, then copy, then position. A/B tests run. Some of them produce lifts. Small ones, but real. The dashboard looks better than it did.

And the actual problem goes completely unaddressed.

Because here’s what the conversion data doesn’t tell you: whether the people arriving at the funnel had any business being there in the first place. Whether they understood what they were being asked to consider. Whether anything in the preceding thirty days of marketing had given them a reason to care. The funnel measures what happens at the decision point. It has nothing to say about everything that shaped the person before they got there.

Low conversion rates are a symptom. The funnel optimization instinct treats them as a diagnosis. And once you’ve committed to a quarter of CRO work, you’ve also committed — implicitly, without anyone deciding this explicitly — to the assumption that the funnel is where the problem lives. That assumption never gets tested. It just gets acted on, because acting on it produces activity, and activity produces reports, and reports give everyone something to point to while the underlying issue continues doing whatever it was doing before anyone looked at the landing page.

What makes this particularly hard to catch is the partial lift. You run enough tests, you’ll find something that improves the number slightly. Enough to satisfy the immediate pressure. Not enough to solve what’s actually wrong. And by the time that becomes clear, the team has moved on to the next quarter’s priorities, and nobody goes back to ask whether the improvement was real or just a small adjustment to a system that was still fundamentally pointed in the wrong direction.

I’ve watched this cycle run two or three times inside the same organization before anyone names it. The funnel gets optimized. Numbers improve marginally. The next dip gets blamed on the funnel again. More optimization. More marginal improvement. It becomes the default response to any conversion problem — because it worked, sort of, once — and because the alternative is sitting with a much harder question. This is precisely where digital marketing mistakes that waste time compound most quietly — not in a single bad decision, but in a cycle that feels productive while solving the wrong problem, repeatedly.

That question is something like: before someone reaches the funnel, what do they believe, and is that belief the one they need to have? Not in the abstract. Specifically — does this person understand what they’re being asked to do, do they have enough context to evaluate it honestly, and has anything in their experience of the brand so far given them a reason to say yes now rather than closing the tab and thinking about it later? Those questions don’t live in the funnel. They live in the content that ran three weeks ago, the ad they saw twice and half-ignored, the email they opened but didn’t click. Places that are harder to measure and slower to change and much less satisfying to put in a monthly report.

The funnel is the last place the problem shows up. It’s almost never where the problem started.

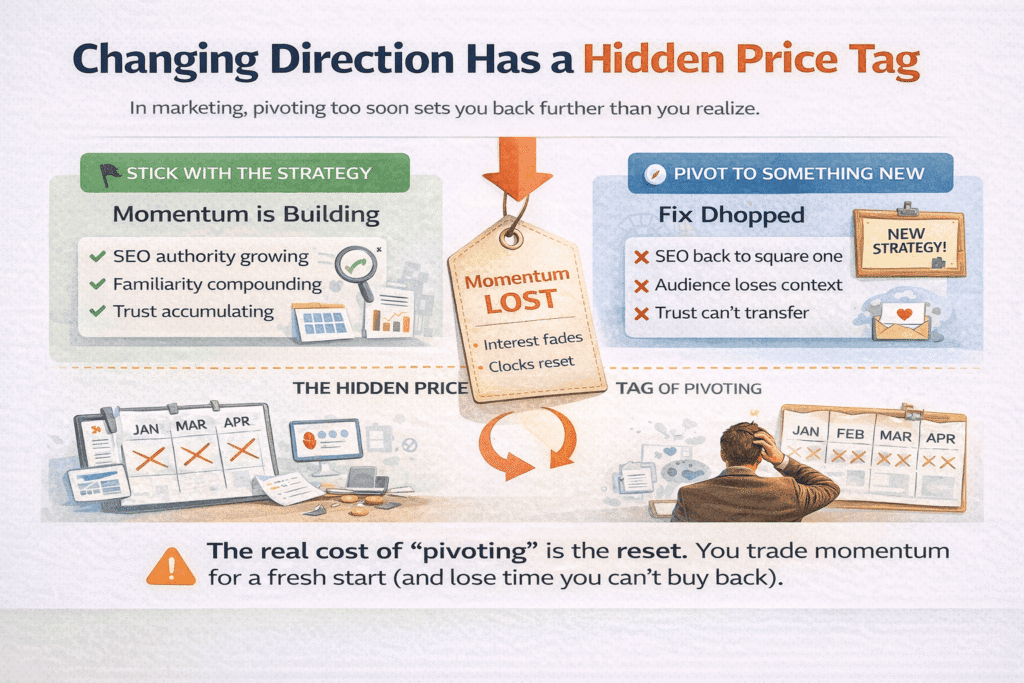

Changing Direction Has a Hidden Price Tag

Pivoting is one of those words that has been so thoroughly rehabilitated by startup culture that it no longer sounds like what it actually is: stopping something before it had a chance to work.

That’s not always wrong. Some things genuinely need to be stopped. A strategy that’s fundamentally broken doesn’t become less broken with more time and budget, and there’s a real cost to persisting with something out of sunk cost reasoning rather than genuine conviction. But the pivot instinct — the reflex toward changing direction when results aren’t coming fast enough — has a shadow side that almost never gets accounted for, which is what the change itself costs in terms of momentum that was quietly accumulating and is now gone.

Momentum in marketing is invisible until it isn’t. You don’t see it building. SEO authority accrues slowly across dozens of pieces of content over months before it starts producing meaningful organic traffic. Traffic holds. Leads keep coming in, slowly, on momentum. But the difference between what actually drives website traffic and what merely maintains it is one most teams never stop to examine, and that gap is where the delay begins.

Audience familiarity builds through repeated exposure before it produces trust. Brand recognition compounds across touchpoints before it starts shortening sales cycles. None of these show up in the weekly report as a line item. They’re not measurable in any satisfying real-time way. Which means when the decision to change direction gets made, nobody is accounting for them. They’re not on the table because they were never on the dashboard.

What gets counted instead is what’s visible — the campaign that isn’t converting, the channel that isn’t growing fast enough, the content program that hasn’t produced a spike. And because those things are visible and the accumulating momentum isn’t, the calculus always tilts toward change. It feels like a rational response to data. It is, in a narrow sense. But it’s rational in the way that cutting down a tree is rational if you’re only measuring the wood and not the shade.

The thing that’s genuinely hard to communicate to anyone who hasn’t watched this play out over a long enough timeline is that much of marketing’s value is positional rather than transactional. A transactional campaign produces results or it doesn’t, and you know within weeks. Positional work — the kind that shifts how an audience thinks about a category, or builds the kind of familiarity that makes a brand feel like the obvious choice — operates on a completely different clock. It doesn’t respond to the same feedback loops. And because it doesn’t, it’s almost always undervalued at exactly the moment it’s starting to work.

In my experience, the pivot conversation usually happens around month three or four of a strategy that needed twelve. Not because anyone is being impatient in a character-flaw sense, but because the organizational pressure to show results operates on a quarterly rhythm that has nothing to do with how long it actually takes to build something. The timeline mismatch is structural, and it produces a predictable outcome: strategies get abandoned just before they would have compounded, and the new direction starts its own clock, and the cycle repeats.

This is where digital marketing mistakes that waste time operate at their most systemic level — not as individual bad decisions but as a pattern that the organization keeps repeating because the incentive structure rewards responsiveness over persistence, and because the cost of changing direction is never fully calculated before the change gets made.

The cost that almost nobody accounts for is the reset. When you change messaging, the audience has to relearn what you’re about. When you change channels, you lose whatever algorithmic or relational equity you’d built. When you change your content positioning, the archive of work that was starting to establish authority becomes less coherent and therefore less powerful. None of these resets are fatal. But they’re not free either, and they take time — the same time you were trying to save by making the change in the first place.

The more useful question, before any pivot gets approved, is a simple and uncomfortable one: are we changing direction because the strategy is wrong, or because we’re not willing to wait long enough to find out? Those are different situations that can look identical from inside a quarterly review. Telling them apart requires a level of strategic honesty that most organizations find genuinely difficult — because one answer validates action and the other asks for patience, and patience is a much harder thing to sell in a room full of people looking at a flat graph.

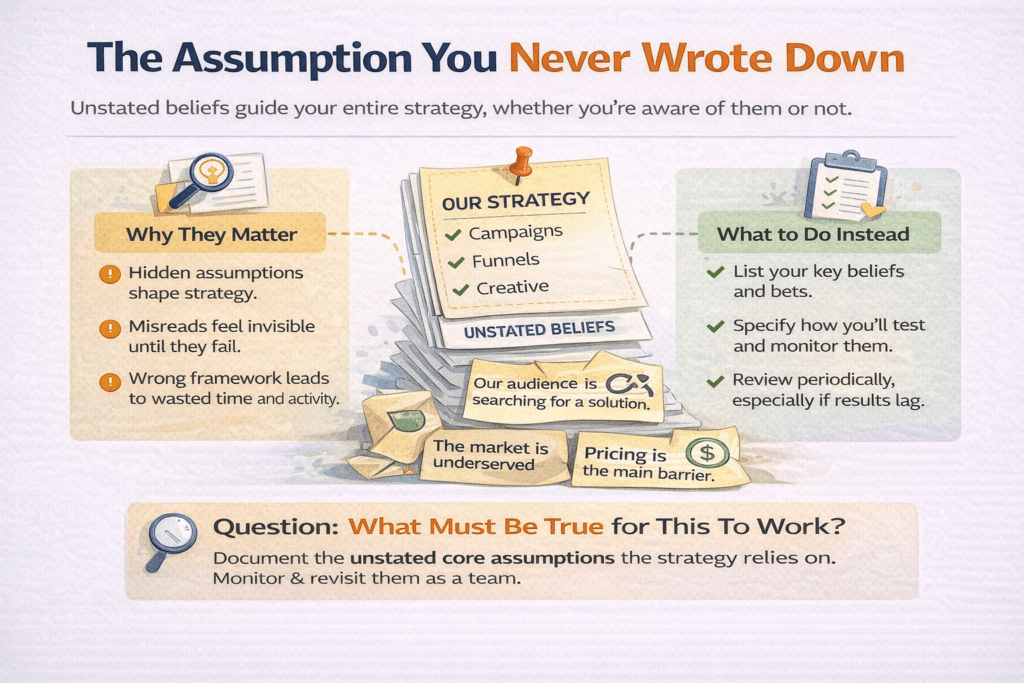

The Assumption You Never Wrote Down

Every marketing strategy is built on a set of beliefs about how the world works. Almost none of them get written down.

Not because anyone is being careless. Because they don’t feel like assumptions at the time — they feel like facts. The belief that your target audience is actively searching for a solution like yours. The belief that the problem you’re solving is felt urgently enough to prompt action. The belief that the channel you’re investing in reaches the people who actually buy, not just the people who engage. These aren’t hypotheses anyone tested and confirmed. They’re the water the strategy swims in — present everywhere, examined nowhere.

This matters most when something stops working and the team goes looking for answers in the wrong places. The campaign gets audited. The funnel gets examined. The creative gets reviewed. And nobody looks at the assumption underneath all of it, because the assumption was never written down anywhere — which means there’s no document to pull up, no meeting where it was agreed upon, no clear moment where someone said “we believe this” and another person said “yes, that seems right.” It just existed, silently, as the foundation everything else was built on.

What’s strange is that the assumption is usually visible in retrospect. You can almost always trace a failed strategy back to a belief that turned out to be wrong — not a tactical error, not a budget problem, not a creative miss, but a fundamental misread of something about the market or the audience or the timing that shaped every decision downstream. The strategic direction made complete sense given what was believed. The belief just wasn’t accurate. And because it was never written down, it was never stress-tested, never challenged, never held up against any evidence that might have complicated it before the strategy was built on top of it.

There’s a version of this that’s almost philosophical — the idea that all strategy is essentially a bet on a set of unprovable beliefs about the future, and that writing them down doesn’t make them less uncertain, just more visible. That’s true. But visible uncertainty is dramatically more useful than invisible uncertainty, because visible uncertainty can be monitored. You can say: we believe this is true, here’s what we’d expect to see if it is, and here’s what we’d see if it isn’t. That’s not a guarantee. It’s just a way of building a strategy that knows what it’s assuming — which is a completely different thing from a strategy that doesn’t.

In my experience, the assumptions that do the most damage are the ones that feel the most obvious. The ones nobody questioned in the briefing because questioning them would have felt like missing the point. The market is underserved. Customers want a simpler solution. Price is the main barrier. These things might be true.

They also might be true for a version of the market that’s smaller than it looks, or true in a way that doesn’t actually translate into the buying behavior the strategy depends on. The gap between “this is probably true” and “this is true in the specific way our strategy requires” is where a lot of digital marketing mistakes that waste time actually originate — not in the execution, but in the unexamined belief that made the execution feel justified.

The practical thing — and this is less exciting than it sounds — is to end every strategy conversation with a different kind of question than the ones usually asked. Not “what are we going to do” but “what would have to be true for this to work.” And then write those things down. Not as a formality. As a genuine list of beliefs that the strategy depends on, that someone is responsible for monitoring, that get revisited when results don’t match expectations rather than going straight to the tactics.

Because the alternative is what usually happens. Something doesn’t work. The team looks at the execution. The execution looks fine. The creative was solid, the targeting was reasonable, the budget was adequate. And nobody can explain why it didn’t land, because the thing that explains it — the assumption that turned out to be wrong — was never part of any document anyone can open.

It’s still there, though. It’s always been there. It just never had to introduce itself.
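If it helps to see what "writing those things down" could look like, here is a minimal sketch of an assumption list that someone owns and that comes up for review. Everything in it is an assumption of mine for illustration: the `Assumption` fields, the owners, the review dates, and the expected-evidence wording are hypothetical; only the three example beliefs come from the section above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Assumption:
    belief: str           # what the strategy needs to be true
    expect_if_true: str   # what we'd expect to see if it is
    expect_if_false: str  # what we'd expect to see if it isn't
    owner: str            # who is responsible for monitoring it (hypothetical)
    review_by: date       # when it gets looked at again (hypothetical)


def due_for_review(assumptions, today):
    """Return the assumptions whose review date has arrived or passed."""
    return [a for a in assumptions if a.review_by <= today]


# Illustrative entries only; owners, dates, and evidence are made up.
register = [
    Assumption(
        belief="Price is the main barrier",
        expect_if_true="Discount tests noticeably lift close rate",
        expect_if_false="Discounts change little; objections are about fit",
        owner="pricing lead",
        review_by=date(2025, 3, 1),
    ),
    Assumption(
        belief="Customers want a simpler solution",
        expect_if_true="Sales calls mention complexity unprompted",
        expect_if_false="Buyers ask for more features, not fewer",
        owner="product marketing",
        review_by=date(2025, 6, 1),
    ),
]

for item in due_for_review(register, today=date(2025, 4, 1)):
    print(f"Revisit: {item.belief} (owner: {item.owner})")
```

A spreadsheet would do the same job. What matters is that each belief has a named owner, a date, and a stated version of what being wrong would look like, so the review starts with the assumption instead of the tactics.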

The Only Audit That Actually Matters

Here’s what’s true about everything covered in this article: none of it is new information, exactly.

You already knew that metrics could be gamed by the person who chose them. You already had a suspicion that the customer persona was out of date. You’ve felt the unease of a strategy that looked busy but wasn’t building toward anything. These aren’t revelations. They’re recognitions — the specific discomfort of seeing something named clearly that you’d been aware of at some level without quite having the language for it.

What’s harder to sit with is the time question. Not the money. Teams absorb budget losses, reallocate, move on. The quarterly write-off is a known organizational ritual. What doesn’t get processed in the same way is the six months of compounding that didn’t happen, the audience trust that got reset, the momentum that was starting to exist and then wasn’t. Those don’t show up as a line item anywhere. They just show up as a future that’s slightly further away than it needed to be.

The thing I keep coming back to — after watching these patterns repeat across different teams, different industries, different budget sizes — is that the expensive mistakes almost never look like mistakes when they’re being made. They look like reasonable responses to available information. A pivot that makes sense given the data. An optimization that addresses the visible problem. A strategy built on assumptions that seemed too obvious to question. The error only becomes legible later, and by then it’s layered under enough subsequent decisions that most people never find it.

This is the thread running through every pattern of digital marketing mistakes that waste time — not that the decisions were reckless, but that they were reasonable. Reasonable given the metrics that were being tracked. Reasonable given the customer model that hadn’t been updated. Reasonable given a strategy built on assumptions that felt like facts. The reasonableness is what makes them so hard to catch and so expensive to carry.

Which is not an argument for paralysis. It’s an argument for a different kind of attention — the kind that stays curious about the belief underneath the tactic, that asks what the data isn’t measuring, that treats the customer as someone who keeps changing rather than someone who was figured out once. That kind of attention doesn’t require a new framework or a different agency or a bigger budget.

It just requires being willing to ask the uncomfortable question before the results make it unavoidable.